CAIDA's Annual Report for 2015

Mission Statement: CAIDA investigates practical and theoretical aspects of the Internet, focusing on activities that:

- provide insight into the macroscopic function of Internet infrastructure, behavior, usage, and evolution,

- foster a collaborative environment in which data can be acquired, analyzed, and (as appropriate) shared,

- improve the integrity of the field of Internet science,

- inform science, technology, and communications public policies.

Executive Summary

This annual report summarizes CAIDA's activities for 2015 in the areas of research, infrastructure, and data collection and analysis. Our research projects span Internet topology, routing, security, economics, future Internet architectures, and policy. Our infrastructure, software development, and data sharing activities support measurement-based Internet research, both at CAIDA and around the world, with a focus on the health and integrity of the global Internet ecosystem.

Mapping the Internet. We continued to pursue Internet cartography, improving our IPv4 and IPv6 topology mapping capabilities using our expanding and extensible Ark measurement infrastructure. We improved the accuracy and sophistication of our topology annotation capabilities, including classification of ISPs and their business relationships. Using our evolving IP address alias resolution measurement system, we collected, curated, and released another Internet Topology Data Kit (ITDK).

Mapping Interconnection Connectivity and Congestion. We used the Ark infrastructure to support an ambitious collaboration with MIT to map the rich mesh of interconnection in the Internet, with a focus on congestion induced by evolving peering and traffic management practices of CDNs and access ISPs, including methods to detect and localize the congestion to specific points in networks. We undertook several studies to pursue different dimensions of this challenge: identification of interconnection borders from comprehensive measurements of the global Internet topology; identification of the actual physical location (facility) of an interconnection in specific circumstances; and mapping observed evidence of congestion at points of interconnection. We continued producing other related data collection and analysis to enable evaluation of these measurements in the larger context of the evolving ecosystem: quantifying a given ISP's global routing footprint; classification of autonomous systems (ASes) according to business type; and mapping ASes to their owning organizations. In parallel, we examined the peering ecosystem from an economic perspective, exploring fundamental weaknesses and systemic problems of the currently deployed economic framework of Internet interconnection that will continue to cause peering disputes between ASes.

Monitoring Global Internet Security and Stability. We conduct other global monitoring projects, which focus on security and stability aspects of the global Internet: traffic interception events (hijacks), macroscopic outages, and network filtering of spoofed packets. Each of these projects leverages the existing Ark infrastructure, but each has also required the development of new measurement, data aggregation, and analysis tools and infrastructure, now at various stages of development. We were tremendously excited to finally finish and release BGPStream, a software framework for processing large amounts of historical and live BGP measurement data. BGPStream serves as one of several data analysis components of our outage-detection monitoring infrastructure, a prototype of which was operating at the end of the year. We published four other papers that either use or leverage the results of Internet scanning and other unsolicited traffic to infer macroscopic properties of the Internet.

Future Internet Architectures. The current TCP/IP architecture is showing its age, and the slow uptake of its ostensible upgrade, IPv6, has inspired NSF and other research funding agencies around the world to invest in research on entirely new Internet architectures. We continue to help launch this moonshot from several angles -- routing, security, testbed, management -- while also pursuing and publishing results of six empirical studies of IPv6 deployment and evolution.

Public Policy. Our final research thrust is public policy, an area that expanded in 2015, due to requests from policymakers for empirical research results or guidance to inform industry tussles and telecommunication policies. Most notably, the FCC and AT&T selected CAIDA to be the Independent Measurement Expert in the context of the AT&T/DirecTV merger, which turned out to be as much of a challenge as it was an honor. We also published three position papers each aimed at optimizing different public policy outcomes in the face of a rapidly evolving information and communication technology landscape. We contributed to the development of frameworks for ethical assessment of Internet measurement research methods.

Our infrastructure operations activities also grew this year. We continued to operate active and passive measurement infrastructure with visibility into global Internet behavior, and associated software tools that facilitate network research and security vulnerability analysis. In addition to BGPStream, we expanded our infrastructure activities to include a client-server system for measuring compliance with BCP38 (ingress filtering best practices) across government, research, and commercial networks, and analysis of the resulting data in support of compliance efforts. Our 2014 efforts to expand data sharing by making older topology and some traffic data sets public have dramatically increased use of our data, as reflected in our data sharing statistics. In addition, we were happy to help launch DHS' new IMPACT data sharing initiative toward the end of the year.

Finally, as always, we engaged in a variety of tool development and outreach activities, including maintaining web sites, publishing 27 peer-reviewed papers, 3 technical reports, 3 workshop reports, 33 presentations, and 14 blog entries, and hosting 5 workshops. This report summarizes the status of our activities; details about our research are available in papers, presentations, and interactive resources on our web sites. We also provide listings and links to software tools and data sets shared, and statistics reflecting their usage. Finally, we offer a "CAIDA in numbers" section: statistics on our performance, financial reporting, and supporting resources, including visiting scholars and students, and all funding sources.

CAIDA's program plan for 2014-2017 is available at www.caida.org/about/progplan/progplan2014/. Please do not hesitate to send comments or questions to info at caida dot org.

Research and Analysis

Internet Topology Mapping, Measurement, Analysis, and Modeling

Maps of the Internet topology are an important tool for characterizing this critical infrastructure and understanding its macroscopic properties, dynamic behavior, performance, and evolution. They are also crucial for realistic modeling, simulation, and analysis of the Internet and other large-scale complex networks. These maps can be constructed for different layers (or granularities), e.g., fiber/copper cable, IP address, router, Points-of-Presence (PoPs), autonomous system (AS), ISP/organization. Router-level and PoP-level topology maps can powerfully inform and calibrate vulnerability assessments and situational awareness of critical network infrastructure. ISP-level topologies, sometimes called AS-level or interdomain routing topologies (although an ISP may own multiple ASes, so an AS-level graph is a slightly finer granularity), provide insights into technical, economic, policy, and security needs of the largely unregulated peering ecosystem. With support from the Department of Homeland Security (DHS) and the National Science Foundation, we continued support for our active measurement infrastructure Archipelago (Ark), which captures the most comprehensive set of Internet topology data made available to the research community. (Ark also supports a variety of cybersecurity and other network research and experimentation; details are in the Ark Infrastructure section of this report.)

This year CAIDA continued our collaboration with the MIT Computer Science and Artificial Intelligence Laboratory (MIT/CSAIL) on an NSF-funded project, Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion. The two most daunting technical challenges of this project are topological inference of the point of interconnection from the best available data sets, and then accurately quantifying evidence of congestion for a given interconnection. Expansion of our Ark measurement infrastructure into more residential broadband networks was essential to gathering data for both tasks. For the topology tasks, we undertook two separate but related studies: identifying borders between networks in traceroute data, and attempting to locate exactly where the physical interconnection occurs.

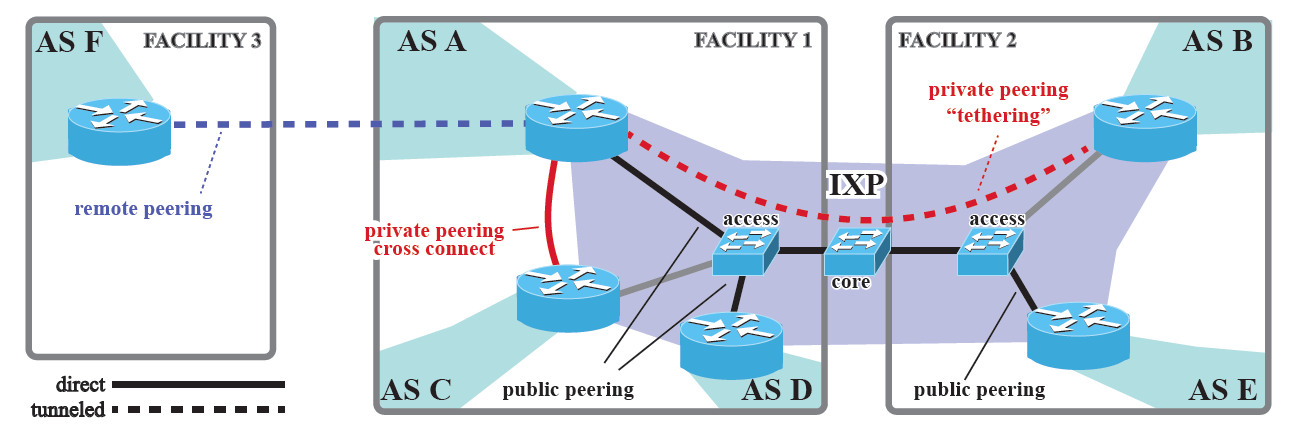

Mapping Peering Interconnections to a Facility: Interconnection facilities host routers of many networks and partner with IXPs to support many types of public and private interconnection.


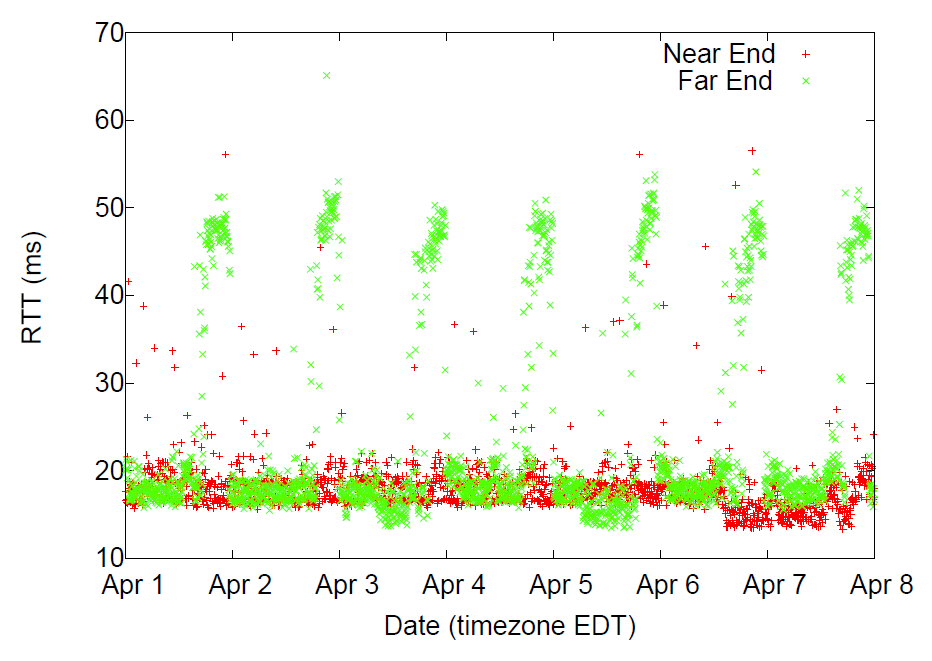

Measuring Interdomain Congestion and its Impact on QoE: Congestion on an interdomain link in April 2015. TSLP probes show evidence of sustained congestion during peak times in the local time zone of the vantage point (VP).


Identification of interconnection borders. Remarkably, there currently exists no rigorous method to accurately identify a border router, and its owner, from traceroute data. In 2015 we worked on heuristics that build on CAIDA's proven experience in inferring AS relationships and router-level topology to tackle this challenge. We intend to publish our validated method and software in 2016, but we used our preliminary method to assist with an MIT/Akamai/Duke collaboration to investigate server-to-server performance characteristics in the Internet's core. (A Server-to-Server View of the Internet, CoNEXT)

Identification of physical location (facility) of an interconnection. We used publicly available data about the presence of networks at different facilities, together with traceroute measurements executed from more than 8,500 available measurement servers scattered around the world, to identify the technical (engineering) approach used to establish an interconnection, which often narrowed down the possible set of facilities hosting that interconnection to a single one. Validation of our first experiment with this method revealed that it outperforms heuristics based on naming schemes and IP geolocation. Our study also revealed that in many cases the same router carries out both private interconnections and public peerings, in some cases via multiple Internet exchange points. ("Mapping Peering Interconnections to a Facility", best paper award at CoNEXT)

Mapping evidence of congestion at points of interconnection. We also began to design and develop a comprehensive system for collecting and processing time-series latency probe (TSLP) data focused on these points of interconnection. This system will perform adaptive probing of discovered interdomain links, maintain detailed historical state about topology and probing, provide efficient storage, analysis and retrieval of historical data, and enable visualization of the collected measurements. To support execution of this probing on resource-constrained nodes such as the FCC's MBA infrastructure, Matthew Luckie added functionality to scamper that allows a central machine to coordinate individual scamper probing processes on many monitors.
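The intuition behind TSLP can be illustrated with a small sketch. It assumes, as a simplification not spelled out in this report, that each interdomain link is probed at its near and far ends, and that an interval is suspicious when the far-end RTT rises well above its baseline while the near-end RTT does not. The function name, the 10 ms threshold, and the sample RTT values are all hypothetical; the production system described above is considerably more involved.

```python
def congested_intervals(near_rtts, far_rtts, threshold_ms=10.0):
    """Return indices of intervals with elevated far-end RTT only.

    near_rtts, far_rtts: equal-length lists of per-interval minimum RTTs
    (milliseconds) to the near and far ends of one interdomain link.
    The baseline for each side is its minimum over the whole series.
    """
    if len(near_rtts) != len(far_rtts):
        raise ValueError("series must have the same length")
    if not near_rtts:
        return []
    near_base = min(near_rtts)
    far_base = min(far_rtts)
    flagged = []
    for i, (near, far) in enumerate(zip(near_rtts, far_rtts)):
        far_elevated = far - far_base > threshold_ms
        near_elevated = near - near_base > threshold_ms
        # Elevation on the far side only points at the link itself,
        # rather than at the path leading up to it.
        if far_elevated and not near_elevated:
            flagged.append(i)
    return flagged


# Hypothetical RTT series (ms), one value per measurement interval.
near = [10.0, 10.0, 11.0, 10.0, 10.0, 11.0]
far = [12.0, 12.0, 13.0, 40.0, 42.0, 13.0]
suspect = congested_intervals(near, far)
print(suspect)
```

Intervals flagged repeatedly at the same local time of day would be consistent with the peak-hour pattern described in the congestion figure caption.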

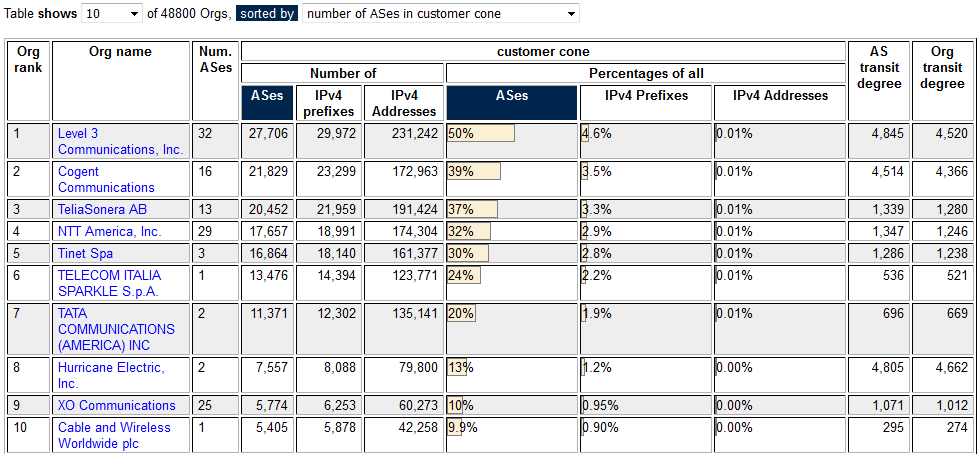

AS Rank by Org: AS Rank uses our AS2org mapping to rank organizations by sizes of the customer cones of ASes the organization operates.


Comparing the size of ISPs in terms of their routing footprint. We explored how CAIDA's ranking of Autonomous Systems compares to Dyn's IP Transit Intelligence AS ranking, acknowledging that Dyn's methodology is proprietary and we do not know exactly how it works beyond what Dyn has released publicly. This comparison revealed the difference in weighted ranking for select periods where IPv4 census information is available. The two rankings roughly agree on the top ASes, although their relative weighting diverges. (What's in a Ranking? Comparing Dyn's Baker's Dozen and CAIDA's AS Rank, July blog post)

Classifying Autonomous Systems (ASes) according to business type. We trained, validated, and documented our AS type classification algorithm. We investigated errors and added features to better classify content networks. The Positive Predictive Value (PPV) of the classifier is currently 73%.
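For reference, positive predictive value is the fraction of positive predictions that are correct, TP / (TP + FP). A minimal sketch of the arithmetic follows; the counts are hypothetical and chosen only for illustration, they are not CAIDA's validation data.

```python
def positive_predictive_value(true_positives: int, false_positives: int) -> float:
    """Fraction of positive predictions that are correct: TP / (TP + FP)."""
    predicted_positive = true_positives + false_positives
    if predicted_positive == 0:
        raise ValueError("no positive predictions; PPV is undefined")
    return true_positives / predicted_positive


# Hypothetical counts, for illustration only.
ppv = positive_predictive_value(true_positives=73, false_positives=27)
print(f"PPV = {ppv:.0%}")
```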

Mapping ASes to organizations. We refined our method to map Autonomous Systems (ASes) to the organizations that operate them, and curated and shared the resulting data set. Our AS-ranking interactive page ranks at the granularity of either individual ASes or these inferred organizations.
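To make the organization-level ranking concrete, here is a minimal sketch that ranks organizations by the size of the combined customer cones of their ASes. It assumes the simplest recursive definition of a customer cone (an AS plus everything reachable by following provider-to-customer links), which may differ from the cone definition AS Rank actually uses. The relationship data, the AS-to-organization mapping, and the function names are hypothetical; the AS numbers come from the range reserved for documentation.

```python
from collections import defaultdict


def customer_cone(asn, customers):
    """Return asn plus every AS reachable via provider->customer links."""
    cone, stack = {asn}, [asn]
    while stack:
        for cust in customers.get(stack.pop(), ()):
            if cust not in cone:
                cone.add(cust)
                stack.append(cust)
    return cone


def rank_orgs(customers, as2org):
    """Rank organizations by the size of the union of their ASes' cones."""
    org_cone = defaultdict(set)
    for asn, org in as2org.items():
        org_cone[org] |= customer_cone(asn, customers)
    # Largest cone first; ties broken alphabetically for determinism.
    return sorted(org_cone.items(), key=lambda kv: (-len(kv[1]), kv[0]))


# Hypothetical provider -> customers edges and AS-to-organization mapping.
customers = {64500: [64501, 64502], 64501: [64503], 64510: [64502]}
as2org = {64500: "OrgA", 64510: "OrgA", 64501: "OrgB",
          64502: "OrgC", 64503: "OrgC"}

ranking = [(org, len(cone)) for org, cone in rank_orgs(customers, as2org)]
print(ranking)
```

Taking the union of cones, rather than summing their sizes, avoids counting a customer twice when it buys transit from two ASes of the same organization.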

AIMS-7. We organized and hosted the 7th Workshop on Active Internet Measurement (AIMS-7).

Monitoring Global Internet Security and Stability

Our research in Internet security and stability monitoring in 2015 focused on three areas: 1) designing and creating technology that can serve as the basis for early-warning detection systems for large-scale Internet outages and that can characterize such events (e.g., networks, regions, and protocols affected over which periods); 2) developing methodologies to detect and characterize large-scale traffic interception events based on BGP hijacking, and implementing a prototype system capable of monitoring the Internet 24/7; and 3) studying and using measurement to estimate infrastructure vulnerabilities. This area of research inspired the creation of a new monitoring, analysis, and data sharing infrastructure: BGPStream, described in its own section below.

Detection of large-scale traffic interception events. We developed and deployed a system -- based on BGPStream -- for continuous live BGP monitoring, based on measurements provided by the RouteViews and RIPE RIS projects. We are also developing methodologies, and then implementing and deploying the corresponding live monitoring modules, to detect and classify (by severity) suspicious BGP events, e.g., MOAS, subMOAS, new AS links.
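As an illustration of the event types named above, the sketch below flags MOAS events (one prefix announced by more than one origin AS) and subMOAS events (a more-specific prefix announced by an origin that does not originate the covering prefix). It works on plain (prefix, origin AS) pairs and does not use the BGPStream API; the function names and sample announcements are hypothetical, with prefixes and AS numbers taken from ranges reserved for documentation.

```python
import ipaddress
from collections import defaultdict


def origins_by_prefix(announcements):
    """Group origin ASes by announced prefix."""
    origins = defaultdict(set)
    for prefix, origin_as in announcements:
        origins[ipaddress.ip_network(prefix)].add(origin_as)
    return dict(origins)


def moas_prefixes(origins):
    """Prefixes announced by more than one origin AS."""
    return {p: asns for p, asns in origins.items() if len(asns) > 1}


def submoas_pairs(origins):
    """(covering, more_specific) pairs where the more-specific prefix has
    an origin AS that does not also originate the covering prefix."""
    pairs = []
    for sub in origins:
        for sup in origins:
            if sub == sup or sub.version != sup.version:
                continue
            if sub.subnet_of(sup) and origins[sub] - origins[sup]:
                pairs.append((sup, sub))
    return pairs


# Hypothetical announcements: (prefix, origin AS).
announcements = [
    ("192.0.2.0/24", 64500),
    ("192.0.2.0/24", 64501),        # second origin -> MOAS
    ("198.51.100.0/24", 64502),
    ("198.51.100.128/25", 64503),   # more specific, new origin -> subMOAS
    ("203.0.113.0/24", 64504),
]

origins = origins_by_prefix(announcements)
moas = moas_prefixes(origins)
submoas = submoas_pairs(origins)
print(moas, submoas)
```

The pairwise comparison is quadratic in the number of prefixes, which is acceptable for a small example; a live monitor over a full routing table would more plausibly use a prefix trie.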

Outage detection monitoring infrastructure. Our macroscopic Internet outage detection project involves a number of big data research and infrastructure challenges. To support this project, we deployed three production instances of DBATS, our time-series database optimized for high-speed simultaneous updates of millions of metrics. All instances run on a dedicated IO-node of the Gordon supercomputer at SDSC. We extended our software libraries to support distributed writing to the same DBATS instance. In order to fully support use of BGP metrics in outage detection, we extended our software libraries for fast Internet meta-data lookups to enable efficient queries for network prefixes, and we developed monitoring modules based on BGPStream. In parallel, we improved our IBR-based (Internet background radiation) metrics for the analysis of large-scale Internet outages. We collaborated with MERIT Networks to deploy our software infrastructure for processing IBR traffic on their darknet, integrated their data into our analysis infrastructure, and began investigating differences in metrics extracted from the two different darknets. We also continued to design and develop the visual interface, adding several visualization modules (e.g., heatmaps, correlations, proportions) and support for live updates and dashboards. We introduced animations in visualization modules both for historic timelines and for live monitoring. In March and April 2015, we presented the IODA project to the US FCC and the DHS National Cybersecurity and Communications Integration Center (NCCIC), respectively. Finally, we applied these methods to analyze (and report) real-world events of macroscopic Internet disruption. We hope to have a full prototype system operational by Fall 2016. ("Partial Time Warner Cable outage today around 1.20am EDT", tweet)

Surveying BCP38 (blocking packets with forged source addresses) compliance. In May 2015, CAIDA took over stewardship of the Spoofer project from Robert Beverly of the Naval Postgraduate School. The objective of this project is to comprehensively measure the Internet's susceptibility to spoofed source address IP packets. Malicious users capitalize on the Internet's ability (and often, tendency) to forward packets with fake source IP addresses, for anonymity, indirection, targeted attacks, and security circumvention. Compromised hosts on networks that permit IP spoofing enable a wide variety of attacks, and new spoofed-source-based exploits regularly appear despite decades of filtering effort. We measure various source address types (invalid, valid, private), granularity (can you spoof your neighbor's IP address?), and location (which providers are employing source address validation?). Matthew Luckie was the lead on this project when he accepted a faculty position at the University of Waikato, and continues to provide technical leadership on the project from Waikato. By the end of 2015 we had redeployed the server instance to CAIDA and released updated client software that supports IPv6 on all platforms and coordinates measurement with the new CAIDA service. We have no peer-reviewed publication for this project yet, but we posted two blog entries, one on the project announcement and one on the first DHS S&T DDoS Defense PI Meeting in August 2015, where we presented introductory slides.

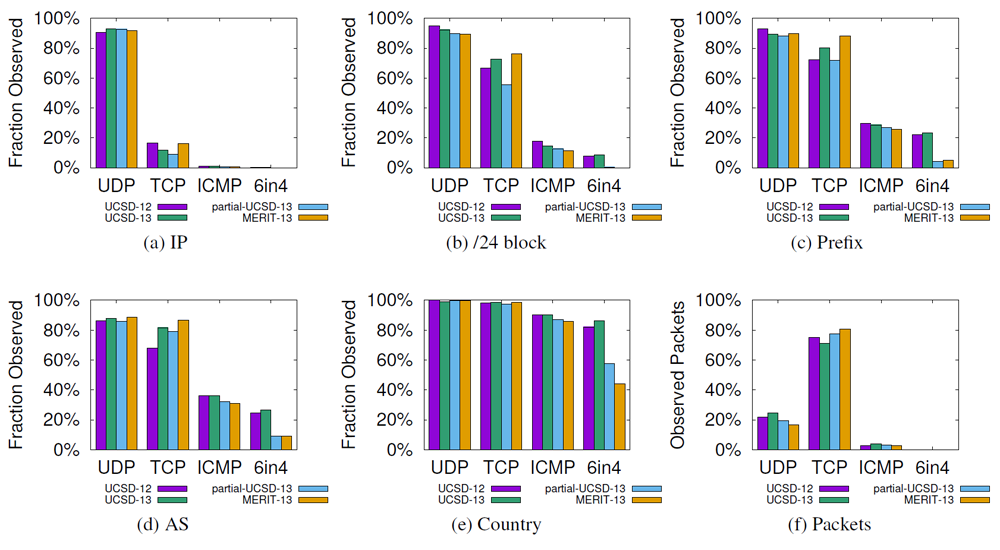

Leveraging Internet Background Radiation for Opportunistic Network Analysis: Fraction of IPv4 addresses, /24 blocks, prefixes, ASes, and country codes observed through the most popular transport layer protocols. At the /24 block, prefix, AS and country levels we observe a similar percentage of sources sending TCP and UDP. TCP accounts for most packets. (UCSD and MERIT darknets, August 2013)


Surveying TCP vulnerabilities on the Internet. We used Ark nodes as vantage points for active measurements to empirically study the resilience of deployed TCP implementations to blind in-window attacks. In such an attack, an off-path adversary disrupts an established connection by sending a packet that the victim believes came from its peer, causing data corruption or connection reset. We tested operating systems (and middleboxes deployed in front) of webservers in the wild in September 2015 and found 22% of connections vulnerable to in-window SYN and reset packets, 30% vulnerable to in-window data packets, and 38.4% vulnerable to at least one of the three in-window attacks we tested. In addition to evaluating commodity TCP stacks, we found vulnerabilities in 12 of the 14 routers and switches we characterized -- critical network infrastructure where the potential impact of any TCP vulnerabilities is particularly acute. This surprisingly high level of extant vulnerabilities in the most mature Internet transport protocol in use today is a perfect illustration of the Internet's fragility. Embedded in historical context, it also provides a strong case for more systematic, scientific, and longitudinal measurement and quantitative analysis of fundamental properties of critical Internet infrastructure, as well as for the importance of better mechanisms to get best security practices deployed. (Resilience of Deployed TCP to Blind Attacks, best paper IMC)
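The "in-window" condition at the heart of these attacks can be sketched as follows. A receiver that resets a connection on any RST whose sequence number merely falls inside its receive window is easier to attack blindly than one that, following the mitigation in RFC 5961, resets only on an exact match with the next expected sequence number and otherwise answers with a challenge ACK. The function names and return labels are hypothetical, and this is a simplified model rather than the measurement code used in the study; the zero-window special case is ignored.

```python
SEQ_SPACE = 2 ** 32  # TCP sequence numbers wrap modulo 2^32


def in_window(seq: int, rcv_nxt: int, rcv_wnd: int) -> bool:
    """True if seq lies in [rcv_nxt, rcv_nxt + rcv_wnd), with wraparound."""
    return (seq - rcv_nxt) % SEQ_SPACE < rcv_wnd


def handle_rst(seq: int, rcv_nxt: int, rcv_wnd: int, strict: bool = True) -> str:
    """Model a receiver's reaction to an incoming RST segment.

    strict=True models the RFC 5961 behavior; strict=False models the
    older behavior of accepting any in-window RST.
    """
    if not in_window(seq, rcv_nxt, rcv_wnd):
        return "drop"
    if seq == rcv_nxt or not strict:
        return "reset"
    return "challenge_ack"


# An off-path attacker guesses a sequence number somewhere in the window.
legacy = handle_rst(seq=1500, rcv_nxt=1000, rcv_wnd=65535, strict=False)
hardened = handle_rst(seq=1500, rcv_nxt=1000, rcv_wnd=65535, strict=True)
print(legacy, hardened)
```

With a 65535-byte window, the legacy receiver accepts any of 65535 guesses out of 2^32, while the hardened one accepts exactly one.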

Quantifying the threat of Internet scanning. Working with collaborators at ETH Zurich and FORTH-ICS, we used a unique combination of data sets to estimate how many hosts replied to scanners, and whether they were subsequently compromised. By analyzing unsampled NetFlow records from an R&E campus network captured during a comprehensive Internet-wide scan (the entire IPv4 space) in February 2011, we found that 2% of hosts scanned on this campus responded to the scanning packets. Correlating scan replies with IDS alerts from the same network revealed significant exploitation activity against at least 8% of those hosts responding. In the network we observed, at least 142 scanned hosts were eventually compromised. World-wide, the number of hosts that were compromised as a result of this scanning episode is likely much larger. (How Dangerous Is Internet Scanning? A Measurement Study of the Aftermath of an Internet-Wide Scan, TMA)

A Framework for Using IBR traffic for Research. We explored the utility of Internet Background Radiation (IBR) as a data source of Internet-wide measurements. Intuitively, IBR can provide insight into network properties when traffic from that network contains relevant information and is of sufficient volume. We turned this intuition into a scientific investigation, examining which networks send IBR, identifying aspects of IBR that enable opportunistic network inferences, and characterizing the frequency and granularity of traffic sources and how they impact inferential capabilities. We used three case studies to show that IBR can supplement existing techniques by improving coverage and/or diversity of analyzable networks while reducing measurement overhead. We also offered a framework for understanding the circumstances and properties for which unsolicited traffic is an appropriate data source for inference of macroscopic Internet properties, which can help other researchers assess its utility for a given study. This work is a part of Karyn Benson's PhD dissertation with the UCSD CSE department. (Leveraging Internet Background Radiation for Opportunistic Network Analysis, IMC)

Inferring IPv4 address space utilization. In collaboration with ETH, FORTH-ICS, Politecnico di Torino, and TU Berlin, we continued refining our method for inferring which IPv4 addresses are actually in use, and applied it to a diverse set of data obtained through different types of active and passive measurements. (Lost in Space: Improving Inference of IPv4 Address Space Utilization, IEEE JSAC 2016)

BGP Hackathon. On 12-13 November 2015, we hosted a planning and preparation meeting for the February 2016 BGP Hackathon, to set in motion supporting code development and service provisioning, including use of computational resources on SDSC's recently installed Comet supercomputer.

Economics

We study the Internet ecosystem from an economic perspective, capturing relevant interactions between network business relations, interdomain network topology, routing policies, and resulting interdomain traffic flows. In a newly NSF-funded collaboration with Georgia Tech, we are exploring fundamental weaknesses and systemic problems of the currently deployed framework of Internet interconnection that will continue to cause peering disputes between ASes. Next year we plan to propose alternate interconnection frameworks based on an inter-disciplinary, techno-economic perspective.

Shedding light on ISP peering practices. We investigated three sources of complexity in peering (interconnection) practices that preclude adoption of a formal approach to optimal peer selection, and showed that these complexities and limited information prevent a Tier-2 NSP from accurately forecasting the effect of its peering decisions. (Complexities in Internet Peering: Understanding the "Black" in the "Black Art", INFOCOM)

Reviewing obstacles to QoS deployment on the public Internet. We revisited the last 35 years of history related to the design and specification of Quality of Service (QoS) on the Internet, in hopes of offering some clarity to the current debates around service differentiation and traffic management. We reviewed the continual failure to get QoS capabilities deployed on the public Internet, including the technical challenges of the 1980s and 1990s, the market-oriented (business) challenges of the 1990s and 2000s, and recent regulatory challenges. We also reflected on lessons learned from the history of this failure, the resulting tensions and risks, and their implications for the future of Internet infrastructure regulation. (Adding Enhanced Services to the Internet: Lessons from History, TPRC)

Workshop on Internet Economics (WIE). We hosted the 5th annual WIE, bringing together researchers, commercial Internet facilities and service providers, technologists, economists, theorists, policy makers, and other stakeholders to empirically inform emerging regulatory and policy debates. This year we discussed the implications of Title II (common-carrier-based) regulation for issues we have looked at in the past, or those shaping current policy conversations. We discussed evolving approaches to interconnection across different segments of the ecosystem (e.g., content to access), QoE and QoS measurement techniques and their limitations, interconnection measurement and modeling challenges and opportunities, and transparency.

Constraints on Innovation. We presented on "sources of and barriers to innovation" at a workshop on The Digital Broadband Migration: First Principles for a Twenty First Century Innovation Policy, hosted by the University of Colorado's Silicon Flatirons program. (video, blog essay)

Future Internet Research

The current TCP/IP architecture is showing its age, and the Internet protocol standards development organization (the IETF) spent over a decade developing the IPv6 architecture, intending it to ultimately replace IPv4 and solve (at least) IPv4's biggest limitation in the 21st century: it has far fewer IP addresses than the world needs, and the address field in the IPv4 packet is of fixed length and not amenable to expansion. But uptake of the IPv6 protocol has languished, and some believe IPv6 will never actually be the default Internet protocol, and furthermore that IPv6 does not offer enough new functionality and capability to accommodate the needs of emerging communication environments, most specifically the "Internet of Things" and its cellular industry cousin, "5G". For over six years, NSF and other research funding agencies around the world have invested in research on entirely new Internet architectures, including resources to fund software prototypes, national-scale testbeds, and evaluation of candidate architectures in community-proposed network environments. We have been fortunate to be engaged in long-term studies of both IPv6 evolution, and a new architecture research project that allows us to draw on insights from the struggle to deploy IPv6 as we investigate the potential deployment paths for a much more radical change in the Internet's basic protocol architecture.

IPv6: Measuring and Modeling the IPv6 Transition

IPv6 Topology Structure. Our IPv6 measurement work has in past years focused on improving the fidelity, scope, and usability of IPv6 measurement technology. We continued measurements of IPv6 topology using our Ark measurement platform. As of the end of 2015, we had 55 Ark monitors capable of IPv6 probing. As part of our efforts to provide a comprehensive view of the IPv6 topology from core to edge, we compared topological characteristics of traceroute-observed IPv4 and IPv6 AS-core graphs in the January 2015 version of our popular online visualization, the IPv4 and IPv6 AS Core AS-Level Internet Graph.

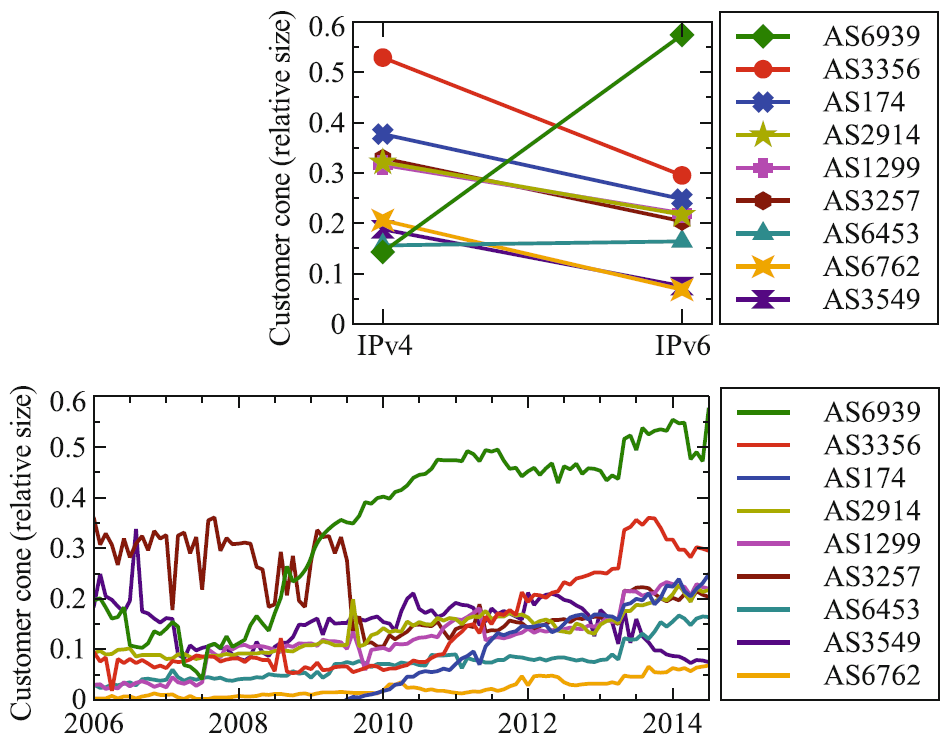

IPv6 AS Relationships, Cliques, and Congruence: Fraction of ASes in the customer cones of the largest 9 providers. Top plot compares IPv4 and IPv6 cones for July 2014. Bottom plot shows IPv4 cone size growth over time.


Inferring IPv6 routing relationships. Because the political economy driving IPv6 differs from that of IPv4, IPv4 AS routing relationship algorithms will not accurately infer routing relationships (e.g., customer, provider, peer) between autonomous systems in IPv6, encumbering our ability to model and understand IPv6 AS topology evolution. We modified CAIDA's IPv4 relationship inference algorithm to accurately infer IPv6 relationships using publicly available BGP data, and discovered that peering networks are converging toward the same routing relationship types in IPv4 and IPv6, but disparities remain due to differences in the transit-free clique and the still-dominant influence of Hurricane Electric in IPv6. (IPv6 AS Relationships, Cliques, and Congruence, PAM)

Improving performance with IPv4 and IPv6 multihoming. With more Internet service providers (ISPs) now offering customers both IPv6 and IPv4 connectivity, an obvious question is whether a user can leverage both in parallel to improve network resilience and application performance. We found that more than 60% of the current IPv4 and IPv6 AS paths are non-congruent at the AS level, but even under this condition, Multi-Path TCP (MPTCP) can still improve end-to-end performance of a single dual-stack connection, potentially up to the sum of the IPv4 and IPv6 bandwidths. (Leveraging the IPv4/IPv6 Identity Duality by using Multi-Path Transport, IEEE Global Internet)
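A minimal sketch of what an AS-level congruence check could look like: two paths between the same endpoints are treated as congruent when they traverse the same sequence of ASes. Collapsing consecutive repeats (AS-path prepending) before comparing is an assumption made here for illustration, not necessarily the procedure used in the paper, and the sample paths and function names are hypothetical.

```python
from itertools import groupby


def collapse_prepending(path):
    """Remove consecutive duplicate ASNs, e.g. [1, 1, 2] -> [1, 2]."""
    return [asn for asn, _ in groupby(path)]


def congruent(v4_path, v6_path):
    """True if both paths cross the same ASes in the same order."""
    return collapse_prepending(v4_path) == collapse_prepending(v6_path)


def non_congruent_fraction(path_pairs):
    """Fraction of (IPv4 path, IPv6 path) pairs that differ at the AS level."""
    pairs = list(path_pairs)
    if not pairs:
        raise ValueError("no path pairs given")
    return sum(not congruent(v4, v6) for v4, v6 in pairs) / len(pairs)


# Hypothetical AS paths toward the same dual-stack destinations.
pairs = [
    ([64500, 64501, 64502], [64500, 64501, 64501, 64502]),  # congruent
    ([64500, 64501, 64502], [64500, 64510, 64502]),         # different transit
    ([64500, 64502], [64500, 64511, 64512, 64502]),         # different length
]
fraction = non_congruent_fraction(pairs)
print(f"{fraction:.2f}")
```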

IPv6 router stability. We periodically probed IPv6 router interfaces to elicit IPv6 fragment identifiers, and analyzed the resulting identifier time series for evidence of reboots. We found evidence of clustered reboot events, popular maintenance windows, and correlation with globally visible control plane data. (Measuring and Characterizing IPv6 Router Availability, PAM)
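One way such a time series can reveal reboots is sketched below. It assumes, as a simplification, that an interface draws fragment identifiers from a counter that only increases while the router is up and restarts from a low value after a reboot, so that a decrease between consecutive samples suggests a reboot in that interval. The function name and sample data are hypothetical, and the sketch ignores counter wraparound and routers that generate identifiers randomly.

```python
def infer_reboot_intervals(samples):
    """Return (t_prev, t_cur) intervals in which the identifier decreased.

    samples: iterable of (timestamp, fragment_id) tuples sorted by time.
    A decrease is taken as a hint that the counter was reset.
    """
    intervals = []
    prev = None
    for timestamp, frag_id in samples:
        if prev is not None and frag_id < prev[1]:
            intervals.append((prev[0], timestamp))
        prev = (timestamp, frag_id)
    return intervals


# Hypothetical samples: (seconds since start, fragment identifier).
samples = [(0, 100), (3600, 104), (7200, 109), (10800, 2), (14400, 5)]
reboots = infer_reboot_intervals(samples)
print(reboots)
```

The probing interval bounds the precision of each inferred reboot time, since the reset can be located only between two consecutive samples.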

Comparing IPv4 and IPv6 path stability. More recently, we compared stability and performance measurements from the control and data planes in IPv6 and IPv4, finding that in both cases IPv6 exhibited less stability than IPv4. In the control plane, a few unstable prefixes generated most of the instability. In the data plane, IPv6 unavailability episodes were longer than those on IPv4. Some performance degradations are correlated, due to shared IPv4 and IPv6 infrastructure. (Characterizing IPv6 control and data plane stability, INFOCOM 2016)

Characterizing IPv6 Network Security Policy. We examined the extent to which IPv4 security best practices are also deployed in IPv6. We found several high-value target applications with a comparatively open security policy in IPv6. Specifically: (i) SSH, Telnet, and SNMP are more than twice as open on routers in IPv6 as they are in IPv4; (ii) nearly half of routers with BGP open were only open in IPv6; and (iii) in the server dataset, SNMP was twice as open in IPv6 as in IPv4. (Matthew Luckie collaborated on this project with researchers from ICSI, UIUC, and UMich while he was at CAIDA; the results were published in 2016. Don't Forget to Lock the Back Door! A Characterization of IPv6 Network Security Policy, NDSS 2016)

Named Data Networking: Next Phase (Project web site: http://www.named-data.net/)

The NDN project investigates Van Jacobson's proposed evolution from today's host-centric network architecture (IP) to a data-centric network architecture (NDN). This conceptually simple shift has far-reaching implications for how we design, develop, deploy, and use networks and applications. By naming data instead of locations, this architecture aims to transition the Internet from its current reliance on where data is located (addresses and hosts) to what the data is (the content that users and applications care about). Our activities for this project included research on new NDN-compatible routing protocols (via a subcontract with Dima Krioukov, now at Northeastern University), schematized trust models, testbed participation, and overall management support.

Scalable routing research. We contributed to the scalable routing research activities of the NDN project, via a subcontract to Northeastern. Dima and his postdoc Rodrigo Aldecoa developed a software package to generate graphs embedded in hyperbolic spaces that reproduce realistic network topologies, such as the Internet at the Autonomous System level. Being able to generate synthetic networks with real-world properties is critical for the analysis and understanding of real complex systems. They also developed and explored a new method for inferring hidden geometric coordinates of nodes in complex networks based on the number of common neighbors between the nodes. Mapping a network into a metric space improves our understanding of network dynamics and allows for efficient greedy routing using only local information. This new method improves both accuracy and speed over previous strategies. (Hyperbolic graph generator, Computer Physics Communications; Network geometry inference using common neighbors, Physical Review E)

Dima's team also analyzed BGP update behavior and found extreme volatility, long-term correlations, and memory effects, similar to seismic time series or temperature and stock market price fluctuations. Their statistical characterization of BGP update dynamics could serve as a basis for validating existing models of Internet interdomain routing and developing better ones. (Long-Range Correlations and Memory in the Dynamics of Internet Interdomain Routing, PLOS ONE)

Development of trust schemas to support NDN application development. We explored the ability of NDN to automate the authentication and signing of data through the use of trust schemas. Trust schemas can provide data consumers an automatic way to discover which keys to use to authenticate individual data packets, and provide data producers an automatic decision process about which keys to use to sign data packets and, if keys are missing, how to create keys while ensuring that they are used only within a narrowly defined scope (the least privilege principle). We have developed a set of trust schemas for several prototype NDN applications with different trust models of varying complexity. Our experience suggests that this approach has the potential of being generally applicable to a wide range of NDN applications, and even to other content-based network systems such as the HTTP-based web. (Schematizing Trust in Named Data Networking, ICN)
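The core idea of a trust schema, rules that tie the name of a piece of data to the name of the key allowed to sign it, can be illustrated with a small Python sketch. The name hierarchy, the rules, and the use of regular expressions are all hypothetical choices for this example; it does not use the NDN trust schema syntax or any NDN library, and it checks only names, not cryptographic signatures.

```python
import re

# Hypothetical rules: (pattern for the signed name, pattern for the allowed signer key).
RULES = [
    (re.compile(r"^/blog/article/[^/]+$"), re.compile(r"^/blog/author/[^/]+/KEY$")),
    (re.compile(r"^/blog/author/[^/]+/KEY$"), re.compile(r"^/blog/admin/KEY$")),
]
TRUST_ANCHOR = "/blog/admin/KEY"  # assumed pre-configured root of trust


def key_allowed(signed_name, key_name):
    """True if some rule permits key_name to sign signed_name."""
    return any(d.match(signed_name) and k.match(key_name) for d, k in RULES)


def chain_is_valid(names):
    """Check a chain [data, key that signed it, key that signed that key, ...].

    The chain must end at the trust anchor, and every link must satisfy a rule.
    """
    if not names or names[-1] != TRUST_ANCHOR:
        return False
    return all(key_allowed(signed, signer) for signed, signer in zip(names, names[1:]))


print(chain_is_valid(["/blog/article/hello", "/blog/author/alice/KEY", "/blog/admin/KEY"]))
print(chain_is_valid(["/blog/article/hello", "/blog/admin/KEY"]))
```

In this toy schema an article must be signed by an author key, so the second chain is rejected even though it ends at the trust anchor; this is the kind of narrowly scoped key usage that the least privilege principle calls for.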

Testbed infrastructure. We continued to maintain a local UCSD hub on the national NDN testbed using the NDN Platform software running on an Ubuntu server on SDSC's machine room floor. We also host a desktop computer configured with NDN-based video and audio software (provided by UCLA Center for Research in Engineering, Media, and Performance) to support team experiments and testing of instrumented environments, participatory sensing, and media distribution using NDN.

Management support. CAIDA continued to provide management and consulting support for the whole NDN project, including coordination and scheduling among the twelve institutions for research activities, weekly management and other strategy calls with PIs, and NDN Consortium teleconferences. We hosted the 5th NDN Retreat in February 2015 and published slides and related materials via NDN. We co-organized the 2nd Named Data Networking Community Meeting (NDNcomm 2015) hosted at UCLA in September 2015. The meeting provided a platform for attendees from 63 institutions across 13 countries to exchange research and development results, and provide feedback into the NDN architecture design evolution. Throughout the year we recorded meeting minutes and archived them on the project wiki.

We presented an invited talk, "A Brief History of a Future Internet: the Named Data Networking Architecture" at the USENIX Large Installation System Administration (LISA) conference in November 2015.

Policy

Our policy research expanded in 2015, mostly due to requests from policymakers for empirical research results or guidance to inform industry tussles and telecommunication policies, but we also contributed to developing frameworks for ethical assessment of Internet measurement research methods.

Technical Guidance on Measuring Internet Interconnection Performance Metrics. In 2015, the most exciting policy research project followed from AT&T and the U.S. Federal Communications Commission jointly selecting CAIDA as the Independent Measurement Expert to perform duties outlined in FCC order 15-94 approving the AT&T/DirecTV merger. Our responsibility (per the published statement of work) was to develop a methodology for AT&T to use to measure and report metrics related to performance of its interconnections. CAIDA submitted its initial draft of the methodology on 31 December 2015, publicly available in the FCC's electronic filing system. The FCC has not yet approved this methodology; we will begin a joint review in early 2016.

Using stable points to frame regulation of a rapidly evolving ICT ecosystem. As a means to achieve more sustainable regulatory frameworks for ICT industries, we introduced an approach based on finding stable points in the system architecture. We explored the origin and role of these stable points in a rapidly evolving system, and explained how they can provide a means to support development of policies, including adaptive regulation approaches, that are more likely to survive the rapid pace of evolution in technology. (Anchoring policy development around stable points: an approach to regulating the co-evolving ICT ecosystem, Telecommunications Policy)

Aspiring for a Better Future Internet. We went blue sky for a spell last year, motivated by watching many policy debates devolve into an ideological standstill over "What should the Internet be/do for society?" First, we catalogued as many policy-related aspirations for a future Internet as we could find published in some form. We then thought about how we might achieve them, and how we might measure progress toward them. We used the WIE 2014 workshop to refine our articulation of these principles, and published our current annotated list as a white paper. We intend this list to be a resource for the network and policy research communities as they consider challenges each aspiration raises, what new technology might help promote or measure progress toward a given aspiration, and whether we can create novel means to reduce conflicts among aspirations. (An Inventory of Aspirations for the Internet's future, working white paper)

Measuring the Return on Federal Investment in Cybersecurity R&D. The NSF's Request for Information (RFI) on the Federal Cybersecurity R&D Strategic Plan asked, "What innovative, transformational technologies have the potential to enhance the security, reliability, resiliency, and trustworthiness of the digital infrastructure, and to protect consumer privacy?" We recommended broadening this question, since today's security problems are neither new nor blocked on technical breakthroughs. Rather, they arise in a landscape of misaligned economic incentives, leadership vacuums, regulatory and legal barriers, and intrinsic complications of a globally connected ecosystem with radically distributed ownership. Worse, despite heavy investment in cybersecurity technologies for a decade, we lack data that can characterize the nature and extent of vulnerabilities, or assess the effectiveness of countermeasures. Public policy, including research and development funding strategies, must bridge the inherently disconnected views of cybersecurity used by policymakers and technologists, and prioritize development of ways to measure the return on federal investment in cybersecurity R&D. (Feedback on Federal Strategic Plan for Cybersecurity Research and Development, submitted to Federal Register)

Legal aspects of cyberwarfare and cyberattacks. We participated in a panel at the 9th Circuit Judicial Conference, on Cyberwarfare and Cyberattacks, an opportunity to explain to an audience full of judges the range and depth of legal and policy obstacles to improved cybersecurity. We published our comments on our blog.

Pragmatic Disclosure & Transparency Policies. Disclosure and transparency (D&T) policies comprise a significant component of the regulatory provisions in the FCC's 2015 Open Internet Report and Order (OIO). We presented a framework with which to interpret the OIO's D&T provisions within the larger context of other regulatory provisions as well as other mechanisms such as third-party performance testing platforms. We argued that the richness of D&T tools can help address the complex and diverse questions that arise in the context of broadband network management, but application of these tools should incentivize cooperation and voluntary disclosure by ISPs while also safeguarding interests of end users and intermediaries in the broadband Internet ecosystem. We applied our framework to prototypical examples of broadband network management scenarios, including the required reporting of packet loss. (The Road to an Open Internet is Paved with Pragmatic Disclosure & Transparency Policies, TPRC)

Ethical assessment frameworks for Internet research. We contributed to the evolving dialogue in the Internet research community on ethical issues pertinent to a range of information and communication technology research. Recognizing the increasing occurrence of circumstances where existing codes of conduct or law break down in the context of online research, we offered a more formal framework to navigate grey areas by applying common legal and ethical tenets, and embedded it into an initial decision support tool for this purpose. In our view, pursuing a more formal framework for conduct for ethical and legal ICTR research using online data can avoid harm to users, researchers, and the expanding field itself. (How to Throw the Race to the Bottom: Revisiting Signals for Ethical and Legal Research Using Online Data, ACM SIGCAS Computers and Society; and Cyber-security Research Ethics Decision Support (CREDS) Tool, ACM SIGCOMM Workshop on Ethics in Networked Systems Research)

Measurement Infrastructure and Data Sharing Projects

Archipelago (Ark)

CAIDA has conducted measurements of the Internet topology since 1998. Our current tools (since 2007, scamper deployed on the Archipelago measurement infrastructure) track global IP level connectivity by sending probe packets from a set of source monitors to millions of geographically distributed destinations across the IPv4 address space. Since 2008, Ark has continuously probed IPv6 address space as well. In addition to supporting these continuous measurements, and curating and sharing the data with the research community, Ark is a resource available for researchers to run their own vetted experiments. Ark provides a secure and stable platform that reduces the effort needed to develop, deploy and conduct sophisticated experiments. We maintain a list of researchers using the Ark infrastructure and data, and regularly email them with updates and important news.

We supported six cybersecurity-related global Internet measurement experiments, including our own spoofer, congestion and TCP vulnerability measurement projects. We added a measurement experiment from external researchers in Belgium that compares IPv4 and IPv6 middlebox behavior. From Ark vantage points, they probed all dual-stack servers in the top 1 Million Alexa web site list, using the data to classify middlebox behavior and its unexpected complications. (Towards a Middlebox Policy Taxonomy: Path Impairments, NetSciCom 2015)

In 2015 we continued using the Raspberry Pi platform for the Ark hardware, which drastically reduces deployment costs and has allowed us to expand our residential deployment. We deployed 28 Ark monitors to extend our global topological views into Argentina (1), Australia (2), Canada (1), China (2), Denmark (1), Germany (1), Ghana (1), Italy (2), New Zealand (2), Serbia (1), Sweden (2), Tanzania (1), and the United States (11). We continued large-scale macroscopic topology measurements using this platform. We completed the 8th calendar year of the IPv4 Routed /24 Topology Dataset collection, including automated DNS name lookups for discovered IP addresses. As part of these ongoing measurements, we collected 7.663 billion IPv4 traces in 51,564 files totaling 3.15 TB of data. For IPv6, we collected 264 million traces in 6,482 files totaling 124.04 GB.

We upgraded our aging central compute server for post-processing and analysis of Ark measurement data, using two repurposed nodes that previously served as part of SDSC's Trestles HPC platform. Each node has 32 cores, 64 gigabytes (GB) of memory, and 120 GB of flash memory.

We improved our measurement techniques and analysis methodologies for efficient probing and alias resolution inferences. We released an update to the MIDAR alias resolution system which included performance improvements and bugfixes.

We prepared Dolphin, our bulk DNS resolution tool, for future public release. Dolphin conducts parallel PTR DNS lookups of IPv4 and IPv6 addresses, on the order of millions of lookups per day from a single host. It retries failed lookups once per day for up to 3 days. Its logic ensures that each target is looked up only once per week regardless of TTL, which reduces load on authoritative DNS servers. The code is an extensible Python source file (845 lines) that requires no installation or root privileges for use.
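The weekly lookup and daily retry behavior described above can be sketched as a small scheduling function. This is not Dolphin's code: the `TargetState` structure, the function names, and the interpretation of "up to 3 days" as at most three daily retries within a weekly cycle are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

DAY = 86400          # seconds
WEEK = 7 * DAY
MAX_RETRIES = 3      # assumed reading of "retries once per day for up to 3 days"


@dataclass
class TargetState:
    last_attempt: Optional[float] = None  # time of the most recent lookup attempt
    cycle_start: Optional[float] = None   # time of the first attempt in the current week
    failures: int = 0                     # consecutive failures in the current week


def is_due(state, now):
    """Decide whether a target should be looked up at time `now`."""
    if state.last_attempt is None:
        return True                                  # never looked up
    if now - state.cycle_start >= WEEK:
        return True                                  # a new weekly cycle begins
    if 0 < state.failures <= MAX_RETRIES:
        return now - state.last_attempt >= DAY       # daily retry after a failure
    return False                                     # succeeded, or retries exhausted


def record_attempt(state, now, success):
    """Update the state after a lookup attempt."""
    if state.cycle_start is None or now - state.cycle_start >= WEEK:
        state.cycle_start = now
        state.failures = 0
    state.last_attempt = now
    state.failures = 0 if success else state.failures + 1


state = TargetState()
record_attempt(state, now=0, success=False)
print(is_due(state, now=DAY - 1), is_due(state, now=DAY))
```

Because the decision depends only on stored timestamps and not on the record's TTL, a target that resolved successfully is left alone until its next weekly cycle.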

BGPStream

In 2015, we finished the design and development of BGPStream, a software framework for processing large amounts of historical and live BGP measurement data and released it in October 2015. This software, designed with extensibility and scalability in mind, provides a time-ordered stream of data from heterogeneous sources and supports near-realtime data processing. In collaboration with Cisco, we began experimenting with access to BGP Monitoring Protocol (BMP) sources and exporting using OpenBMP. To complement BGPStream, we also built software infrastructure capable of monitoring BGP reachability of countries and ASes.
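The notion of a time-ordered stream built from heterogeneous sources can be illustrated with a generic merge of already-sorted record streams. The sketch below uses only the Python standard library and does not use the BGPStream API; the collector names and record tuples are made up for the example.

```python
import heapq


def merged_stream(*sources):
    """Lazily merge record streams into one stream ordered by timestamp.

    Each source is an iterable of (timestamp, collector, record) tuples that is
    already sorted by timestamp, as one would expect from a single dump file.
    """
    return heapq.merge(*sources, key=lambda rec: rec[0])


# Hypothetical records from two collectors (prefixes from documentation ranges).
collector_a = [
    (100, "collector-a", "announce 192.0.2.0/24"),
    (160, "collector-a", "withdraw 192.0.2.0/24"),
]
collector_b = [
    (90, "collector-b", "announce 198.51.100.0/24"),
    (130, "collector-b", "announce 203.0.113.0/24"),
]

for timestamp, collector, record in merged_stream(collector_a, collector_b):
    print(timestamp, collector, record)
```

Because `heapq.merge` consumes its inputs lazily, the same pattern works when new records keep arriving, which is one way to think about mixing historical and near-realtime data in a single sorted stream.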

Using this framework, we developed extensions to extract, from RIBs and individual BGP updates, the routing table exported by each Routeviews/RIS monitor (~860 monitors) with 5-minute resolution. One challenge is to contain the memory footprint of the data structures where the routing tables are stored. We purchased and deployed storage capacity for databases and historic archives. We retrieved and indexed all BGP data distributed by the Routeviews and RIPE RIS projects starting from 1999, for a total of ~12TB.
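A simplified view of reconstructing a monitor's routing table at 5-minute resolution is to start from a RIB dump and apply the subsequent updates, emitting a snapshot at the end of each interval. The record format and function name below are hypothetical, and the sketch copies the full table at every step, which is exactly the kind of memory cost a production implementation would need to avoid.

```python
def snapshots(rib, updates, start, end, step=300):
    """Yield (time, routing_table) every `step` seconds for one monitor.

    rib:     dict mapping prefix -> AS path, valid at time `start`.
    updates: list of (timestamp, kind, prefix, as_path) sorted by timestamp,
             where kind is "A" (announcement) or "W" (withdrawal).
    """
    table = dict(rib)
    i = 0
    t = start + step
    while t <= end:
        # Apply every update that arrived before the end of this interval.
        while i < len(updates) and updates[i][0] < t:
            _, kind, prefix, as_path = updates[i]
            if kind == "A":
                table[prefix] = as_path
            else:
                table.pop(prefix, None)
            i += 1
        yield t, dict(table)
        t += step


# Hypothetical RIB and updates for a single monitor.
rib = {"192.0.2.0/24": (64496,)}
updates = [
    (100, "A", "198.51.100.0/24", (64497,)),
    (400, "W", "192.0.2.0/24", None),
]
for when, table in snapshots(rib, updates, start=0, end=600):
    print(when, sorted(table))
```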

We started the development of techniques and software modules that use BGPStream to detect control-plane anomalies. Working with students from Stony Brook, we developed modules to identify changes in Multi-Origin AS (MOAS) prefixes observed in BGP, as well as in sub-prefixes generating similar conflicts between different origin ASes (sub-MOAS). We developed a module to extract a daily list of all recently announced prefixes to be periodically probed by Archipelago nodes with traceroutes. An inference engine will use this data to identify changes in paths when a suspicious event is detected.
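The two kinds of conflict mentioned above can be illustrated on a list of (prefix, origin AS) pairs. This sketch is not the code of the modules described here: the function names and input format are assumptions, the prefixes and AS numbers come from documentation ranges, and the quadratic sub-prefix search is only suitable for small inputs.

```python
import ipaddress
from collections import defaultdict


def find_moas(announcements):
    """Return {prefix: set of origin ASNs} for prefixes seen with more than one origin."""
    origins = defaultdict(set)
    for prefix, origin in announcements:
        origins[prefix].add(origin)
    return {p: o for p, o in origins.items() if len(o) > 1}


def find_sub_moas(announcements):
    """Return (covering prefix, its origin, sub-prefix, its origin) conflicts.

    A conflict is a more specific prefix originated by a different AS than a
    covering, less specific prefix.
    """
    nets = [(ipaddress.ip_network(p), asn) for p, asn in set(announcements)]
    conflicts = set()
    for sub, sub_asn in nets:
        for sup, sup_asn in nets:
            if (sub.version == sup.version and sub != sup
                    and sub.subnet_of(sup) and sub_asn != sup_asn):
                conflicts.add((str(sup), sup_asn, str(sub), sub_asn))
    return conflicts


# Hypothetical announcements observed in one time window.
anns = [
    ("192.0.2.0/24", 64496),
    ("192.0.2.0/24", 64497),        # same prefix, second origin: MOAS
    ("198.51.100.0/24", 64498),
    ("198.51.100.128/25", 64499),   # more specific, different origin: sub-MOAS
]
print(find_moas(anns))
print(find_sub_moas(anns))
```

Detecting changes, as opposed to conflicts, would then amount to comparing the results of these functions across consecutive time windows.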

In addition to one technical report (see Publication list below) we presented on BGPStream 5 times during 2015: at AIMS (March), RIPE 70 (May), ACM IMC (October), to the IETF (November), and at the mPlane Workshop (November).

UCSD Network Telescope

We develop and maintain a passive data collection system known as the UCSD Network Telescope to study Internet phenomena by monitoring and analyzing unsolicited traffic arriving at a globally routed underutilized /8 network. We are seeking to maximize the research utility of these data by enabling near-real-time data access to vetted researchers and solving the associated challenges in flexible storage, curation, and privacy-protected sharing of large volumes of data. As part of this effort, we share and support our Corsaro software for capture, processing, management, analysis, visualization and reporting on data collected with the UCSD Network Telescope. The current Near-Real-Time Network Telescope Dataset includes: (i) the most recent 60 days of raw telescope traffic (in pcap format); and (ii) aggregated flow data for all telescope traffic since February 2008 (in Corsaro flow tuple format).

Privacy-Sensitive Data Sharing / PREDICT / IMPACT

The Department of Homeland Security project Protected Repository for the Defense of Infrastructure Against Cyber Threats (PREDICT) provides vetted researchers with current network security-related data in a disclosure-controlled manner that respects the security, privacy, legal, and economic concerns of Internet users and network operators. Toward the end of 2015, the PREDICT project was renamed to the DHS Information Marketplace for Policy and Analysis of Cyber-risk & Trust (IMPACT) project. CAIDA supports IMPACT goals as Data Provider and Data Host and also contributes to developing technical, legal, and practical aspects of IMPACT policies and procedures. Data sets that CAIDA collects, curates, and/or shares through this project include: anonymized IP packet header traces on several backbone OC192 links in Chicago and San Jose, California; legacy and current Internet topology measurements from the Ark Platform (IPv4 Routed /24 Topology, IPv4 Routed /24 DNS Names, IPv6 Topology); heavily curated Internet Topology Data Kits (ITDKs); and Near-Real-Time Network Telescope Data. (See next section.)

Data

Data Collection Statistics

In 2015, CAIDA captured the following raw data. (Storage space compressed and uncompressed in parentheses.)

- traceroutes of IPv4 address space collected by our Ark infrastructure (1.03 TB/3.15 TB).

- starting Dec 2015, daily traceroutes to a single target IPv4 address in each announced prefix in RIPE and RouteViews BGP data (62.0 GB/189.5 GB).

- traceroutes of IPv6 address space collected by the subset of IPv6-enabled Ark monitors (24.3 GB/124.0 GB)

- passive traffic traces from the equinix-chicago monitor connected to a Tier-1 ISP's backbone links at the Equinix facilities in Chicago, IL (411.6 GB/1.0 TB)

- passive darkspace traffic traces collected by our UCSD Network Telescope (188.9 TB/489.7 TB)

- IPv4 Routed /24 Topology

- IPv4 Routed /24 DNS Names

- IPv4 Routed /24 AS Links

- IPv4 Prefix-Probing

- Macroscopic Internet Topology Data Kit ITDK-2015-08

- IPv6 Topology

- IPv6 DNS Names

- IPv6 AS Links

- Anonymized Internet Traces 2015

- Complete Routed-Trace DNS Lookups

We provided several academic researchers with access to our Near-Real-Time Network Telescope Dataset.

BGP-derived data. We maintained the AS Rank web site and the related AS Relationships Dataset that use BGP data and empirical analysis algorithms developed by CAIDA researchers to infer business (routing) relationships between ASes.

We published our AS Classification dataset which classifies ASes by business type, and documented our methodology for generating this dataset. We trained and validated the method with ground-truth data from PeeringDB, the largest source of self-reported data about the properties of ASes.

We list all available data, including legacy data, on our CAIDA Data page.

Data Distribution Statistics

Publicly Available Datasets require that users agree to CAIDA's Acceptable Use Policy for public data, but are otherwise freely available. The table lists the number of unique visitors and the total amount of data downloaded in 2015. In February 2014, we began to make more data unrestricted, now reflected in astronomical growth in downloads of this type of data.

![[Figure: request counts statistics for public data]](images/2015_annual_report_public_count.png)

![[Figure: download statistics for public data]](images/2015_annual_report_public_download.png)

* Volume downloaded per unique user per unique file. (Multiple downloads of same file, which is common, only counted once.)

Restricted Access Data Sets require that users: be academic or government researchers, or sponsors; request an account and provide a brief description of their intended use of the data; and agree to an Acceptable Use Policy.

![[Figure: request statistics for restricted data]](images/2015_annual_report_restricted_count.png)

![[Figure: download statistics for restricted data]](images/2015_annual_report_restricted_download.png)

* Volume downloaded per unique user per unique file. (Multiple downloads of same file, which is common, only counted once.)

Publications using public and/or restricted CAIDA data (by non-CAIDA authors)

We know of a total of 176 publications in 2015 by non-CAIDA authors that used these CAIDA data. (Not all publications are reported back to us, so this is a lower bound that we will update as we learn more.) Some of these papers used more than one dataset. A more complete list is at: Non-CAIDA Publications using CAIDA Data

Tools

CAIDA develops tools for Internet data collection, analysis and visualization, and shares them with the community.

In 2015 we released the public version of BGPStream, an open-source software framework for live and historical BGP data analysis. (See the BGPStream section.)

In January of 2015 we released version v0.5.0 of MIDAR tools. This release contains an implementation of the MIDAR alias resolution system described in "Internet-Scale IPv4 Alias Resolution with MIDAR" and provides three front-ends to MIDAR: a small-scale resolution tool, a medium-scale resolution system, and a large-scale distributed mode of the MIDAR system capable of testing an Internet-scale (at least 2 million) set of IP addresses with the MIDAR IP ID test, with all MIDAR stages and probe methods, from multiple monitor hosts.

We publicly released Marinda, a tuple space implementation in the Ruby programming language for decentralized communication and coordination, which can simplify network programming in a distributed system. It provides, for example, message-oriented synchronous and asynchronous group communication, a persistent connection, and automatic marshaling of structured data.

We continued development of DBATS, a high performing time series database. This database will serve as a back-end component for our next generation visualization and analysis system, Charthouse.

We officially replaced our dated HostDB bulk DNS resolution service with our new Python-based system called Dolphin. We developed a similar but more focused DNS resolution tool called qr. Both packages remain under development for a future public release.

In October we provided an early release of the Periscope Looking Glass API, made available for limited download by request while continuing development of the interface.

The following table displays CAIDA developed and currently supported tools and number of external downloads of each version during 2015.

| Tool | Description | Downloads |
|---|---|---|
| arkutil | RubyGem containing utility classes used by the Archipelago measurement infrastructure and the MIDAR alias-resolution system. | 419 |
| Autofocus | Internet traffic reports and time-series graphs. | 425 |
| Chart::Graph | A Perl module that provides a programmatic interface to several popular graphing packages. | 202 |
| CoralReef | Measures and analyzes passive Internet traffic monitor data. | 877 |
| Corsaro | Extensible software suite designed for large-scale analysis of passive trace data captured by darknets, but generic enough to be used with any type of passive trace data. | 723 |
| Cuttlefish | Produces animated graphs showing diurnal and geographical patterns. | 1250 |
| dnsstat | DNS traffic measurement utility. | 409 |
| iatmon | Ruby+C+libtrace analysis module that separates one-way traffic into defined subsets. | 126 |
| iffinder | Discovers IP interfaces belonging to the same router. | 494 |
| libsea | Scalable graph file format and graph library. | 266 |
| kapar | Graph-based IP alias resolution. | 248 |
| Marinda | A distributed tuple space implementation. | 203 |
| MIDAR | Monotonic ID-Based Alias Resolution tool that identifies IPv4 addresses belonging to the same router (aliases) and scales up to millions of nodes. | 421 |
| Motu | Dealiases pairs of IPv4 addresses. | 93 |
| mper | Probing engine for conducting network measurements with ICMP, UDP, and TCP probes. | 254 |
| otter | Visualizes arbitrary network data. | 899 |
| plot-latlong | Plots points on geographic maps. | 234 |
| plotpaths | Displays forward traceroute path data. | 90 |
| rb-mperio | RubyGem for writing network measurement scripts in Ruby that use the mper probing engine. | 1571 |
| RouterToAsAssignment | Assigns each router from a router-level graph to its Autonomous System (AS). | 717 |
| rv2atoms (including straightenRV) | A tool to analyze and process a Route Views table and compute BGP policy atoms. | 140 |
| scamper | A tool to actively probe the Internet to analyze topology and performance. | 2115 |
| sk_analysis_dump | A tool for analysis of traceroute-like topology data. | 198 |
| topostats | Computes various statistics on network topologies. | 319 |
| Walrus | Visualizes large graphs in three-dimensional space. | 2024 |

Publications

(ordered by primary topic area, but many cross multiple topics)

Internet Topology Mapping, Measurement, Analysis, and Modeling

- NAT Revelio: Detecting NAT444 in the ISP, Passive and Active Network Measurement Workshop (PAM), Mar 2016.

- Investigating Excessive Delays in Mobile Broadband Networks, ACM SIGCOMM Workshop on All Things Cellular (ATC), Aug 2015.

- Measuring Interdomain Congestion and its Impact on QoE, Workshop on Tracking Quality of Experience in the Internet, Oct 2015.

- Mapping Peering Interconnections to a Facility, ACM SIGCOMM Conference on emerging Networking EXperiments and Technologies (CoNEXT), Dec 2015.

- A Server-to-Server View of the Internet, ACM SIGCOMM Conference on emerging Networking EXperiments and Technologies (CoNEXT), Dec 2015.

- Workshop on Active Internet Measurement Systems (AIMS 2015) Report (March 2015), ACM SIGCOMM CCR, Jan 2016.

Monitoring Global Internet Security and Stability

- Analysis of a "/0" Stealth Scan from a Botnet, IEEE/ACM Transactions on Networking, Apr 2015.

- How Dangerous Is Internet Scanning? A Measurement Study of the Aftermath of an Internet-Wide Scan, Traffic Monitoring and Analysis Workshop (TMA), Apr 2015.

- Teaching Network Security With IP Darkspace Data, IEEE Transactions on Education, Apr 2015.

- Leveraging Internet Background Radiation for Opportunistic Network Analysis, Internet Measurement Conference (IMC), Oct 2015.

- Lost in Space: Improving Inference of IPv4 Address Space Utilization, published as a CAIDA technical report in September 2015, to appear in IEEE JSAC, June 2016.

Economics

- Complexities in Internet Peering: Understanding the "Black" in the "Black Art", IEEE Conference on Computer Communications (INFOCOM), Apr 2015.

- Workshop on Internet Economics (WIE2014) Report, ACM SIGCOMM Computer Communication Review (CCR), Jul 2015.

- Adding Enhanced Services to the Internet: Lessons from History, Telecommunications Policy Research Conference (TPRC), Sep 2015.

Policy

- How to Throw the Race to the Bottom: Revisiting Signals for Ethical and Legal Research Using Online Data, ACM SIGCAS Computers and Society, Feb 2015.

- An Inventory of Aspirations for the Internet's future, Center for Applied Internet Data Analysis (CAIDA), Apr 2015.

- Anchoring policy development around stable points: an approach to regulating the co-evolving ICT ecosystem, Telecommunications Policy, Aug 2015.

- Ethics Research & Development Summary: Cyber-security Research Ethics Decision Support (CREDS) Tool, ACM SIGCOMM Workshop on Ethics in Networked Systems Research, Aug 2015.

- Comments on Cybersecurity Research and Development Strategic Plan, Federal Register, Jun 2015.

- The Road to an Open Internet is Paved with Pragmatic Disclosure & Transparency Policies, Telecommunications Policy Research Conference (TPRC), Sep 2015.

- Infrastructure to support QoE measurement, Workshop on Tracking Quality of Experience in the Internet, Oct 2015.

Future Internet Research: IPv6

- IPv6 AS Relationships, Cliques, and Congruence, Passive and Active Network Measurement Workshop (PAM), Mar 2015.

- Measuring and Characterizing IPv6 Router Availability, Passive and Active Network Measurement Workshop (PAM), Mar 2015.

- Leveraging the IPv4/IPv6 Identity Duality by using Multi-Path Transport, IEEE Global Internet Symposium (GI), Apr 2015.

- Characterizing IPv6 control and data plane stability, IEEE Conference on Computer Communications (INFOCOM), Apr 2016.

- Don't Forget to Lock the Back Door! A Characterization of IPv6 Network Security Policy, Network and Distributed Systems Security (NDSS), Feb 2016.

Future Internet Research: Named Data Networking

- Network Mapping by Replaying Hyperbolic Growth, IEEE/ACM Transactions on Networking, Feb 2015.

- The First Named Data Networking Community Meeting (NDNcomm), ACM SIGCOMM Computer Communication Review (CCR), Apr 2015.

- Hyperbolic graph generator, Computer Physics Communications, Jun 2015.

- Named Data Networking Next Phase (NDN-NP) Project May 2014 - April 2015 Annual Report, Named Data Networking (NDN), Jun 2015.

- Network geometry inference using common neighbors, Physical Review E, Aug 2015.

- Schematizing Trust in Named Data Networking, ACM Conference on Information-Centric Networking (ICN), Sep 2015.

- Long-Range Correlations and Memory in the Dynamics of Internet Interdomain Routing, PLOS ONE, Nov 2015.

- The Second Named Data Networking Community Meeting (NDNcomm 2015), ACM SIGCOMM Computer Communication Review (CCR), Jan 2016.

BGPStream

- BGPStream: a software framework for live and historical BGP data analysis, Center for Applied Internet Data Analysis (CAIDA), Oct 2015.

CAIDA 2015 in Numbers

CAIDA researchers published 27 peer-reviewed publications and 3 technical reports. For details, please see CAIDA papers.

CAIDA researchers presented their results and findings at PAM (New York, NY), CoNEXT (Heidelberg, Germany), IMC (Tokyo, Japan), SIGCOMM (London, UK), TMA (Barcelona, Spain), and the IEEE Global Internet Symposium (Hong Kong, China), as well as at various other workshops, seminars, and program and PI meetings. A complete list of presented materials is available on the CAIDA Presentations page.

CAIDA organized and hosted 5 workshops: AIMS 2015: Workshop on Active Internet Measurements, the 5th NDN Retreat, an internal DHS HSARPA CSD-IMAM mid-term PI meeting, NDNcomm 2015: 2nd NDN Community Meeting (co-hosted with UCLA), and WIE 2015: 6th Workshop on Internet Economics.

In 2015, our web site www.caida.org attracted 279,155 unique visitors, with an average of 2.27 visits per visitor.

As of the end of December 2015, CAIDA employed 17 staff, hosted 11 visiting scholars, 3 postdocs, 3 graduate students, and 10 undergraduate students.

We received $3.14M to support our research activities from the following sources:

![[Figure: Allocations by funding source]](images/allocation_byfunding_2015.png)

| Funding Source | Amount ($) | Percentage |
|---|---|---|
| NSF | $1,957,972 | 62% |
| DHS | $937,805 | 30% |
| AT&T | $177,360 | 6% |
| Gift & Members | $74,365 | 2% |
| Total | $3,147,502 | 100% |

The charts below show CAIDA's expenses by type of operating expense and by program area:

![[Figure: Operating Expenses]](images/operating_expenses_2015.png)

| Expense Type | Amount ($) | Percentage |
|---|---|---|
| Labor | $2,097,524 | 62% |
| Indirect Costs (IDC) | $1,118,829 | 33% |
| Professional Development | $5,165 | <1% |
| Supplies & Expenses | $72,319 | 2% |
| Workshop & Visitor Support | $18,086 | 1% |
| CAIDA Travel | $49,839 | 1% |
| Equipment | $34,362 | 1% |
| Total | $3,396,123 | 100% |

![[Figure: Expenses by Program Area]](images/expenses_byprogram_2015.png)

| Program Area | Amount ($) | Percentage |
|---|---|---|
| Economic & Policy | $160,310 | 5% |
| Future Internet | $575,235 | 17% |
| Mapping & Congestion | $955,597 | 28% |
| Infrastructure | $826,550 | 24% |
| Security & Stability | $812,852 | 24% |
| Outreach | $55,449 | 2% |
| CAIDA Internal Operations | $10,130 | <1% |
| Total | $3,396,123 | 100% |

Supporting Resources

CAIDA's accomplishments are in large measure due to the high quality of our visiting students and collaborators. We are also fortunate to have financial and IT support from sponsors, members, collaborators, and the sites that host our monitors.

Visiting Scholars

- Rodrigo Aldecoa, postdoc at Northeastern (working with Dima Krioukov)

- Ruwaifa Anwar, PhD student intern from Stony Brook University

- Karyn Benson, PhD student at UC San Diego

- Adriano Faggiani, PhD student intern from University of Pisa

- Roderick Fanou, PhD student intern from University Carlos III of Madrid

- Alex Gamero-Garrido, PhD student at UC San Diego

- Julien Gilon, graduate student at Université de Liège, Belgium

- Vasileios Giotsas, postdoc from University College London (UCL)

- Siyuan Jia, PhD student from Northeastern University in Shenyang, China

- Natalie Larson, PhD student at UC San Diego

- Danny Lee, PhD student intern from Georgia Tech

- Ioana Livadariu, PhD student from University of Oslo

- Andra Lutu, PhD student intern from University Carlos III of Madrid

- Chiara Orsini, postdoc from University of Pisa, Italy

- Ramakrishna Padmanabhan, graduate student from the University of Maryland

Funding Sources

- NSF grant (CNS-1228994) Detection and analysis of large-scale Internet infrastructure outages

- DHS S&T contract (D15PC00188) Software Systems for Surveying Spoofing Susceptibility

- NSF grant (CNS-1513283) Internet Laboratory for Empirical Network Science: Next Phase

- NSF grant (CNS-1528148) Modeling IPv6 Adoption: A Measurement-driven Computational Approach

- NSF grant (CNS-1513847) Economics of Contractual Arrangements for Internet Interconnections

- NSF grant (CNS-1414177) Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion

- NSF grant (CNS-1423659) HIJACKS: Detecting and Characterizing Internet Traffic Interception based on BGP Hijacking

- DHS S&T Cooperative Agreement (FA8750-12-2-0326) Supporting Research and Development of Security Technologies through Network and Security Data Collection

- DHS S&T contract (N66001-12-C-0130) Cartographic Capabilities for Critical Cyberinfrastructure

- NSF grant (CNS-1345286) Named Data Networking Next Phase

- NSF grant (CNS-1111449) Exploring the evolution of IPv6: topology, performance, and traffic

- University research sponsorship from CableLabs, Comcast, Google, and NTT Corporation

![[Figure: The number of times each tool was downloaded from the CAIDA web site in 2015.]](images/caida-tools-downloads-2015.png)