CAIDA's Annual Report for 2014

Mission Statement: CAIDA investigates practical and theoretical aspects of the Internet, focusing on activities that:

- provide insight into the macroscopic function of Internet infrastructure, behavior, usage, and evolution,

- foster a collaborative environment in which data can be acquired, analyzed, and (as appropriate) shared,

- improve the integrity of the field of Internet science,

- inform science, technology, and communications public policies.

Executive Summary

This annual report covers CAIDA's activities in 2014, summarizing highlights from our research, infrastructure, data-sharing and outreach activities. Our research projects span Internet topology, routing, traffic, security and stability, future Internet architecture, economics and policy. Our infrastructure activities support measurement-based Internet studies, both at CAIDA and around the world, with focus on the health and integrity of the global Internet ecosystem.

Mapping the Internet. We continued to collect and share the largest Internet topology data sets (IPv4 and IPv6) available to academic researchers. We published four new research studies of how to more accurately infer topology, geolocation, and complex routing relationships from traceroute and BGP data, and three new studies on interdomain routing behavior. We also curate and share many aggregated derivative data sets, integrating strategic Internet measurement and data analysis capabilities to provide comprehensive annotated Internet topology maps that will improve our collective ability to identify, monitor, and model critical cyberinfrastructure. We released our eighth and ninth Internet Topology Data Kits (ITDK), which curated measurements taken in April and November 2014, respectively.

We also published two studies on using passive measurement data to improve the inference of IPv4 address space utilization and a study that detected network tarpits (hosts that masquerade as many fake hosts on a network) and assessed their impact on measurement surveys based on active measurements.

Mapping Interconnection Connectivity and Congestion. In a newly NSF-funded project, we built on our detailed understanding of and capability to measure and infer Internet interdomain topology to investigate a loudly debated issue in public policy: congestion at interconnection points. We published analyses of our initial results in both the flagship Internet Measurement Conference (IMC) and the Telecommunications Policy Research Conference (TPRC), as well as participated in panels in D.C. to educate policymakers on how measurement can inform Internet policy development.

Future Internet Architectures. We expanded our involvement in the Named Data Networking project, a global collaboration exploring a generalization of the Internet architecture that allows naming not just the endpoints, i.e., source and destination IP addresses, but rather the data (content) itself. By naming data instead of locations, the new architecture transforms data into a first-class entity while addressing several known technical challenges of today's Internet, including routing scalability, network security, content protection and privacy. We co-authored two high-level introductions for NDN -- one technical and one focused on societal implications -- participated in NDN routing research, testbed operations, and management duties, and provided web site support.

IPv6. Our IPv6 research effort has enabled us to improve the fidelity, scope, and usability of IPv6 measurement technology. The resulting data sets have informed our understanding of how observed IPv6 use correlates with other technical and socioeconomic data: address allocation, available geographic and traffic data, ISP organizational structure (commercial, government, educational), and political/regulatory factors influencing IPv6 deployment.

Security and Stability. We published new and updated versions of studies analyzing large-scale Internet outages and new methods for analysis of darknet (telescope) data. We started the development of BGPStream, a software framework for efficiently processing live and historical BGP measurement data. We also started a new project funded by NSF to develop methodologies and infrastructure to detect BGP man-in-the-middle attacks.

Economics and Policy. We published a study of the industry standard for volume-based transit pricing and whether it makes sense today, a journal version of the platform economics paper we published last year, the policy-relevant interpretation of our interdomain congestion measurement study (TPRC paper mentioned above), and we began a new policy paper proposing an approach for more auspicious regulation of the co-evolving ICT ecosystem. We also submitted a comment to the FCC public comment period on its Open Internet regulation, entitled "Approaches to transparency aimed at minimizing harm and maximizing investment."

We continued to expand the functionality of two traffic measurement infrastructures in response to the research community's needs, which enable us to share Internet backbone traffic data (anonymized) samples as well as real-time snapshots of traffic data shared from UCSD's network telescope. We support two community-oriented projects to facilitate sharing of this and other Internet measurement data: (1) DHS's Protected Repository for the Defense of Infrastructure Against Cyber Threats (PREDICT) to support protected sharing of security-related Internet data with researchers; (2) the Internet Measurement Data Catalog (DatCat), an index of information (metadata) about data sets and their availability under various usage policies. We published a draft survey of end-to-end mobile network measurement testbeds as a technical report while it undergoes peer review.

Finally, as always, we engaged in a variety of tool development, and outreach activities, including maintaining web sites, publishing 24 peer-reviewed papers, 4 technical reports, 3 workshop reports, 21 presentations, 17 blog entries, and hosting 6 workshops. Details of our activities are below. CAIDA's program plan for 2014-2017 is available at https://www.caida.org/about/progplan/progplan2014/. Please do not hesitate to send comments or questions to info at caida dot org.

Research Areas and Analysis

Mapping the Internet

Cartographic Capabilities for Critical Cyberinfrastructure

Goals

Maps of the Internet topology are an important tool for characterizing this critical infrastructure and understanding its macroscopic properties, dynamic behavior, performance, and evolution. They are also crucial for realistic modeling, simulation, and analysis of the Internet and other large-scale complex networks. These maps can be constructed for different layers (or granularities), e.g., fiber/copper cable, IP address, router, Points-of-Presence (PoPs), autonomous system (AS), ISP/organization. Router-level and PoP-level topology maps can powerfully inform and calibrate vulnerability assessments and situational awareness of critical network infrastructure. ISP-level topologies, sometimes called AS-level or interdomain routing topologies (although an ISP may own multiple ASes so an AS-level graph is a slightly finer granularity) provide insights into technical, economic, policy, and security needs of the largely unregulated peering ecosystem.

CAIDA has conducted measurements of the Internet macroscopic topology since 1998. Our current tools (since 2007, scamper deployed on the Archipelago measurement infrastructure) track global IP level connectivity by sending probe packets from a set of source monitors to millions of geographically distributed destinations across the IPv4 address space. Since 2008, we have continuously probed IPv6 address space as well.

Infrastructure and Data Support

- We continued large-scale macroscopic topology measurements using our Archipelago (Ark) measurement platform. We completed the 7th calendar year of the IPv4 Routed /24 Topology Dataset collection, including automated DNS name lookups for discovered IP addresses. We created the annual IPv4 AS Core Graph using January 2014 Ark data.

- We released two new Internet Topology Data Kits (ITDK), synthesizing the IPv4 Routed Topology Dataset and targeted alias resolution measurements conducted in April 2014 and November-December 2014. Both the April 2014 ITDK and December 2014 ITDK include: two related router-level topologies; router-to-AS assignments; geographic location of each router; and DNS lookups of all observed IP addresses.

- We deployed 28 Ark monitors in the 2014 calendar year, extending our global topological views into Bulgaria (2), Canada (2), Chile (2), Cyprus (1), France (2), Germany (3), Greece (1), India (1), Puerto Rico (1), Netherlands (2), Norway (1), Rwanda (1), Sweden (3), and United States (6). We also set up "ping-home" packets from monitors behind NATs to track hosts with dynamically assigned IP addresses. In 2014, we collected 6.892 billion IPv4 traces in 47,345 files totaling over 3 TB of data. For IPv6, we collected 192 million traces in 6,195 files totaling 95.74 GB.

- We released a new version of scamper, our all-purpose active probing tool for IPv4 and IPv6 networks. Improvements include: (1) scamper can be built in debug mode on FreeBSD after version 10; (2) scamper-ping supports probe intervals shorter than one second (the minimum is now one millisecond); (3) fragmented responses are handled correctly on Linux and SunOS, which is important for IPv6 IP-ID based alias resolution techniques (e.g., speedtrap, ally, radargun) on those platforms; (4) numerous bug fixes and improvements to the sc_ally pair-wise alias resolution utility.

- We published the CAIDA 2014 IPv4 & IPv6 AS Core AS-level Internet Graph. This visualization illustrates the extensive geographical scope and rich interconnectivity of nodes participating in the global Internet routing system, and compares snapshots of macroscopic connectivity in the IPv4 and IPv6 address space.

- We designed, developed, and deployed Dolphin, our bulk DNS resolution tool. Dolphin conducts parallel PTR DNS lookups of IPv4 and IPv6 addresses, on the order of millions of lookups per day from a single host. It retries failed lookups once per day for up to 3 days. The logic ensures that each target is looked up only once in 7 days regardless of TTL, which reduces load on authoritative DNS servers. The code, a single, extensible Python source file (845 lines), requires no installation or root privileges for use.
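The retry and refresh rules described for Dolphin can be illustrated with a minimal sketch. This is not Dolphin's code: the `LookupState` record and the `should_lookup` function are hypothetical names, and the sketch assumes that "up to 3 days" means at most three daily retries and that the 7-day interval is counted from the most recent attempt.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

RETRY_INTERVAL = timedelta(days=1)    # retry a failed lookup once per day
MAX_RETRIES = 3                       # assumed reading of "for up to 3 days"
REFRESH_INTERVAL = timedelta(days=7)  # at most one lookup per target in 7 days


@dataclass
class LookupState:
    """Hypothetical per-address bookkeeping record."""
    last_attempt: Optional[datetime] = None
    last_succeeded: bool = False
    retries: int = 0  # retries made since the last fresh attempt


def should_lookup(state: LookupState, now: datetime) -> bool:
    """Decide whether an address is due for a PTR lookup at time `now`."""
    if state.last_attempt is None:
        return True  # never looked up before
    age = now - state.last_attempt
    if not state.last_succeeded and state.retries < MAX_RETRIES:
        return age >= RETRY_INTERVAL  # failed recently: retry daily
    # Succeeded, or retries exhausted: wait the full refresh interval.
    # The DNS record's TTL is deliberately not consulted.
    return age >= REFRESH_INTERVAL
```

A scheduler built this way would call `should_lookup` for each candidate address before queuing it, so that the load placed on authoritative servers is bounded by the refresh interval rather than by record TTLs.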

Research Activities

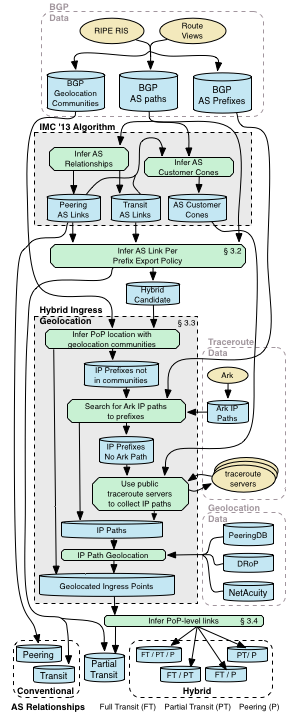

Figure 1. Overview of our process to infer complex AS relationships. (See Research Activity (5) and related IMC2014 paper.)


- We advanced the state of the art in automated methods for geolocating Internet resources in our paper, "DRoP: DNS-based Router Positioning", published in SIGCOMM CCR July 2014. For the paper, we compiled an extensive dictionary associating geographic strings (e.g., airport codes) with geophysical locations. We then searched a large set of router hostnames for these strings, assuming each autonomous naming domain uses geographic hints consistently within that domain. We used topology and performance data continually collected by our global measurement infrastructure to ascertain whether a given hint appears to co-locate the different hostnames in which it is found. Finally, we generalized geolocation hints into domain-specific rule sets. We generated a total of 1,711 rules covering 1,398 different domains and validated them using domain-specific ground truth we gathered for six domains. We extend this method to others through the beta version of DNS Decoded, CAIDA's public web interface for decoding organizational information such as geographic location, router type, and other information encoded in DNS hostnames. The interface allows researchers to decode hostnames, find rules for individual domains, look up encoding rules by name, or dump all the rule sets.

- We continued work to improve the performance of our AS type classification system algorithm. We investigated classes that were often incorrectly classified and added features to better classify content networks. The code now achieves an accuracy of 73%.

- We collaborated with Simula Research to develop a model that expresses BGP churn in terms of four measurable properties of the routing system, and applied the model to historical BGP update data from 2004-2012. We showed why the number of updates normalized by the size of the topology is constant, and why routing dynamics are qualitatively similar in IPv4 and IPv6. We also showed that the exponential growth of IPv6 churn was expected, as the underlying IPv6 topology was growing exponentially during the time period considered in the paper, "Revisiting BGP Churn Growth", published in SIGCOMM CCR.

- We took a second look at previous work detecting third-party addresses in traceroute traces with the IP Timestamp Option, by cross-validating a technique proposed by Marchetta et al. in PAM2013 with a first-principles approach. Our approach is rooted in the assumption that subnets between connected routers are often /30 or /31 because routers are often connected with point-to-point links. We infer whether an address in a traceroute path corresponds to the interface on a router that received the packet (the inbound interface) by attempting to infer whether its /30 or /31 subnet mate is an alias of the previous hop. We ran traceroutes from 8 Ark monitors to 80K randomly chosen destinations, and found that most observed addresses are configured on the inbound interface on a point-to-point link connecting two routers, i.e., are on-path. Because the technique from Marchetta et al. reports 70.9% to 74.9% of these addresses as being off-path, we conclude it is not reliable at inferring which addresses are off-path or third-party.

- In "Inferring Complex AS Relationships" we extended our AS relationship inference algorithm to infer more than the three simple relationship types: transit, peering, and sibling. We use BGP, traceroute, and geolocation data to enhance our algorithm to infer two types of complex relationships: hybrid relationships, where two ASes have different relationships at different interconnection points, and partial transit relationships, which restrict the scope of a customer relationship to the provider's peers and customers. (See Figure 1.)

- To gain a better understanding of the topological and economic structure of the Internet, we developed a method to map Autonomous Systems (AS) to organizations that operate them. Our AS-ranking interactive page provides an organization-based ranking of Internet providers based on these inferred mappings. Users can download the Inferred AS to Organization Mapping Dataset.
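The first-principles check in the third-party address study above starts from an address's /30 or /31 subnet mate. A minimal sketch of that arithmetic follows; the function name `subnet_mates` is hypothetical, and the subsequent step (testing whether a mate is an alias of the previous traceroute hop) requires active alias resolution and is not shown.

```python
import ipaddress
from typing import List, Tuple


def subnet_mates(addr: str) -> List[Tuple[int, str]]:
    """Return candidate point-to-point subnet mates as (prefix_len, address).

    In a /31, the two addresses differ only in the lowest bit. In a /30,
    only the two middle addresses (host parts 1 and 2) are usable, so a
    /30 mate exists only when the low two bits of the address are 01 or 10.
    """
    value = int(ipaddress.IPv4Address(addr))
    mates = [(31, str(ipaddress.IPv4Address(value ^ 0b01)))]
    if value & 0b11 in (0b01, 0b10):
        # Flipping both low bits maps host part 1 to 2 and 2 to 1.
        mates.append((30, str(ipaddress.IPv4Address(value ^ 0b11))))
    return mates


if __name__ == "__main__":
    for hop in ("192.0.2.1", "192.0.2.2", "192.0.2.4"):
        print(hop, subnet_mates(hop))
```

Addresses whose low two bits are 00 or 11 would be the network or broadcast address of a /30, which is why only a /31 candidate is returned for them.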

Outreach

- We organized and hosted the 6th Workshop on Active Internet Measurement (AIMS-6). The workshop report is available.

- We hosted a Mini-Workshop on Topology, BGP and Traceroute Data with collaborators Rob Beverly (Naval Postgraduate School) and Ethan Katz-Bassett (Univ. Southern California) at CAIDA 22-23 May 2014.

- In 2014, we hosted visiting scholar Chiara Orsini, a postdoc from University of Pisa, Italy.

Publications

- Revisiting BGP Churn Growth, ACM SIGCOMM Computer Communication Review (CCR), Jan 2014.

- A Second Look at Detecting Third-Party Addresses in Traceroute Traces with the IP Timestamp Option, Passive and Active Network Measurement Workshop (PAM), Mar 2014.

- Spurious Routes in Public BGP Data, ACM SIGCOMM Computer Communication Review (CCR), Jul 2014.

- DRoP: DNS-based Router Positioning, ACM SIGCOMM Computer Communication Review (CCR), Jul 2014.

- Measurement and Analysis of Internet Interconnection and Congestion, Telecommunications Policy Research Conference (TPRC), Sep 2014.

- Inferring Complex AS Relationships, Internet Measurement Conference (IMC), Nov 2014.

- Challenges in Inferring Internet Interdomain Congestion, Internet Measurement Conference (IMC), Nov 2014.

Internet Census

Goals

One challenge in understanding the evolution of Internet infrastructure is the lack of systematic mechanisms for monitoring the extent to which allocated IP addresses are actually used. IPv4 address utilization has been monitored via actively scanning the entire IPv4 address space. However, several networks do not respond to such probing packets and therefore we need alternative approaches to estimate their address utilization. Our goal is to improve the current methodologies for inferring IPv4 (and in future IPv6) address space utilization.

Research Activities

- In January 2014 we published Estimating Internet address space usage through passive measurements (ACM SIGCOMM Computer Communication Review), which reports our evaluation of the potential to leverage passive network traffic measurements in addition to or instead of active probing. We investigated two challenges in using passive traffic for address utilization inference: the limited visibility of a single observation point; and the presence of spoofed IP addresses in packets that can distort results by implying faked addresses are active. We proposed a methodology for removing such spoofed traffic on both darknets and live networks, which yields results comparable to inferences made from active probing. Our preliminary analysis revealed novel insight into the usage of the IPv4 address space that would expand with additional vantage points.

- We extended and applied the above methodology to additional vantage points and published the results in Lost in Space: Improving Inference of IPv4 Address Space Utilization, Center for Applied Internet Data Analysis (CAIDA), Oct 2014.

- In collaboration with NPS, we undertook a measurement study of network tarpits, their prevalence, and how they impact Internet measurement studies, and published the results: Uncovering Network Tarpits with Degreaser, Annual Computer Security Applications Conference (ACSAC), Dec 2014.

Publications

- Estimating Internet address space usage through passive measurements (ACM SIGCOMM Computer Communication Review)

- Lost in Space: Improving Inference of IPv4 Address Space Utilization, Center for Applied Internet Data Analysis (CAIDA), Oct 2014.

- Uncovering Network Tarpits with Degreaser, Annual Computer Security Applications Conference (ACSAC), Dec 2014.

Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion

Goals

In collaboration with MIT Computer Science and Artificial Intelligence Laboratory (MIT/CSAIL), CAIDA began an NSF-funded project, Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion. This project focuses on two related transformations of the Internet ecosystem: the emergence of Internet exchanges (IXes) as anchor points in the mesh of interconnection, and the growing role of content providers and Content Delivery Networks (CDNs) as major sources of traffic flowing into the Internet. First, we will construct a new type of semantically rich Internet map to elucidate the role of IXes in facilitating robust and geographically diverse but complex interdomain connectivity. Second, we will develop techniques to measure congestion on interdomain links between ISPs. Finally, we will investigate questions related to infrastructure resiliency, scientific modeling, network economics, and public policy.

Infrastructure and Data Support

- We continued implementing and supporting the data processing architecture described in Figure 1. We begin by inferring conventional peering and transit relationships and customer cones using AS paths from public BGP data. Using customer cones and conventional relationships, we infer partial transit relationships and candidate hybrid relationships. We use traceroute and geolocation data to infer hybrid relationships, and classify the arrangement by policy and location.

- We began work on designing and developing a comprehensive backend system for collecting and processing TSLP data. The system is designed to perform adaptive probing of interdomain links discovered from a VP, maintain detailed historical state about topology and probing, provide efficient storage, analysis and retrieval of historical data, and enable visualization of the collected measurements. We have also developed a system to efficiently generate and process time series for thousands of interdomain links, apply a level-shift detection algorithm to infer episodes of congestion, and perform reactive measurements, e.g., loss rate or throughput measurement tests based on the results of time-series analysis.
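To make the idea of inferring congestion episodes from an RTT time series concrete, the following is a minimal sketch. It is not the level-shift detection algorithm used in the system described above; it substitutes a much simpler stand-in (a centered median filter compared against a minimum-RTT baseline). The function name `congestion_episodes`, the window size, and the 10 ms threshold are all illustrative assumptions.

```python
from statistics import median
from typing import List, Sequence, Tuple


def congestion_episodes(
    rtts_ms: Sequence[float], window: int = 3, threshold_ms: float = 10.0
) -> List[Tuple[int, int]]:
    """Return (start, end) index pairs of sustained RTT elevation.

    `end` is exclusive. A sample counts as elevated when the median of the
    window centered on it exceeds the series minimum (assumed to approximate
    the uncongested RTT) by at least `threshold_ms`. The median filter
    suppresses isolated spikes, so only sustained shifts are reported.
    """
    if not rtts_ms:
        return []
    baseline = min(rtts_ms)
    half = window // 2
    episodes: List[Tuple[int, int]] = []
    start = None
    for i in range(len(rtts_ms)):
        lo, hi = max(0, i - half), min(len(rtts_ms), i + half + 1)
        elevated = median(rtts_ms[lo:hi]) - baseline >= threshold_ms
        if elevated and start is None:
            start = i
        elif not elevated and start is not None:
            episodes.append((start, i))
            start = None
    if start is not None:
        episodes.append((start, len(rtts_ms)))
    return episodes


if __name__ == "__main__":
    far_side = [20.0] * 6 + [50.0] * 6 + [20.0] * 6
    print(congestion_episodes(far_side))
```

In a near/far probing setup, one would run such a detector on the far-side series and treat an episode as evidence about the interdomain link only if the near-side series shows no corresponding elevation.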

Research Activities

- A rigorous method to map and accurately annotate border routers with their AS owner is required to understand how ASes interconnect at the router level, and is critical to our TSLP method of localizing interdomain congestion. We began working on new heuristics that build on CAIDA's proven experience in inferring AS relationships and router level topology to address the unsolved critical infrastructure mapping problem of accurate border router identification. We are developing open-source software and algorithms to infer peering interconnections at the router level, focused primarily on the connectivity found in the network of the vantage point (VP).

- The capability to identify peering interconnections with robust physical coordinates at the level of a building will improve a number of operations including network troubleshooting and location of attacks and Internet congestion points, as well as assessing the reliability and availability of network interconnections during outages or attacks. We are developing a new methodology, called constrained facility search, to infer the physical interconnection facility where an interconnection occurs among all possible candidates. The method relies on publicly available data about the presence of networks at different facilities and utilizes highly distributed public measurement infrastructure to perform measurement campaigns.

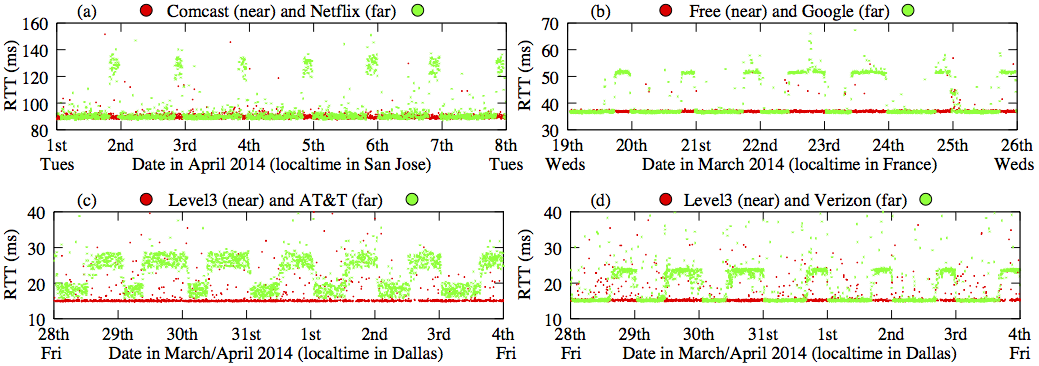

Figure 2. A significant and persistent difference between RTT measurements at the "near" and "far" nodes across the inter-domain link is evidence of congestion. (See Research Activity (3) and our IMC2014 paper.)

- We began work on analyzing the Network Diagnostic Test (NDT) data and Paris traceroutes collected by the Mlab platform for its potential to reveal congestion at interconnection points between ISPs. We delved into a congestion event in early 2014, where transit ISP Cogent prioritized certain classes of traffic in order to improve the performance at its congested interconnects. We analyzed the qualitative properties of TCP connections before and after this event to develop a metric that could indicate whether a TCP connection started up on a previously congested path.

Outreach

- Graduate student Natalie Larson worked on analyzing NDT data from Mlab for evidence of congestion on end-to-end paths.

- Vasileios Giotsas, a postdoc from University College London (UCL), worked on inferring complex AS relationships, and is leading the effort on locating interdomain links at the facility level.

Publications

- Inferring Complex AS Relationships, Internet Measurement Conference (IMC), Nov 2014.

- Challenges in Inferring Internet Interdomain Congestion, Internet Measurement Conference (IMC), Nov 2014.

Monitoring Internet Security and Stability

Detection and analysis of large-scale Internet infrastructure outages (IODA)

Goals

Our goal is to create technology that can serve as the basis for automated early-warning detection systems for large-scale Internet outages and to characterize such events (timing, infrastructure and protocols affected, ASes and geographic areas involved, etc.).

Infrastructure and Data Support

We worked on refining and operationalizing methods of analysis and aggregation of Internet measurement data from multiple available sources that shed light on Internet connectivity disruptions, including global connectivity disruptions due to political or catastrophic causes. In particular, this year we focused on the design and development of BGPStream, a software framework for processing large amounts of historical and live BGP measurement data (targeted for release as open source in mid 2015). On top of BGPStream we built more software infrastructure capable of monitoring BGP reachability of countries and ASes. We also continued our investigation of darkspace traffic and of which insights can be extracted from this data source. Finally, we developed and refined visualization techniques and interactive web interfaces to monitor and investigate disruptive events such as Internet outages.

Research Activities

- Sudden increases of different types of darkspace traffic can serve as an indicator of new vulnerabilities, misconfigurations or large scale attacks. In Nightlights: Entropy-based Metrics for Classifying Darkspace Traffic Patterns (PAM2014), we leverage the fact that darkspace traffic typically originates from processes that use randomly chosen addresses or ports (e.g. scanning) or target a specific address or port (e.g. DDoS, worm spreading). These behaviors induce a concentration or dispersion in feature distributions of the resulting traffic aggregate and can be distinguished using entropy-based metrics. Its lightweight, unambiguous, and privacy-compatible character makes entropy a suitable metric that can facilitate early warning capabilities, operational information exchange among network operators, and comparison of analysis results among distributed IP darkspaces.

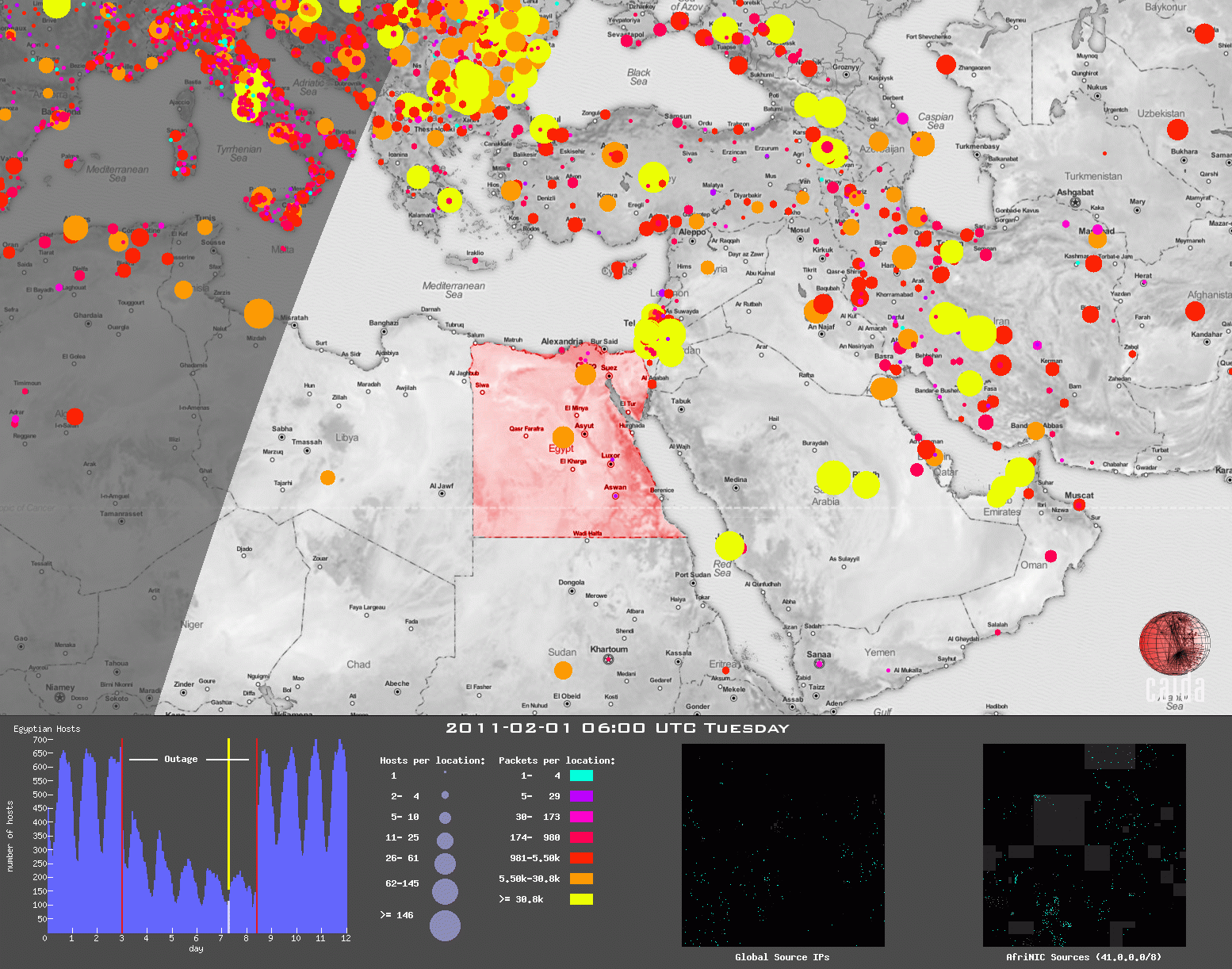

Figure 3. Snapshot of multiple coordinated views animation of the Egypt Internet blackout during the Arab Spring. (See Research Activity (2) and related Computing 2014 paper.)


- Critical to our work on outage and event detection on the Internet, we presented A Coordinated View of the Temporal Evolution of Large-scale Internet Events (Computing, Jan 2014), a method to visualize large-scale Internet events, such as a large region losing connectivity, or a stealth probe of the entire IPv4 address space. We applied a well-known technique in information visualization -- multiple coordinated views -- to Internet-specific data (Figure 3). We animated these coordinated views to reveal insight into the temporal evolution of events along different dimensions, including geographic spread, topological (address space) coverage, and traffic impact.

- We completed a journal version of our 2013 paper on Analysis of Country-wide Internet Outages Caused by Censorship (IEEE/ACM Transactions on Networking Dec. 2014). Our analysis relied on multiple sources of large-scale data already available to academic researchers: BGP interdomain routing control plane data; unsolicited data plane traffic to unassigned address space; active macroscopic traceroute measurements; RIR delegation files; and MaxMind's geolocation database.

- We developed a tutorial for conducting Analysis of Unidirectional IP Traffic to Darkspace with an Educational Data Kit (Technical Report, 2014, later published in IEEE Transactions on Education) that describes methods for analyzing unsolicited one-way Internet Protocol (IP) traffic destined to unassigned address space. We provide an educational dataset curated from data collected by the UCSD Network Telescope and detailed step-by-step analysis instructions including the outcome of each of the steps. Our exercises target engineering or computer science undergraduate and graduate students familiar with the concept of IP addresses and port numbers, and IP header fields.
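The entropy-based distinction drawn in the Nightlights item above (concentrated versus dispersed feature distributions) can be illustrated with a short sketch. It computes plain Shannon entropy over one packet feature, here destination ports. The function name `shannon_entropy` and the toy inputs are assumptions for illustration, and the paper's actual metrics and normalization may differ.

```python
import math
from collections import Counter
from typing import Hashable, Iterable


def shannon_entropy(values: Iterable[Hashable]) -> float:
    """Shannon entropy, in bits, of the empirical distribution of `values`.

    Low entropy means traffic is concentrated on few feature values (e.g.,
    many sources hitting one port); high entropy means it is dispersed
    (e.g., a scanner choosing targets at random).
    """
    counts = Counter(values)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(
        (c / total) * math.log2(total / c) for c in counts.values()
    )


if __name__ == "__main__":
    targeted = [23] * 8               # every packet aimed at one port
    dispersed = [1024, 2048, 4096, 8192]  # every packet to a different port
    print(shannon_entropy(targeted), shannon_entropy(dispersed))
```

Because the metric depends only on the shape of the distribution and not on the raw addresses or ports, operators could exchange entropy values without disclosing packet-level data.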

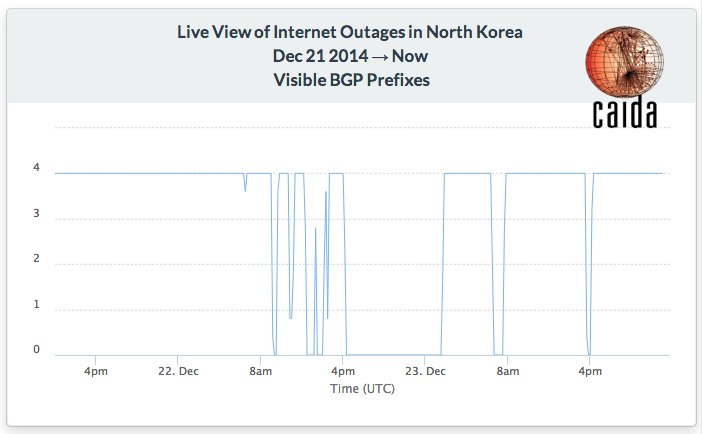

Figure 4. In late December 2014, North Korea experienced unstable Internet connectivity. To preview our outage analysis system, we published a 30-minute delayed view of global visibility of the 4 IPv4 prefixes announced by North Korea's national ISP. (See Research Activity (3).)

Outreach

- We organized and hosted the Internet Measurement And Political Science Workshop: Network Outages (IMAPS).

- We posted a blog essay about the disconnection of Time Warner Cable in August 2014: Under the Telescope: Time Warner Cable Internet Outage.

- We posted a blog essay about the multiple disconnections of North Korea around Christmas 2014: North Korean Internet outages observed. (Figure 4.)

- Karyn Benson, a UCSD graduate student, continued analyzing telescope data as part of her thesis work.

- We hosted visiting scholar Alessandro Puccetti, a graduate student from University of Pisa, Italy. Alessandro worked on techniques to identify spoofed packets in darkspace traffic.

Publications

- Nightlights: Entropy-based Metrics for Classifying Darkspace Traffic Patterns (PAM2014)

- A Coordinated View of the Temporal Evolution of Large-scale Internet Events (Computing, Jan 2014)

- Analysis of Unidirectional IP Traffic to Darkspace with an Educational Data Kit (IEEE Transactions on Education)

- Estimating Internet address space usage through passive measurements (ACM SIGCOMM Computer Communication Review)

- Lost in Space: Improving Inference of IPv4 Address Space Utilization, Center for Applied Internet Data Analysis (CAIDA), Oct 2014.

- Uncovering Network Tarpits with Degreaser, Annual Computer Security Applications Conference (ACSAC), Dec 2014.

- Analysis of Country-wide Internet Outages Caused by Censorship (IEEE/ACM Transactions on Networking Dec. 2014).

Detecting and Characterizing Internet Traffic Interception based on BGP Hijacking (HIJACKS)

Goals

In collaboration with Phillipa Gill at Stony Brook University, we began a new project to devise effective methodologies for the detection and characterization of large-scale traffic interception events based on BGP hijacking.

Infrastructure and Research Activities

Our proposed route-hijacking detection methods combine passive BGP measurements and active measurements (such as traceroutes), since the mechanism triggering the attack operates on the inter-domain routing control plane, but the actual impact is only verifiable in the data plane. In 2014 we began to develop and evaluate new methods, and to extend our measurement infrastructure to support them.

Outreach

- Graduate student Andreas Reuter (University of Berlin, Germany) developed software based on RTRlib to correlate BGP measurements with information from the RPKI and analyze the correlations in the context of potential BGP hijacks.

- We prepared "HIJACKS: Detecting and Characterizing Internet Traffic Interception based on BGP Hijacking" to present at the NSF Secure and Trustworthy Cyberspace (SaTC) PI meeting (January 2015).

Economics and Policy

Goals

Our economics research aims to understand the structure and dynamics of the Internet ecosystem from an economic perspective, capturing relevant interactions between network business relations, internetwork topology, routing policies, and resulting interdomain traffic flows. On the policy side, we strive to respond to requests from government agencies and policymaking bodies for comments and positions that empirically inform industry tussles and telecommunication policies. We also provide expertise on ethical issues pertinent to information and communication technology research.

Research Activities

- In collaboration with Texas A&M University, we published Volume-based Transit Pricing: Is 95 The Right Percentile?, our evaluation of the industry standard 95th percentile pricing mechanism from the perspective of transit providers, using a decade of traffic statistics from SWITCH (a large research/academic network), and more recent traffic statistics from three Internet Exchange Points. In our data set, heavy-inbound and heavy-hitter networks achieved a lower 95th-to-average ratio than heavy-outbound and moderate-hitter networks, perhaps due to their ability to better manage their traffic profile. The 95th percentile traffic volume also does not necessarily reflect the cost burden to the provider. We developed an alternative metric -- the provision ratio for a customer -- which better captures the costs a given customer imposes on a network. We used the provision ratio as the basis for a new transit billing optimization framework that is fair, flexible, and computationally inexpensive. The proposed mechanism has fairness properties similar to the optimal (in terms of fairness) Shapley value allocation, with a much smaller computational complexity.

- Peering agreements between Autonomous Systems affect not only the flow of interdomain traffic but also the economics of the entire Internet ecosystem. The conventional wisdom is that transit providers are selective in choosing their settlement-free peers because they prefer to offer revenue-generating transit service to others. Surprisingly, however, a large percentage of transit providers use an Open peering strategy. In Open Peering by Internet Transit Providers: Peer Preference or Peer Pressure? we investigated the causes of this large-scale adoption of Open peering by transit providers, and the impact of this peering trend on the economic performance of transit providers. We approached these questions through game-theoretic modeling and agent-based simulations, capturing the dynamics of peering strategy adoption, inter-network formation and interdomain traffic flow. We explained why transit providers gravitate towards Open peering even though that move may be detrimental to their economic fitness. Finally, we examined the impact of an Open peering variant that requires some coordination among providers.

- In Using PeeringDB to Understand the Peering Ecosystem we mined one of the few sources of public data available about the interdomain peering ecosystem: PeeringDB, an online database where participating networks contribute information about their peering policies, traffic volumes and presence at various geographic locations. Using BGP data to cross-validate three years of PeeringDB snapshots, we found that PeeringDB membership is reasonably representative of the transit, content, and access providers in terms of business types and geography of participants, and PeeringDB data is generally up-to-date. We found strong correlations among different measures of network size - BGP-advertised address space, PeeringDB-reported traffic volume and presence at peering facilities, and between these size measures and advertised peering policies.

- We began developing an approach to regulating the co-evolving ICT ecosystem, described in Anchoring policy development around stable points (Technical Report, Aug 2014). We described the disparity in the pace of evolution in the information and communications technology (ICT) landscape vs. policy and regulatory innovation, reviewing the natural rates of change in different parts of the ecosystem, and why this disparity has impeded attempts to impose effective regulation on the telecommunications industry. We also offered an explanation for why a recent movement to reduce this disparity by increasing the pace of regulation - adaptive regulation - faces five obstacles that may hinder its feasibility in the ICT ecosystem. As a means to achieve more sustainable regulatory frameworks for ICT industries, we introduced an approach based on finding stable points in the system architecture. We explored the origin and role of these stable points in a rapidly evolving system, and how they can provide a means to support development of policies, including adaptive regulation approaches, that are more likely to survive the rapid pace of evolution in technology.

- We presented a companion paper to our IMC2014 paper on "Challenges in Inferring Internet Interdomain Congestion", aimed at policymakers trying to understand the implications of our measurement method and results: Measurement and Analysis of Internet Interconnection and Congestion, Telecommunications Policy Research Conference (TPRC), Sep 2014. Questions about congestion are an important part of the current debates about reasonable network management. Our results suggest that peering connections are properly provisioned in the majority of cases, and the locations of major congestion are associated with specific sources of high-volume flows, specifically from the Content Delivery Networks (CDNs) and dominant content providers such as Netflix and Google. We see variation in the congestion patterns among these different sources that suggests differences in business relationships among them. We argue that a major goal for the industry is to work out methods of cooperation among different ISPs and higher-level services (who may be competitors in the market) so as to jointly achieve cost-effective delivery of data with maximum quality of service and quality of experience. We discuss some of the problems that must be addressed in this coordination exercise, as well as some of the barriers.

- We published a journal version of our Platform Models for Sustainable Internet Regulation (Journal of Information Policy Sept 2014), which proposed a new model that attempts to capture two durable and persistent features of today's telecommunications ecosystem: the use of layered platforms to implement desired functionality; and interconnection between actors at different platform layers. Using modern theories of multi-sided platforms (MSPs) to focus on key technical and business aspects of today's industry, our MSP-aware layered model explored several recent and impending innovations that have been naively conflated with the global Internet, and illuminated their differences. We illustrated the potential of our model as a baseline for future research by considering how it can help scope consistent policy discourse of three open questions: specialized services, minimum quality regulations (the "dirt road" problem), and structural separation.

- We published a public comment in response to the Federal Communications Commission (FCC) call for comments In the Matter of Protecting and Promoting the Open Internet: Approaches to transparency aimed at minimizing harm and maximizing investment. Embedded in a challenging legal and historical context, the FCC must act in the short term to address concerns about harmful discriminatory behavior. But its actions should be consistent with an effective, long-term approach that might ultimately reflect a change in legal framing and authority. In this comment we did not express a preference among short-term options, e.g., section 706 vs. Title II. Instead we suggested steps that would support any short-term option chosen by the FCC, but also inform debate about longer-term policy options. Our suggestions were informed by recent research on Internet connectivity structure and performance, from technical as well as business perspectives, and our motivation was enabling fact-based policy.
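The 95th percentile pricing mechanism evaluated in the first item above can be illustrated with a minimal sketch. It assumes the commonly described form of the rule (sample the traffic rate at fixed intervals, discard the top 5% of samples, bill on the highest remaining sample) and uses a nearest-rank percentile; the sample values and function names are hypothetical, and the sketch does not implement the provision ratio or the billing framework from the paper.

```python
def percentile_95(samples):
    """Nearest-rank 95th percentile: sort the samples and discard the top 5%."""
    if not samples:
        raise ValueError("need at least one traffic sample")
    ordered = sorted(samples)
    # Integer ceiling of 0.95 * n avoids floating-point rounding surprises.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def ratio_95th_to_average(samples):
    """Ratio of the billed (95th-percentile) rate to the mean rate."""
    return percentile_95(samples) / (sum(samples) / len(samples))


# Hypothetical 5-minute rate samples in Mbps: steady traffic plus one short burst.
one_burst = [100.0] * 19 + [1000.0]
# Two bursts instead of one: the bursts now exceed the 5% allowance.
two_bursts = [100.0] * 18 + [1000.0] * 2

for name, samples in [("one burst", one_burst), ("two bursts", two_bursts)]:
    print(name, percentile_95(samples), round(ratio_95th_to_average(samples), 3))
```

In the first series the burst falls entirely within the discarded 5%, so the billed rate stays at the steady level; in the second the billed rate jumps to the burst level. This is one way to see why the 95th percentile need not track the cost a customer imposes on the provider.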

Outreach

- We organized and hosted the 5th Workshop on Internet Economics (WIE 2014). The workshop report is available.

Publications

- Platform Models for Sustainable Internet Regulation, Journal of Information Policy, Sep 2014.

- Approaches to transparency aimed at minimizing harm and maximizing investment, Federal Communications Commission (FCC) Commission Documents, Sep 2014.

- Measurement and Analysis of Internet Interconnection and Congestion, in Telecommunications Policy Research Conference (TPRC), Sep 2014.

- Volume-based Transit Pricing: Is 95 The Right Percentile?, in Passive and Active Measurement Conference (PAM), March 2014.

- Fair, Flexible, and Feasible ISP Billing, in ACM SIGMETRICS Performance Evaluation Review, Dec 2014.

- Open Peering by Internet Transit Providers: Peer Preference or Peer Pressure?, in IEEE Infocom, Apr 2014.

- Using PeeringDB to Understand the Peering Ecosystem, in ACM SIGCOMM Computer Communications Review (CCR), Apr 2014.

Future Internet Research

Our research on the future of the Internet is currently focused on two primary areas: 1) participating in the Future Internet architecture project Named Data Networking Next Phase (NDN-NP); and 2) studying the deployment evolution of the Internet Protocol version 6 (IPv6).

Named Data Networking: Next Phase

Goals

The Named Data Networking Next Phase project (NDN-NP) is the next stage of a collaborative project (one of the three Future Internet Architecture Awards) for the research, development, and testbed deployment of a new Internet architecture that replaces IP with a network layer that routes directly on content names. The NDN project investigates Van Jacobson's proposed evolution from today's host-centric network architecture (IP) to a data-centric network architecture (NDN). This conceptually simple shift has far-reaching implications for how we design, develop, deploy, and use networks and applications. By naming data instead of locations, this architecture aims to transition the Internet from its current reliance on "where" data is located (addresses and hosts) to "what" the data is (the content that users and applications care about). Our activities for this project included testbed participation, research on fundamentally new NDN-compatible routing protocols, and overall management support. For more information see http://www.named-data.net/

Activities

- We maintained an Ubuntu server on SDSC's machine room floor that is the local UCSD hub on the national NDN testbed using the NDN Platform software. We also host a desktop computer configured with NDN-based video and audio software (provided by UCLA Center for Research in Engineering, Media, and Performance) to support team experiments and testing of instrumented environments, participatory sensing, and media distribution using NDN.

- We tested and evaluated code on the NDN testbed, and provided feedback on problems with functionality and documentation critical to expanding the user base. We worked with developers at UCLA and system administrators at Washington University in St. Louis to test a four-way teleconference over the NDN Testbed using NdnCon. The group followed up with feedback and suggestions to the developers.

- We continued general management tasks for the whole NDN project, including coordination and scheduling among the 12 institutions for research activities, weekly management and other strategy calls with PIs, and NDN Consortium teleconferences. We recorded meeting minutes and archived them on the project wiki hosted on CAIDA's network at the San Diego Supercomputer Center (SDSC).

- To support NDN routing research, we developed and released a hyperbolic graph generator, including documentation and benchmarks. We also added scenarios for NDN network simulation, along with documentation on how to install the scenarios and how to use and extend the accompanying tools, e.g., what operations are required to build a new simulation that requires a network embedded into a hyperbolic space, or a simulation that adopts the greedy forwarding strategy. We created a wiki that describes these operations, greedy forwarding in ndnSIM, and why we observe different success ratio probability functions when we compare the ndnSIM forwarding strategy and the greedy routing tool (which belongs to the standalone code package).

Outreach

- We co-organized and co-chaired the First Named Data Networking Community Meeting (NDNcomm 2014) hosted at UCLA in September 2014. The meeting provided a platform for the attendees from 39 institutions across seven countries to exchange their recent research and development results, to debate existing and proposed functionality in security support, and to provide feedback into the NDN architecture design evolution. We published a summary report for the meeting in CCR.

Publications

- A World on NDN: Affordances & Implications of the Named Data Networking Future Internet Architecture. Tech. Rep. 18, Named Data Networking (NDN), Apr 2014. In this paper we highlighted four departures from today's TCP/IP architecture, which underscore the social impacts of NDN: the architecture's emphases on enabling semantic classification, provenance, publication, and decentralized communication. We described how these changes from TCP/IP will expand affordances for free speech, and produce positive outcomes for security, privacy and anonymity while raising new challenges regarding data retention and forgetting. We described how these changes might alter current corporate and law enforcement content regulation mechanisms by changing the way data is identified, handled, and routed across the Web. We examined how even as NDN empowers edges with more decentralized communication options, by providing more context per packet than IP, it raises new challenges in ensuring neutrality across the public network. Finally, we introduced openings where telecommunications policy can evolve alongside NDN to ensure an open, fair Internet.

- Named Data Networking. ACM SIGCOMM Computer Communication Review (CCR), Jul 2014. (Invited paper). This paper provides a high-level overview of the architecture, describing the motivation and vision of this new architecture, and its basic components and operations. We also provide a snapshot of its current design, development status, and research challenges.

- Named Data Networking (NDN) Annual Project Report 2013-2014. Technical Report, Nov 2014.

Exploring the evolution of IPv6: topology, performance, and traffic

Goals

CAIDA aims to measure the evolution of IPv6 in three areas: topology, traffic, and performance. The major goal of the IPv6 project is to improve the fidelity, scope, and usability of IPv6 measurement technology: designing measurement primitives for adaptive and intelligent probing, building tools to measure the characteristics of IPv6 adoption at the edge, and comparing characteristics of IPv4 and IPv6 connectivity. The resulting data sets inform our task of correlating these observations with other technical and socioeconomic data: address allocation, available geographic and traffic data, ISP organizational structure (commercial, government, educational), and political/regulatory factors influencing IPv6 deployment. Finally, rigorous empirical data that we collect will help to improve the state of quantitative modeling of the IPv6 transition.

Infrastructure and Data Support

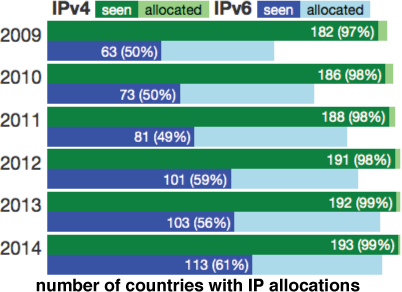

Figure 5. Number of countries with IPv4 and IPv6 allocations compared to number of countries observed sending traffic to the DNS root servers during DITL data collection intervals. (See Research Activities (1) and the interactive web page comparing IPv4 vs IPv6 addressing and traffic statistics.)

In collaboration with Andra Lutu from IMDEA Networks Institute and University Carlos III of Madrid, we are developing a technique to track Carrier-Grade NAT (CGN) deployment in the wild, as another metric of the extent to which carriers are choosing not to deploy IPv6. We are also working with the FCC, which wants to deploy these measurements on its Measuring Broadband America infrastructure.

Research Activities

- We correlated address allocation patterns with observable traffic and geopolitical factors. We examined per-country allocation and deployment rates through the lens of the annual "Day in the Life of the Internet" (DITL) snapshots collected at the DNS roots by the DNS Operations, Analysis, and Research Center (DNS-OARC) from 2009-2014. We published an interactive data analysis at https://www.caida.org/archive/policy/dns-country/. (See Figure 5 for sample graph.)

- We designed, implemented, and validated the first Internet-scale AS routing relationship inference technique for IPv6. We modified CAIDA's IPv4 relationship inference algorithm to accurately infer IPv6 relationships using publicly available BGP data. We validated 24.9% of our 41,589 customer-to-provider (c2p) and peer-to-peer (p2p) inferences for July 2014 to have a 99.3% and 94.5% positive predictive value (PPV), respectively. Using these inferred relationships, we analyzed the BGP-observed IPv4 and IPv6 AS topologies, and found that ASes are converging toward the same relationship types in IPv4 and IPv6; however, disparities remain due to differences in the transit-free clique and the predominant influence of Hurricane Electric in the IPv6 peering ecosystem. We detail our methods and findings in IPv6 AS Relationships, Clique, and Congruence.

- To investigate (and clarify) claims that the IPv6 routing system is less stable than the IPv4 routing system, we created a specialized prober to support fine-grained correlation of IPv6 router reboots and BGP events, and used it together with our uptime inference algorithm to characterize the availability of IPv6 interfaces and routers. We found a relatively uniform overall rate of interface reboots, indicating a constant background rate of IPv6 interface reboots without the presence of individual events affecting many interfaces. In contrast, the set of core routers exhibited more variation, suggesting correlated reboot events among routers within a provider or organization. We published our results in Measuring and Characterizing IPv6 Router Availability.

- We continued studying the activities of the IPv4 address space transfer "grey" markets as observable in publicly available BGP data along two directions: analysis of address utilization after reported transfers, and inferring transfers in the wild. Using snapshots of the ISI Internet Census (obtained via the PREDICT project) we analyzed the evolution of the utilization of transferred prefixes. We observed that the utilization of transferred prefixes shows a decreasing trend before the transfer, after which it shows an increasing trend, implying that organizations are purchasing addresses to satisfy real need, rather than for hoarding. We have developed new ideas for extending our techniques to detect "grey market" transfers in the wild, including taking scans of reverse DNS mappings to look for candidate transfers, and using traceroute data from the Ark infrastructure to detect changes in data-plane connectivity that may suggest transfer activity.

- With the depletion of IPv4 addresses and gradual deployment of IPv6, many hosts in the Internet today exhibit an unprecedented identity duality (i.e., have both IPv4 and IPv6 addresses) that is likely to remain until IPv4 is phased out completely. We explored whether this identity duality can be leveraged to improve performance, by experimenting with a new use case of MPTCP that considers a dual-stacked interface as two separate interfaces. We measured a marked increase in MPTCP goodput for IPv4 and IPv6 paths that do not share a bottleneck; in these cases the MPTCP goodput can be the aggregate of IPv4 and IPv6 goodputs. When the paths are congruent or share a bottleneck link, MPTCP is just as good as TCP, in accordance with MPTCP's main design goals. Our results show that the current identity duality and path incongruence can be leveraged to improve end-to-end performance for large bulk transfers. We published our results in Leveraging the IPv4/IPv6 Identity Duality by using Multi-Path Transport.

Publications

- IPv6 AS Relationships, Clique, and Congruence, Passive and Active Network Measurement Workshop (PAM), Mar 2015.

- Measuring and Characterizing IPv6 Router Availability, Passive and Active Network Measurement Workshop (PAM), Mar 2015.

- Leveraging the IPv4/IPv6 Identity Duality by using Multi-Path Transport, IEEE Global Internet Symposium, Apr 2015.

Measurement Infrastructure and Data Sharing Projects

Archipelago (Ark)

Archipelago (Ark) is CAIDA's active measurement infrastructure, which enables large-scale Internet measurements, while reducing the effort needed to develop, deploy and conduct sophisticated experiments.

Activities

- In February 2014, we made the Ark IPv4 topology data older than two years; all Ark IPv6 topology, DNS names, and AS links data; and all raw skitter traces publicly downloadable. Only access to Ark IPv4 topology data from the most recent two years remains restricted.

- We are gradually transitioning from using 1U hardware as Ark monitors to credit-card-sized Raspberry Pi devices that cost approximately $35 each. In 2014, we deployed/replaced 27 monitors (all of them Raspberry Pis) and increased the number of vantage points to 106, including 39 IPv6-capable and 58 Pi-based Ark nodes, deployed in 40 countries.

- We continued improving our measurement techniques and analysis methodologies for alias resolution inferences. We released an update to arkutil, a RubyGem containing various utility classes used by the Ark measurement infrastructure and the MIDAR alias resolution system.

- We continued support for the spoofer experiment (collaboration with Robert Beverly, NPS).

- We maintain a mailing list of researchers using Ark data and regularly email them with updates and important news about the data.

UCSD Network Telescope

We develop and maintain a passive data collection system known as the UCSD Network Telescope to study security related events on the Internet by monitoring and analyzing unsolicited traffic arriving at a globally routed underutilized /8 network. We are seeking to maximize the research utility of these data by enabling near-real-time data access to vetted researchers and solving the associated new challenges in flexible storage, curation, and privacy-protected sharing of large volumes of data.

Activities

- We released an update to our Corsaro software for capture, processing, management, analysis, visualization and reporting on data collected with the UCSD Network Telescope.

- We continued sharing the Near-Real-Time Network Telescope Dataset that includes: (i) the most recent 60 days of raw telescope traffic (in pcap format); and (ii) aggregated flow data for all telescope traffic since February 2008 (in Corsaro flow tuple format).

- We released an educational data kit from the telescope data to use as a teaching resource for computer science undergraduate and graduate students. This UCSD Network Telescope Educational Dataset: Analysis of Unidirectional IP Traffic to Darkspace includes all Telescope data collected in April 2012 and sheds light on the effects of Microsoft Patch Tuesday activities.

Passive Monitors

Goals

CAIDA continues to support efforts to enable Internet traffic measurements and privacy-respecting sharing. Currently, in collaboration with operators of the hosting sites, we help support data collection efforts on backbone links in Internet exchange points in San Jose, CA and Chicago, IL. We regularly capture packet header (no payload) traces from backbone and peering point links and make anonymized forms of these data available to the research community, albeit with significant policy restrictions on their use.

Activities

- CAIDA routinely supports monthly collection of anonymized traces on several backbone OC192 links: equinix-chicago and equinix-sanjose. These monthly traces of one hour each are provided to interested researchers on request in pcap files containing payload-stripped, anonymized traffic organized in the annual datasets listed below.

- At the end of September 2014 the equinix-sanjose links from San Jose to Los Angeles were upgraded from 10Gig to 100Gig. Since our capture hardware is not capable of tapping these new links, the San Jose monitor is currently not capturing data. The last monthly trace was taken on September 18, 13:00-14:00 UTC; the report generator stopped at approximately 20:00 UTC on September 18. We are currently investigating our options for reviving this link.

Ongoing data releases

CAIDA continues to make available the following datasets:

- CAIDA Anonymized 2008 Internet Traces Dataset

- CAIDA Anonymized 2009 Internet Traces Dataset

- CAIDA Anonymized 2010 Internet Traces Dataset

- CAIDA Anonymized 2011 Internet Traces Dataset

- CAIDA Anonymized 2012 Internet Traces Dataset

- CAIDA Anonymized 2013 Internet Traces Dataset

- CAIDA Anonymized Internet Traces on IPv6 Day and IPv6 Launch Day Dataset

- CAIDA Anonymized Internet Traces 2014 Dataset

Privacy-Sensitive Data Sharing / PREDICT

The Department of Homeland Security project Protected Repository for the Defense of Infrastructure Against Cyber Threats (PREDICT) provides vetted researchers with current network security-related data in a disclosure-controlled manner that respects the security, privacy, legal, and economic concerns of Internet users and network operators. CAIDA supports PREDICT goals as Data Provider and Data Host and also plays an advisory role in developing technical, legal, and practical aspects of PREDICT policies and procedures.

Activities

- We consolidated various Acceptable Use Agreements (AUAs) into a single CAIDA Master Acceptable Use Agreement, which now applies to the majority of CAIDA datasets; the exception is datasets covered by the Acceptable Use Agreement for Publicly Accessible Datasets.

- We collected, hosted, and provided the following current Internet Topology data to PREDICT: (i) Internet Topology measured from Ark Platform (IPv4 Routed /24 Topology, IPv4 Routed /24 DNS Names, IPv6 Topology); and (ii) Internet Topology Data Kits (ITDKs).

- We collected, hosted, and provided UCSD Near-Real-Time Network Telescope Data to qualified researchers through PREDICT.

- We published an educational data kit and related tutorial derived from the Network Telescope data.

- To increase the popularity and usage of our data sets in the research community, we made the Ark IPv4 topology data older than two years; all Ark IPv6 topology, DNS names, and AS links data; and all raw skitter traces publicly downloadable. We restructured our data directories to implement this new policy and announced the news in a CAIDA blog entry CAIDA Deliver More Data to the Public. Only access to Ark IPv4 topology data from the most recent two years remains restricted.

- We continued to host and share the legacy Internet topology skitter data (now entirely publicly available) as well as various archived Telescope data sets.

- We captured, curated, and released CAIDA Anonymized Internet Traces 2014 dataset. It contains anonymized passive traffic traces from two high-speed monitors on commercial Internet backbone links and is available to academic and government researchers by request.

- We brought a new data server (Irori) online to add approximately 100 TiB of storage capacity in support of data set curation and distribution. This brings our total storage capacity to approximately 226 TiB.

The PREDICT project also supports our data analysis, sharing, and research activities.

Data

Data Collection Statistics

In 2014, CAIDA captured the following raw data. The first number in parentheses shows the actual disk space used to store the compressed data; the second number is its uncompressed size.

- traceroutes probing IPv4 address space collected by our Ark infrastructure (922.3 GiB/2.7 TiB), and traffic from reverse DNS lookups for discovered IPv4 addresses (45.0 GiB/168.9 GiB)

- traceroutes probing IPv6 address space collected by a subset of IPv6-enabled Ark monitors (17.4 GiB/89.3 GiB)

- passive traffic traces from the equinix-chicago and equinix-sanjose monitors connected to Tier-1 ISP backbone links at the Equinix facilities in Chicago, IL, and San Jose, CA (633.4 GiB/1.5 TiB)

- passive darkspace traffic traces collected by our UCSD Network Telescope (84.2 TiB/204.3 TiB)

- IPv4 Routed /24 Topology

- IPv4 Routed /24 DNS Names (includes DNS traffic)

- IPv4 Routed /24 AS Links

- Macroscopic Internet Topology Data Kits (ITDKs): ITDK-2014-04 and ITDK-2014-12

- IPv6 Topology

- IPv6 DNS Names

- IPv6 AS Links

- Anonymized Internet Traces 2014

To increase the popularity and usage of our data sets in the research community, in February 2014 we made the Ark IPv4 topology data older than two years; all Ark IPv6 topology, DNS names, and AS links data; and all raw skitter traces publicly downloadable. We restructured our data directories to implement this new policy and announced the news in a CAIDA blog entry CAIDA Deliver More Data to the Public. Only access to Ark IPv4 topology data from the most recent two years remains restricted.

We provided several academic researchers with access to our Near-Real-Time Network Telescope Dataset.

We maintained the AS Rank web site and the related AS Relationships Dataset that use BGP data and empirical analysis algorithms developed by CAIDA researchers to infer business relationships between ASes.

We also provide access to several legacy data sets. We list all available data on our CAIDA Data page.

Data Distribution Statistics

- Publicly Available Datasets require that users agree to CAIDA's Acceptable Use Policy for public data, but are otherwise freely available. The table lists the number of unique visitors and the total amount of data downloaded in 2014.

![[Figure: request counts statistics for public data]](images/2014_annual_report_public_counts.png)

![[Figure: download statistics for public data]](images/2014_annual_report_public_downloads.png)

* We count the volume of data downloaded per unique user per unique file, so if a user downloads a file multiple times, we only count that file once for that user. This methodology significantly underestimates the total volume of data served through our data servers.

** Our AS Taxonomy dataset is mirrored at the Georgia Tech main AS Taxonomy site, so these downloads reflect only a fraction of this data set's popularity.

- Restricted Access Data Sets require that users: be academic or government researchers, or sponsor CAIDA; request an account and provide a brief description of their intended use of the data; and agree to an Acceptable Use Policy.

![[Figure: request statistics for restricted data]](images/2014_annual_report_restricted_counts.png)

![[Figure: download statistics for restricted data]](images/2014_annual_report_restricted_downloads.png)

* We count the volume of data downloaded per unique user per unique file, so if a user downloads a file multiple times, we only count that file once for that user. This methodology significantly underestimates the total volume of data served through our data servers.
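The counting rule in the footnotes above (each file counted once per user, no matter how often that user downloads it) can be sketched as follows. The log format, user names, file names, and sizes are hypothetical illustrations, not CAIDA's actual accounting code or data.

```python
def deduplicated_volume(download_log):
    """Sum file sizes, counting each (user, file) pair only once."""
    seen = set()
    total = 0
    for user, filename, size_bytes in download_log:
        if (user, filename) not in seen:
            seen.add((user, filename))
            total += size_bytes
    return total


def raw_volume(download_log):
    """Sum the size of every download, including repeats."""
    return sum(size_bytes for _, _, size_bytes in download_log)


# Hypothetical log entries: (user, file, size in bytes).
log = [
    ("user1", "trace-a.pcap.gz", 100),
    ("user1", "trace-a.pcap.gz", 100),  # repeat download, ignored by the rule
    ("user2", "trace-a.pcap.gz", 100),  # different user, counted
    ("user1", "trace-b.pcap.gz", 50),
]

print(deduplicated_volume(log), raw_volume(log))
```

The gap between the two totals is the volume that the reported statistics leave out, which is why the reported figures are a lower bound on the data actually served.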


Publications using public and/or restricted CAIDA data (by non-CAIDA authors)

We currently know of a total of 55 publications by non-CAIDA authors that used these CAIDA data. Some of these papers used more than one dataset. A complete list of all papers can be found on our webpage for Non-CAIDA Publications using CAIDA Data.

![[Figure: number of publications by non-CAIDA authors using CAIDA data]](images/2014_annual_report_paper_counts.png)

Tools

In the course of our funded research and infrastructure projects, we regularly develop tools for Internet data collection, analysis and visualization, and make these tools available to the community. The following table displays all CAIDA developed and currently supported tools (note that we do not receive specific funding for tool support and maintenance) and the number of downloads of each version during 2014.

![[Figure: The number of times each tool was downloaded from the CAIDA web site in 2014.]](images/caida-tools-downloads-2014.png)

* Note: Chart::Graph is also available on CPAN.org. The number shown is direct downloads from caida.org only (statistics from CPAN not available).

| Tool | Description | Downloads |
|---|---|---|
| arkutil | RubyGem containing utility classes used by the Archipelago measurement infrastructure and the MIDAR alias-resolution system. | 207 |
| Autofocus | Internet traffic reports and time-series graphs. | 333 |
| Chart::Graph | A Perl module that provides a programmatic interface to several popular graphing packages. | 253 |
| CoralReef | Measures and analyzes passive Internet traffic monitor data. | 199 |
| Corsaro | Extensible software suite designed for large-scale analysis of passive trace data captured by darknets, but generic enough to be used with any type of passive trace data. | 516 |
| Cuttlefish | Produces animated graphs showing diurnal and geographical patterns. | 174 |
| dnsstat | DNS traffic measurement utility. | 271 |
| iatmon | Ruby+C+libtrace analysis module that separates one-way traffic into defined subsets. | 167 |
| iffinder | Discovers IP interfaces belonging to the same router. | 371 |
| libsea | Scalable graph file format and graph library. | 110 |
| kapar | Graph-based IP alias resolution. | 280 |
| MIDAR | Monotonic ID-Based Alias Resolution tool that identifies IPv4 addresses belonging to the same router (aliases) and scales up to millions of nodes. | 367 |
| Motu | Dealiases pairs of IPv4 addresses. | 91 |
| mper | Probing engine for conducting network measurements with ICMP, UDP, and TCP probes. | 127 |
| otter | Visualizes arbitrary network data. | 139 |
| plot-latlong | Plots points on geographic maps. | 181 |
| plotpaths | Displays forward traceroute path data. | 102 |
| rb-mperio | RubyGem for writing network measurement scripts in Ruby that use the mper probing engine. | 472 |
| RouterToAsAssignment | Assigns each router from a router-level graph to its Autonomous System (AS). | 445 |
| rv2atoms (including straightenRV) | A tool to analyze and process a Route Views table and compute BGP policy atoms. | 163 |
| scamper | A tool to actively probe the Internet to analyze topology and performance. | 1691 |
| sk_analysis_dump | A tool for analysis of traceroute-like topology data. | 154 |
| topostats | Computes various statistics on network topologies. | 199 |
| Walrus | Visualizes large graphs in three-dimensional space. | 1365 |

Tool Development Activities

- We created a package of tools that generate graphs embedded in the hyperbolic plane and compute the efficiency of greedy forwarding in these graphs. We released the package as the Hyperbolic Graph Generator, version 1.0.1, on October 7, 2014.

- We released CoralReef v3.9.3 on March 27, 2014, and v3.9.4 on October 14, 2014, which included build fixes for issues with some compilers.

- Corsaro v2.1.0 was released on June 11, 2014.

- We released iatmon v2.1.2 on September 29, 2014.

- We released an update for scamper on September 1, 2014.

- DBATS is a high-performance time-series database. It is in development and almost ready for public release.

- We worked on the development of BGPStream, a software framework for processing large amounts of distributed BGP measurement data. We recently started sharing beta versions and we will soon release the first public version.

CAIDA 2014 in Numbers

CAIDA researchers published 24 peer-reviewed publications and 4 technical reports. For details, please see CAIDA papers.

CAIDA researchers presented their results and findings at IMC (Vancouver, Canada), UbiComp (Seattle, WA), HCI International (Crete, Greece), Broadband Internet Technical Advisory Group (BITAG) (Denver, CO), PAM (Los Angeles, CA), and NANOG (Atlanta, GA), as well as at various other workshops, seminars, and Program and PI meetings. A complete list of presented materials is available on the CAIDA Presentations page.

CAIDA organized and hosted 6 workshops: AIMS 2014: Workshop on Active Internet Measurements, CREDS II: Cyber-security Research Ethics Dialog & Strategy Workshop, a Mini-Workshop on Topology, BGP and Traceroute Data, NDNcomm 2014: NDN Community Meeting (co-hosted with UCLA), IMAPS: Network Outages, and WIE 2014: 5th Workshop on Internet Economics.

In 2014, our web site www.caida.org attracted 328,110 unique visitors, with an average of 2.38 visits per visitor.

As of the end of December 2014, CAIDA employed 17 staff, 4 visiting scholars, 3 postdocs, 2 graduate students, and 10 undergraduate students.

We received $3.40M to support our research activities from the following sources:

![[Figure: Allocations by funding source]](images/allocation_byfunding_2014.png)

| Funding Source | Amount ($) | Percentage |
|---|---|---|
| NSF | $1,930,353 | 57% |
| DHS | $1,060,698 | 31% |
| Gift & Members | $407,430 | 12% |
| Total | $3,398,481 | 100% |

The charts below show CAIDA expenses by type of operating expense, by funding source, and by program area:

![[Figure: Operating Expenses]](images/operating_expenses_2014.png)

| Expense Type | Amount ($) | Percentage |
|---|---|---|
| Labor | $2,008,054 | 63% |
| Indirect Costs (IDC) | $952,240 | 30% |
| Professional Development | $11,306 | 0% |
| Supplies & Expenses | $86,887 | 3% |
| Workshop & Visitor Support | $25,682 | 1% |
| CAIDA Travel | $51,870 | 2% |
| Equipment | $50,074 | 2% |
| Total | $3,186,114 | 100% |

![[Figure: Expenses by funding source]](images/expenses_byfundingsource_2014.png)

| Funding Source | Amount ($) | Percentage |
|---|---|---|
| NSF | $1,064,358 | 33% |
| DHS | $1,672,058 | 52% |
| Gift & Members | $449,698 | 14% |
| Total | $3,186,114 | 100% |

![[Figure: Expenses by Program Area]](images/expenses_byprogram_2014.png)

| Program Area | Amount ($) | Percentage |
|---|---|---|
| Economic & Policy | $193,343 | 6% |
| Future Internet: IPv6 | $504,456 | 16% |
| Future Internet: NDN | $229,724 | 7% |
| Mapping & Congestion | $618,889 | 19% |
| Infrastructure | $929,713 | 29% |
| Security & Stability | $647,606 | 20% |
| Outreach | $38,823 | 1% |
| CAIDA Internal Operations | $23,560 | 1% |
| Total | $3,186,114 | 100% |

Funding Sources

CAIDA thanks our sponsors, members, and collaborators.

Expired in 2014

- NSF grant (CNS-0958547) Internet Laboratory for Empirical Network Science (iLENS), with REU supplement (ended February 2014)

- NSF grant (CNS-0964236) Discovering Hyperbolic Metric Spaces Hidden Beneath the Internet, with REU Supplement (ended March 2014)

- NSF grant (CNS-1059439) CRI-Telescope: A Real-time Lens into Dark Address Space of the Internet, with REU supplement (ended June 2014)

- NSF grant (CNS-1039646) Named Data Networking (ended August 2014)

Awarded in 2014

- NSF grant (CNS-1414177) Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion (began October 2014)

- NSF grant (CNS-1423659) HIJACKS: Detecting and Characterizing Internet Traffic Interception based on BGP Hijacking (began August 2014)

- NSF grant (CNS-1345286) Named Data Networking Next Phase (began May 2014)

Continuing in 2014

- DHS S&T Directorate contract (N66001-12-C-0130) Cartographic Capabilities for Critical Cyberinfrastructure

- DHS S&T Directorate Cooperative Agreement (DHS FA8750-12-2-0326) Supporting Research and Development of Security Technologies through Network and Security Data Collection

- NSF grant (CNS-1111449) Exploring the evolution of IPv6: topology, performance, and traffic

- NSF grant (CNS-1228994) Detection and analysis of large-scale Internet infrastructure outages, with REU supplement

- University Research sponsorship from Cable Labs, Cisco Systems, Inc., Comcast, Google, and NTT Corporation,