A Day In The Life of the Internet: A Summary of the January 9-10, 2007 Collection Event

Motivation

To begin building the community and infrastructure to document A Day In The Life (DITL) of the Internet, CAIDA performs coordinated data collections with the ultimate goal of capturing a data set of strategic interest to scientific researchers. On January 9-10, 2007, CAIDA and the DNS Operations, Analysis, and Research Center (OARC) coordinated 48-hour DITL collection event. This page provides a list of collection sites, a summary of the data and metadata we collected. We also discuss lessons we learned from this event and provide recommendations to others for future collection events, including instructions on proper documentation and indexing of data collections.

The Participants

-

DNS Root Participants

The following organizations conducted passive measurements of DNS root servers or related AS112 and Open Root servers. The DNS root server operators were the flagship participants -- contributing pcap files from several hundred anycast instances across four root servers. We are thankful to the following participants.

- c.root-servers.net, operated by Cogent.

- e.root-servers.net, operated by NASA.

- f.root-servers.net, operated by ISC.

- k.root-servers.net, operated by RIPE NCC.

- m.root-servers.net, operated by WIDE.

- as112.namex.it, operated by NaMeX.

- b.orsn-servers.net, operated by FunkFeuer Free Net.

- m.orsn-servers.net, operated by Home of the Brave GmbH.

Additional Participants

The following organizations conducted passive measurements and data collection of campus and transit networks.

The Data

In 2006, CAIDA coordinated synchronized measurements of the DNS root servers. In 2007, we increased the scope of the experiment to include a wider range of measurements from campus and transit links in addition to the DNS root server nodes.

- OARC DNS root traces January 9-10, 2007

The dataset contains 48 continuous hours of DNS packet traces with payload (the RIPE and b.orsn-servers.net sites did not achieve full 48 hour coverage), observed at most instances of C-root, F-root, K-root, and M-root on January 9th and 10th, 2007, UTC. The traces contain both inbound and outbound DNS traffic (only f-root does not have DNS replies). E-root participated in data collection, however, could not upload the data to OARC. See the link to the complete report on OARC data gathering in the conclusion section below for details. Also refer to Appendix A for further information about the format and quality of the OARC data files.

- WIDE-CAMPUS 10 Gigabit Ethernet Trace 2007-01-09

WIDE collected bidirectional packet traces stored in the pcap format with 96 bytes of payload from a 10 Gigabit Ethernet link that connects a university campus in Japan and the WIDE backbone.

- WIDE-TRANSIT 1 Gigabit Ethernet Trace 2007-01-09

WIDE collected bidirectional packet traces with 96 bytes of payload from a 1 Gigabit Ethernet link that connects WIDE and its upstream provider, AS 2914 Nippon Telegraph and Telephone (NTT) (formerly Verio).

- WIDE-TRANSIT Anonymized 1 Gigabit Ethernet Packet Header Trace 2007-01-09

WIDE collected bidirectional packet traces without payload from a 1 Gigabit Ethernet link that connects WIDE and its upstream provider. WIDE captured the first 96 bytes for each packet, performed prefix-preserving anonymization of IP addresses consistent across the entire data set, and removed payloads with tcpdpriv.

- POSTECH-KT 1 Gigabit Ethernet Trace 2007-01-09

POSTECH-KT collected bidirectional packet traces with 96 bytes of payload from a 1 Gigabit Ethernet link that connects POSTECH and KT.

- KAIST-KOREN 1 Gigabit Ethernet Trace 2007-01-09

KAIST-KOREN collected bidirectional packet traces with 96 bytes of payload from a 1 Gigabit Ethernet link that connects the KAIST campus network to KOREN, a national research network in Korea.

- KAIST-Hanaro Packet trace 2007-01-10

Packet payload traces collected on the inbound direction of Gigabit Ethernet links between KAIST and Hanaro, one of the major broadband ISPs in Korea.

- CNU Packet Trace 2007-01-09

Packet payload traces collected in both directions of a Gigabit border link which connects CNU (Chungnam National University) campus network and external networks such as KOREN, SuperSIREN, Hanaro Telecom, Dacom, and Korea Telecom.

- AMPATH Anonymized OC12 ATM Trace 2007-01-09

AMPATH collected bidirectional packet header traces with no payload from an OC12 ATM link on the AMPATH International Internet Exchange.

Data Access

To increase access to currently existing Internet datasets, CAIDA developed DatCat: the Internet Measurement Data Catalog. DatCat provides data documentation infrastructure to the Internet research, operations, and policies communities. The catalog lets researchers find, cite, and annotate data. We intend DatCat to facilitate searching and sharing of data, enhance documentation and annotation, and advance network science by promoting reproducible research. We index all the data collected during the DITL collection events in DatCat.

Access to the data collected during the DITL experiments varies based upon the policies of each collecting organization and the intended use of the data.

- OARC DNS root traces January 9-10, 2007

The OARC makes the root DNS traces available via membership and the Data Access Agreement. For each participating root server anycast instance, we stored (in compressed pcap package/format) the original data files on OARC servers.

- WIDE-CAMPUS 10 Gigabit Ethernet Trace 2007-01-09

- WIDE-TRANSIT 1 Gigabit Ethernet Trace 2007-01-09

- WIDE-TRANSIT Anonymized 1 Gigabit Ethernet Packet Header Trace 2007-01-09

Anonymized versions of the following traces without payload are publicly available at http://mawi.wide.ad.jp/mawi/samplepoint-F/20070109/. Access to the original traces is restricted Please send requests for the data to kjc at wide.ad.jp .

- POSTECH-KT 1 Gigabit Ethernet Trace 2007-01-09

Access to the original data files is restricted. Please send requests for the data to jwkhong at postech.ac.kr .

- KAIST-KOREN 1 Gigabit Ethernet Trace 2007-01-09

KAIST restricts access to this dataset but allows researchers outside KAIST to gain access through collaborative agreements to share analysis, implementation code, and results. For more information, please send email to Kilnam Chon, chon at cosmos.kaist.ac.kr .

- KAIST-Hanaro Packet trace 2007-01-10

Access restricted. Only KAIST staff can access the trace files. People outside KAIST can gain access to shared results only indirectly by providing implementation code for KAIST staff to run on the dataset. For more information, please send email to sbmoon at cs.kaist.ac.kr .

- CNU Packet Trace 2007-01-09

Access restricted. This dataset is not available to anyone outside CNU. People outside CNU can gain access to shared results only indirectly by providing implementation code for CNU staff to run on the dataset. For more information, please send email to Youngseok Lee, lee at cnu.ac.kr .

- AMPATH Anonymized OC12 ATM Trace 2007-01-09

CAIDA intends to make this data available to academic, government and non-profit researchers and CAIDA members.

The Metadata

To successfully reproduce experiments, we encourage participants to record and document not only the tools and methodology (e.g. tcpdump) used to collect their data but specific options and local machine configurations. For the January 2007 event, CAIDA provided a how-to document a data collection page with recommendations for metadata to record and store in DatCat that describes the collected data.

In addition to individual dataset descriptions, DatCat contains metadata that describes data collections, publications, formats, packages, locations, contacts, the motivation for and peculiarities of each data collection as well as citations for, and publications that make use of, the data. For each dataset from the DITL collection event, we store the following basic attributes such as: filenames, sizes, long and short descriptions, keywords, md5 hash value, gzip and tcpdump test flags, node name, first and last packet timestamps, maximum length of the packets, maximum capture length, counts of packets captured and truncated, and clock skew. For specific traces, such as those captured at the dns root servers, we record the server node name, counts of DNS queries and replies, counts of destination and source addresses of queries.

Coverage

Though the January 2007 experiment set a target of 50 hours of collection, some participants did not have resources to capture for the entire duration. Below we describe the periods of coverage for each of the collecting sites.

- OARC DNS root traces January 9-10, 2007

We analyzed the OARC DNS root server data to determine the largest continuous interval within the collection period with the maximum number of sites contributing data (2007-01-09 01:14:36 UTC to 2007-01-11 00:00:15 UTC) or 46 hours, 45 minutes, and 36 seconds.

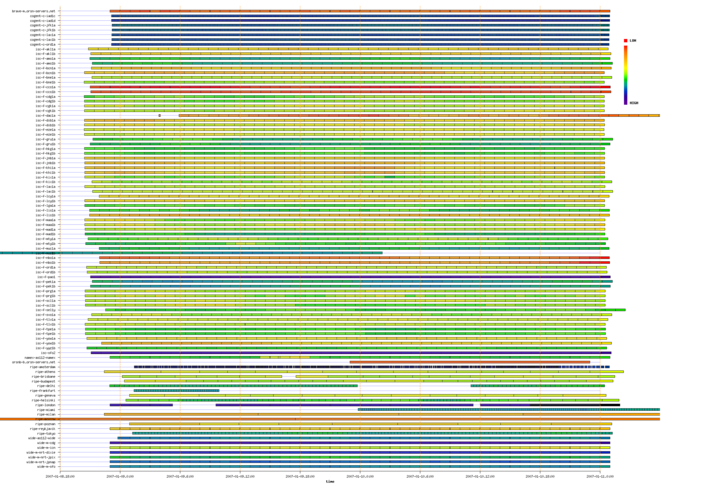

Figure 1 below shows the periods captured by participating DNS root servers during the Day In The Life of the Internet experiment conducted January 9-10, 2007. The colors indicate the relative rate of queries received by the anycast nodes with a range of 0 to 11,441 queries per second.

Figure 1. Coverage of DNS root servers during a Day In the Life of the Internet experiment conducted January 9-10, 2007. (Click for larger image)

- WIDE-CAMPUS 10 Gigabit Ethernet Trace 2007-01-09

This trace spans 44.5 hours and starts at 2007-01-08 19:27:09.402 PST (-0800).

- WIDE-TRANSIT 1 Gigabit Ethernet Trace 2007-01-09

This trace spans 50.25 hours and starts at 2007-01-08 14:45:01.108 PST (-0800).

- WIDE-TRANSIT Anonymized 1 Gigabit Ethernet Packet Header Trace 2007-01-09

This trace spans 50.25 hours and starts at 2007-01-08 14:45:01.108 PST (-0800).

- POSTECH-KT 1 Gigabit Ethernet Trace 2007-01-09

This trace spans 50 hours and starts at 2007-01-08 14:59:59.618 PST (-0800).

- KAIST-KOREN 1 Gigabit Ethernet Trace 2007-01-09

This trace spans 52 hours and starts at 2007-01-09 02:00 PST (-0800).

- KAIST-Hanaro Packet trace 2007-01-10

This trace spans 12 hours from 2007-01-10 11:58:56.810 KST (+0900).

- CNU Packet Trace 2007-01-09

This trace spans 4.1 hours from 2007-01-09 09:01:07.788 KST (+0900).

- AMPATH Anonymized OC12 ATM Trace 2007-01-09

This trace spans 50 hours and starts at 2007-01-08 23:00:30 UTC.

Lessons Learned

- Timing

Early notice increases the chances of participation. Scheduling the data collection experiment so soon after the new year was problematic, various participants being difficult to reach and coordinate. We planned the collection to occur on the second Tuesday and Wednesday of the calendar year for comparison with a similar collection we conducted last year on January 10-11, 2006. We hope to eventually support several such collections per year, scheduled in advance, and will avoid holiday periods unless they are of strategic scientific interest.

- Ownership Management Techniques

The Data Supplier Agreement should be simple. Having a simple one-page "Data Supplier Agreement" to ensure confidentiality of data stored at OARC has worked well for signing up new participants.

- System Requirements, Planning, and Monitoring

We found an underrated lesson in how many operating system and network administration issues are involved in transferring nearly a terabyte of data from various organization and individuals around the globe.

- Plan for disk storage. We would have benefited greatly from conducting a provisioning exercise to estimate the volume of data likely to be submitted versus available disk space. In the future, we should ask contributors for a size estimate in advance, and check against available disk space on the upload servers. Ideally, submitters should do a similar exercise on their local data collection servers, particularly those who do not gather this data on an ongoing basis, and where the upload bandwidth from the collection servers is limited.

- Monitor the file systems. Disk monitoring would assist with disk management and may provide early warning of conditions such as file systems filling faster than expected or unavailability.

- Conduct a dry-run of data collection as well as submission. The dry-run for data collection was an excellent idea, but in practice most of the issues were with data submission rather than collection. In particular, several submitters experienced problems with stateful firewalls at the outbound edge of their organization, causing file uploads to be truncated, corrupted or subject to maximum size limitations. A dry run for data uploading of least 1-2 hours worth of data would identify and navigate these issues prior to the collection event.

- Set up auto write-protection on files. A number of issues arose due to software and/or human error, when data files from one instance of a root server were confused with and overwrote previously submitted files from another instance. To minimize this risk one could setup auto write-protection on files immediately after upload, with a dialogue prompt to override this default when overwriting is explicitly intended.

- Remind participants to adjust for local configuration. Based on this year's experience, it would be useful to remind all participants before future experiments to either temporarily disable any auto-rotate scripts that delete data after an expiry period, or to copy older files to another location.

- Create a mechanism for participants to easily track upload status. Verification of the presence and integrity of the data uploaded by the submitter is not easy using the current scp-based access methods. A number of files uploaded were incomplete, which only became clear after subsequent local decompression verification. We created an extremely useful web page of "file upload status" during the exercise, which we will definitely use for future collecting events. Another possible option is to give contributors sftp and/or shell access to verify and amend their uploads.

- Agree in advance on standard filenaming conventions and formats. Publishing agreed standards for upload filename formats in advance of the exercise could head off various confusion.

- Keep a log of MD5 checksums of files submitted. Even after deleting files from the local collector, it would be potentially useful to keep a log of the MD5 checksum of files submitted for post-verification.

- Set up intermediate staging servers. It may be useful to set up some intermediate "staging" servers which can perform store-and-forward of data between the local collectors and central server. This setup could potentially mitigate some of the disk space and bandwidth issues experienced.

Future Areas of Work for DITL DNS Data

We highly encourage exploratory research and feedback on the DITL data. As a starting point, we suggest the following analysis tasks for the DNS subset of data:

- Automatic pre-processing of data:

(semi-)automatic extraction of "the largest common interval" covered by the data, visualization of the coverage, exclusion of large gaps and errors.

- Computation of basic statistics:

average query rates, number of clients per second seen at each instance, topological coverage of ASes and prefixes, percentage of private RFC1918 traffic.

- Geographical analysis:

client distribution by continents for each anycast instance for use in an Influence Map of DNS Root Anycast Servers.

- Analysis of query loads:

For each root server anycast cloud, the distribution of users binned by query rate intervals with the total number of queries sent by users within each bin. Detailed characteristics of the top five (or more) users for each root node listing IP address, origin AS name and number, location, and relevant statistics such as average query rate and breakdown of query types.

- Analysis of Anycast stability:

For all clients of all root servers - the distribution by the number of nodes they sent queries to. For "unstable" clients, report switches among anycast nodes, the frequency of switches, IP address, origin AS name and number, and geographic location. For each root server anycast cloud, report the percentage of clients switching instances within the same anycast group and to other root servers and the distribution of clients by the number of root server instances they queried. This paper presented at PAM 2007 of Two Days in the Life of the DNS Anycast Root Servers from the 2006 experiment serves as a good example of such analysis.

Additional Data

Resources permitting, CAIDA would like to integrate the following additional datasets into the DITL collection.

- Denial-of-Service attack backscatter data collected by the UCSD Network Telescope

The UCSD network telescope consists of a globally routed /8 network that carries almost no legitimate traffic. Because the legitimate traffic is easy to separate from the incoming packets, the network telescope provides us with a monitoring point to capture anomalous traffic that represents almost 1/256th of all IPv4 destination addresses on the Internet.

The UCSD Network Telescope is a continuous collection system that monitors malicious events such as Denial-of-Service (DOS) attack backscatter, Internet worms, and host scanning. CAIDA hopes to add the DOS backscatter metadata for the January 2007 DITL collection dates to DatCat.

- Macroscopic Topology Measurements

CAIDA's Macroscopic Topology Measurements project actively measures connectivity and latency data for a wide cross-section of the commodity Internet. CAIDA currently uses a special tool called skitter which actively probes forward IP paths and round trip times (RTTs) from a monitor to a specified list of destinations. This continuous collection system stores data in individual files classified by skitter host and by day, where day is defined as a 24 hour period starting from midnight UTC.

- University of Oregon Route Views Project

Route Views provides a tool for Internet operators to obtain real-time information about the global routing system from the perspectives of several different backbones and locations around the Internet. The Route Views project makes Cisco BGP RIBs and updates collected from participating routers in MRT format available to the public via anonymous ftp (or http) on ftp://archive.routeviews.org/.

- Internet2 NetFlow Statistics

Internet2 collects sampled Cisco NetFlow data for the Abilene backbone network of Internet2 and makes daily and weekly reports available.

Conclusion

OARC, acting on the recommendations of the National Academy of Sciences report "Looking over the Fence at Networks: A Neighbor's View of Networking Research (2001)", had never before supported such a broad reaching collection event yet delivered an unprecedented data set that provides a glimpse into a day in the life of the Internet.

For those interested in the details of operational issues faced during the collection event, see the report on OARC's DITL Data gathering Jan 9-10th 2007.

Related Links

- DatCat: Internet Measurement Data Catalog

- Operations, Analysis, and Research Center (OARC)

- Recommendations for future large scale simultaneous DNS data collections

Appendix A: Pcap File Format and OARC Data Quality

Pcap Files

We asked participants to capture packet traces in "pcap" file format using tcpdump (or something similar). Since the amount of data is large, we suggested that pcap files should be split into reasonable sizes, such as one-hour long chunks. Table 1 summarizes the number and compressed size of pcap files received from the participants.

| Participant | Files | Bytes |

|---|---|---|

| brave | 50 | 56,431,330 |

| cogent | 4182 | 178,386,154,065 |

| isc | 3372 | 169,853,420,719 |

| namex | 52 | 4,402,006,593 |

| orsnb | 1 | 54,651,682 |

| ripe | 1942 | 192,486,356,506 |

| wide | 350 | 254,120,851,772 |

| All | 9949 | 799,359,872,667 |

Table 1. The number and compressed size of pcap files received from the participants.

The goal for DITL 2007 was to collect 48 hours worth of data. To be safe, most participants began their collection at least one hour before, and stopped one hour after, the 48 hour period.

OARC Data Quality

Missing Data

Despite the best efforts of all involved, some data was lost. We are aware of four reasons for lost or missing data:

- Not enough local storage. Many of the machines where data was collected were designed only for running the DNS server. They do not have a significant amount of local disk storage. The data could not be sent to the OARC server before running out of disk space.

- Not enough server storage. The server receiving the uploaded pcap files was underprovisioned at the start of data collection. It ran out of disk space, thus putting more strain on those participants with small amounts of local storage. In addition, the collection server crashed a number of times during data collection, leaving partial uploads in place.

- Time zone mistakes. It seems that at least one collection node was configured to use a local time zone instead of UTC. On this machine, the collection process was started via cron(8) or at(1). Since the time zone was wrong, collection began and stopped six hours too late.

- Human error. On the receiving side we mistakenly removed two pcap files while trying to fix some problems. One of these files was still held at the source and could be recovered; the other was lost.

Duplicate Data

In addition to missing data, we also found duplicated data for some nodes. One participant (ISC) mistakenly started two tcpdump jobs on two of their collection nodes. This resulted in overlapping sets of pcap files.

Another participant (RIPE) also had two tcpdump processes running on one node for a few hours. This happened as they realized there was insufficient local storage and made some changes to their collection procedure.

Extra Data

One participant (ISC) has dedicated collection infrastructure at some their busy nodes. The collection machine receives packets via network tap so that data capture does not interfere with operation of the production server. The collection infrastructure is also connected to other production nameservers at the same location. Some of the ISC traces contain DNS messages from the other DNS servers.

Truncated Packets

By default, tcpdump does not capture the entire packet payload. It will only get the first 96 octets of the packet unless the snaplen is increased with the -s command line option. The pcap file stores the size of each packet, so it is easy to find cases where packet data was truncated.

One participant ("ORSNB/FunkFeuer Free Net") forgot to specify the snaplen and, therefore, only captured 96 octets of each Ethernet frame.

In the RIPE data, it appears that snaplen was set to 1500, while it should have been set slightly higher because tcpdump also stores the 14-octet Ethernet MAC header. This is probably not a serious problem, however, because DNS queries rarely exceed even 512 octets.

Clock Skew

During the data collection period we sent special queries to some nameservers in order to detect clock skew. Clock skew occurs when the clock of the collection machine is not synchronized to the global time standard. All DITL participants assured us that their systems use NTP, but since we don't have access to those machines, our technique allows us to check.

We detected five nodes with clock skew greater than 4 seconds. The worst cases were 20 and 17 seconds. While clock skew is not a problem for most of our analysis, it could cause problems when characterizing anycast stability.

Additional Content

/projects/ditl/summary-2007-01/oarc-ditl-operations-report-200701v1.1/

It became clear towards the end of the data collection that the

storage array (sa1) connected to the in1 collection server would fill

up before all data was submitted. sshd on in1 was thus shut down, and

the new storage volume was mounted via NFS. Synchronization of data

between old and new storage array volumes was then attempted, this

needed incoming data submission via sshd to remain shut down while

this proceeded.

This impacted DSC submission as well as it was difficult to separate

out DITL/PCAP and DSC upload services.

Some spare space found, uploads were briefly re-enabled.

in1 server crashed with data sync incomplete, caused NFS mounts to an1 server to hang.

Re-enabled PCAP uploads to in2 server, sync continuing.

Fixed NFS problems to an1 analysis server, re-enabled DSC uploads.

Various crashes of in2 server. DSC cron jobs suspected as possible cause - temporarily

disabled these and started migrating DSC to use postgres for data storage.

JFS problem on new fd1 file server, blocked NFS mounts to an1.

Crash of fd1 file server, caused in2 to hang. Power cycled fd1.

Further crash of fd1 file server, blocked NFS mounts to an1 and in2.

Installed latest standard non-SMP 2.6.16.27-0.6-default kernel on fd1,

re-mounted filesystem on an1.

Postgres problems on in2 - needed reboot.

Further in2 crash. Restored, then shut down in2 and fd1 to upgrade BIOS firmware.

Loss of an1 NFS mount again, fixed on 26th.

in2 server OS software upgraded to FreeBSD 6.2.

Brief downtime of sa1 RAID controller for performance tuning.

DDoS attack against various root servers - requirement for more space to store PCAP attack

data as well as DITL data.

Further loss of an1 NFS mount.

Crash of in2 due to lock violation.

fd1 server and NFS access to it down for 70 minutes for successful scheduled upgrade

of storage array.

Various crashes of in2 server.

A longer term resolution was achieved by replacing the disks in the

old array volume with further higher-capacity new disks, and then on

2nd March re-partitioning and merging this into a single new volume

with a total of 4.5TB capacity

The OARC filesystem is now at 36% of available capacity including both

DITL data to date and additional data collected during the DDoS attack

on 6/7th March,

OARC Report on DITL Data gathering Jan 9-10th 2007 - V1.1

Participants

Operational History

Decision was taken to migrate uploads to in2

server which had more up-to-date/stable OS software.

Data synchronization failed, needed to be restarted.

Downtime for oarc.isc.org website while migrating.

Disabled uploads and re-started data sync again.

DSC uploads still disabled at this point.

Loss of public.oarci.net website from in1.

public.oarci.net drupal website restored on in1.

DSC service re-started after migration from in1 to in2 server.

Resolved Issues

Open Issues

Lessons Learned