QUINCE (Quality of User Internet Customer Experience): a Reactive Crowdsourcing-based QoE Monitoring Platform

The proposal "A Reactive Crowdsourcing-based QoE Monitoring Platform" is also available in PDF.

Principal Investigators: Amogh Dhamdhere, kc claffy

Funding source: Joint Experiment. Period of performance: January 1, 2018 - November 30, 2018.

Abstract

Measuring the Quality of Experience (QoE) in a real-world environment is challenging. Even though a number of platforms have been deployed to gauge network path performance from the edge of the Internet, one cannot easily infer QoE from that data because of the subjective nature of QoE. On the other hand, crowdsourcing-based QoE assessment, namely QoE crowdtesting, is increasingly popular for conducting subjective assessments of various services, including video streaming, VoIP, and IPTV. Workers on crowdsourcing platforms can access and participate in assessment tasks remotely through the Internet. The experimenter can also select a pool of potential workers according to their geolocation or their historical accuracy. Existing QoE crowdtesting mainly evaluates emulated scenarios instead of studying the impact of Internet events, because the launch of a QoE crowdtesting campaign is usually not driven by network measurement results. Although we can measure network path quality from the workers, it is difficult to correlate the assessment results with Internet events because the assessment time and the measured network path differ from those of the events.

1 Research Approach

Measuring the QoE of users is known to be a hard problem for network and service providers. Traditional active and passive network measurements can only obtain objective metrics, from which it can be hard to infer the QoE of users. QoE crowdtesting is becoming popular because it can obtain feedback from human subjects at a lower cost and within a shorter period of time than traditional laboratory-based assessments. Although the workers access the tests through the Internet and are able to conduct some simple network measurement tests, QoE crowdtesting is often evaluated on emulated scenarios (e.g., [5,9]). This is because there is no existing method to determine when and what to measure with QoE crowdtesting. In this project, we propose a reactive framework to launch QoE crowdtesting in a timely manner to evaluate the impact of network events, such as link congestion, on user QoE.

2 The Reactive Framework

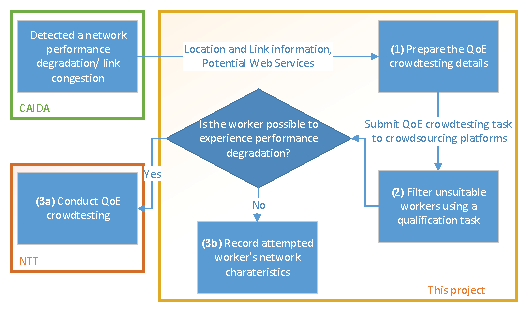

This framework consists of three major steps, as shown in Figure 1.

- (1) The first step is to collect network event information from the measurement infrastructure and prepare the QoE crowdtesting. The congestion measurement infrastructure provides the ASes at the ends of an interdomain link, the relevant IPs, and possibly geolocation information. We propose to explore the web services that may be hosted behind that link using hints in reverse DNS names. We are particularly interested in video streaming services, which account for a large fraction of peak-hour Internet consumption by end users. For example, if the congestion measurement system finds evidence of link congestion between an ISP and Netflix, then the platform can automatically launch a QoE crowdtesting campaign on the crowdsourcing platforms to invite workers who use that ISP to assess the QoE of watching a video streamed from Netflix.

- (2) The crowdsourcing platforms only support coarse-grained filters for selecting workers, such as the country, the language proficiency, and the past accuracy of workers. Some workers who wish to enroll in our task will not be suitable for assessing the targeted network events. To save cost and time, we will devise a qualification test: a short task that the workers will perform, which will give us access to their network information, such as the IP address and city-level geolocation. This step will allow us to choose only qualified workers, i.e., workers who are most likely to be in a position to evaluate the QoE effects of the network events we consider.

- (3a) The qualified workers then conduct the QoE crowdtesting that we prepared in Step (1), thus enabling us to evaluate the effect of the network events on QoE.

- (3b) We will also log the data for unqualified workers, as they can be useful for testing future events. After collecting sufficient data, we can "pre-approve" or "blacklist" workers who are known to be suitable or unsuitable, respectively.
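The three steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the project's implementation: the `CongestionEvent` record, the `SERVICE_HINTS` keyword table, the function names, and the example host name are all hypothetical, and the prefixes come from the IP ranges reserved for documentation.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical keyword -> service table; a real deployment would need a curated list.
SERVICE_HINTS = {"nflx": "Netflix", "akamai": "Akamai", "googlevideo": "YouTube"}


@dataclass(frozen=True)
class CongestionEvent:
    """Assumed shape of one record from the congestion measurement infrastructure."""
    near_asn: int         # AS on the access-ISP side of the interdomain link
    far_rdns: str         # reverse DNS name of the far-side interface
    isp_prefixes: tuple   # prefixes attributed to the access ISP (e.g., from BGP)


def infer_service(rdns_name):
    """Step (1): guess the service behind the link from reverse DNS keywords."""
    name = rdns_name.lower()
    for keyword, service in SERVICE_HINTS.items():
        if keyword in name:
            return service
    return None  # no hint found; the event cannot be mapped to a service


def is_qualified(worker_ip, event):
    """Step (2): a worker qualifies if their IP lies inside one of the ISP prefixes."""
    addr = ipaddress.ip_address(worker_ip)
    return any(addr in ipaddress.ip_network(p) for p in event.isp_prefixes)


def triage(worker_ips, event):
    """Steps (3a)/(3b): split workers into those who run the test and those only logged."""
    qualified = [ip for ip in worker_ips if is_qualified(ip, event)]
    logged_only = [ip for ip in worker_ips if not is_qualified(ip, event)]
    return qualified, logged_only


# Usage with documentation-only addresses and a made-up host name.
event = CongestionEvent(64500, "cache1.nflxvideo.example", ("192.0.2.0/24",))
service = infer_service(event.far_rdns)
qualified, logged_only = triage(["192.0.2.17", "198.51.100.5"], event)
```

Matching on the worker's IP prefix is the simplest possible qualification rule; it does not guarantee that the worker's traffic actually crosses the congested link, which is why the proposal treats path prediction as a separate research challenge.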

2.1 An Example

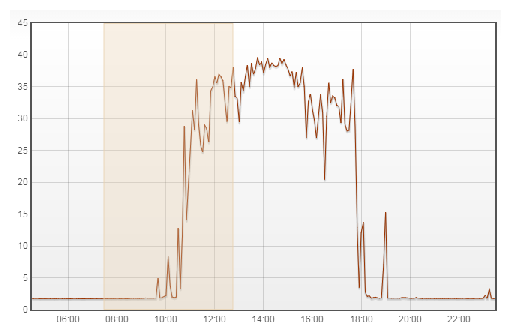

We illustrate the framework with the following example. Figure 2 shows a time-series of the round-trip time (RTT) from a probe in Hong Kong to a local newspaper website using Akamai's CDN service. From the traceroute data, we find that the RTT inflation is probably caused by a congestion event between the university and Wharf T&T. Therefore, we expect that the framework will launch QoE crowdtesting by enlisting workers from that university to stream video clips from Akamai in order to assess the impact on QoE.

3 Research Challenges

There are a number of technical challenges that we will address in this project:

3.1 Choice of Crowd

It is important to choose the correct crowd (a pool of people) to conduct QoE crowdtesting, because the size and diversity of the crowd affect the chance of measuring from workers who are influenced by a network event. One possible way is to build our own crowd and require the participants to install software (e.g., Dasu [18] and Mobilyzer [16]). However, this approach is hard to scale and the participants tend to have low diversity. Furthermore, there is no real incentive for the participants to conduct QoE assessments, which results in sparse measurements.

In this project, we propose a framework which utilizes the large and diverse pool of workers available from existing crowdsourcing platforms. For example, Amazon Mechanical Turk (MTurk) claims to have more than half a million registered workers from 190 countries. Around 70% of MTurk workers are from the US [3]. Furthermore, a recent study [20] collected workers' information using seven MTurk accounts that were registered, and submitted tasks, at different geographical locations. The authors found that each MTurk account can, on average, reach a population of 7,300 MTurk workers. Apart from MTurk, we can also utilize other platforms such as Microworkers [2] and CrowdFlower [1] to enlarge the pool of accessible workers.

Even though the worker base in existing public crowdsourcing platforms is large, there is still a possibility that the framework cannot find sufficient workers, or cannot find workers in the "right" place to assess the observed network events. In this case, we will investigate the possibility of inviting participants from the networks where the Ark probes are located. In several cases, our Ark probes are in residential locations, hosted by volunteers who may be willing to perform QoE measurements on request. Testing from the Ark vantage points will ensure that the QoE tests traverse the same paths as the network measurement tests.

3.2 Worker Selection

Selecting the workers who are affected by a particular network event (Step (2)) can be a challenging problem, because the browser has limited capability for performing network measurements. For example, traceroute cannot be run in the browser. Therefore, we can rely on control plane information (e.g., BGP) and data from other measurement infrastructures (e.g., iPlane and RIPE Atlas) to predict the network path. Furthermore, the worker database in our framework can help speed up the worker selection process. The completion time of the QoE crowdtesting task is also critical, because the network degradation may be short-lived. To alleviate this problem, we can actively invite suitable workers by sending email or personal messages via the platform. Furthermore, we propose to dynamically adjust the wages for the crowdsourced workers, which can attract a sufficient number of workers in a timely manner, i.e., before the network degradation event ends.

3.3 Network Event Selection

A number of network events on the Internet can degrade network performance and hence impact the QoE of end users. The measurement infrastructure we have built can detect the presence of network congestion in a timely manner. However, the platform still has to determine which events are likely to affect the QoE. In this project, we can focus on inter-domain congestion events, because congested links can result in significant delay inflation and packet loss. Prior work [12] showed that these two network impairments can cause QoE degradation in video streaming services.

3.4 QoE Crowdtesting Task Preparation and Design

The design of the QoE crowdtesting task is important for measuring QoE reliably. For example, the description of the QoE crowdtesting task displayed on the dashboard can help attract suitable workers. We will consider publicly available information, such as BGP, IP-geolocation, and ISP-AS mapping, to convert technical details into layman's terms. For example, consider an inter-domain congestion event between an ISP and a video streaming provider. The IP-geolocation mapping of the links may narrow the event down to a physical area, say California. We can then specify in the task that we are interested in users in California who connect through that ISP, so that workers can judge whether they suit our task. Besides, other factors, including wages and the length of the task, can also affect the incentive to participate in the task. The collaboration with NTT can further facilitate the design of the QoE assessment.
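As a rough illustration of turning event details into layman's terms, the sketch below builds a dashboard description from an ISP name, a service name, and an optional region. The function name, its parameters, and the wording of the description are assumptions made for this example; the reward and duration fields are included only because wages and task length are mentioned above as incentive factors.

```python
def build_task_description(isp_name, service_name, region=None,
                           reward_usd=None, minutes=None):
    """Return a plain-language task description for a crowdsourcing dashboard.

    All arguments are hypothetical inputs that would come from ISP-AS mapping
    (isp_name), reverse DNS hints (service_name), and IP geolocation (region).
    """
    where = f" in {region}" if region else ""
    lines = [
        f"We are looking for people{where} whose Internet provider is {isp_name}.",
        f"You will watch a short video streamed from {service_name} "
        "and rate how well it played.",
    ]
    # Mention task length and reward only when the experimenter supplies them.
    if minutes is not None:
        lines.append(f"The task takes about {minutes} minutes.")
    if reward_usd is not None:
        lines.append(f"Reward: ${reward_usd:.2f}.")
    return " ".join(lines)


# Usage with placeholder names.
description = build_task_description(
    "ExampleNet", "ExampleVideo", region="California", reward_usd=0.5, minutes=5
)
```

Keeping the region optional reflects the fact that geolocation may not always narrow an event down to a usable area; in that case the description falls back to naming the ISP only.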

Table 1: Summary of different quality metrics for video streaming investigated in the existing literature. The metrics considered are the initial bitrate, the highest bitrate, the bitrate switching frequency and amplitude, the initial delay, and the number and duration of rebuffering events. ↑, -, and ↓ denote improving, having no effect on, or degrading the QoE, respectively.

| Study | Reported effects on QoE |
| --- | --- |
| [15] | ↓ |
| [9] | ↓ ↓ |
| [8] | ↓ ↓ |
| [11] | ↑ ↓ ↓ |
| [19] | - ↓ ↓ |
| [10] | ↑ - ↓ |
| [7] | - - - |
| [12] | ↓ ↓ ↓ |
| [14] | ↓ |
| [13] | ↑ ↓ |

References

- [1] Crowdflower. http://www.crowdflower.com/.

- [2] Microworkers. https://microworkers.com/.

- [3] mturk tracker. http://demographics.mturk-tracker.com.

- [4] CAIDA. AS Ranking. https://asrank.caida.org/.

- [5] K.-T. Chen, C.-J. Chang, C.-C. Wu, Y.-C. Chang, and C.-L. Lei. Quadrant of euphoria: a crowdsourcing platform for QoE assessment. IEEE Netw., 24(2):28-35, 2010.

- [6] CrowdFlower. Crowd demographics. https://success.crowdflower.com/hc/en-us/articles/202703345-Crowd-Demographics.

- [7] S. Egger, B. Gardlo, M. Seufert, and R. Schatz. The impact of adaptation strategies on perceived quality of HTTP adaptive streaming. In Proc. ACM VideoNext, 2014.

- [8] T. Hoßfeld, S. Egger, R. Schatz, M. Fiedler, K. Masuch, and C. Lorentzen. Initial delay vs. interruptions: Between the devil and the deep blue sea. In Proc. QoMEX, 2012.

- [9] T. Hoßfeld, M. Seufert, M. Hirth, T. Zinner, P. Tran-Gia, and R. Schatz. Quantification of YouTube QoE via crowdsourcing. In Proc. IEEE ISM, 2011.

- [10] T. Hoßfeld, M. Seufert, C. Sieber, and T. Zinner. Assessing effect sizes of influence factors towards a QoE model for HTTP adaptive streaming. In Proc. QoMEX, 2014.

- [11] Y. Liu, Y. Shen, Y. Mao, J. Liu, Q. Lin, and D. Yang. A study on quality of experience for adaptive streaming service. In Proc. IEEE IIMC, 2013.

- [12] R. Mok, E. Chan, and R. Chang. Measuring the quality of experience of HTTP video streaming. In Proc. IFIP/IEEE IM (pre-conf), 2011.

- [13] R. Mok, W. Li, and R. Chang. IRate: Initial video bitrate selection system for HTTP streaming. IEEE JSAC, in press, 2016.

- [14] R. Mok, X. Luo, E. Chan, and R. Chang. QDASH: A QoE-aware DASH system. In Proc. ACM MMSys, 2012.

- [15] P. Ni, R. Eg, A. Eichhorn, C. Griwodz, and P. Halvorsen. Spatial flicker effect in video scaling. In Proc. IEEE QoMEX, 2011.

- [16] A. Nikravesh, H. Yao, S. Xu, D. Choffnes, and Z. M. Mao. Mobilyzer: An open platform for controllable mobile network. In Proc. ACM MobiSys, 2015.

- [17] J. Redi, T. Hoßfeld, P. Korshunov, F. Mazza, I. Povoa, and C. Keimel. Crowdsourcing-based multimedia subjective evaluations: A case study on image recognizability and aesthetic appeal. In Proc. ACM CrowdMM, 2013.

- [18] M. Sánchez, J. Otto, Z. Bischof, D. Choffnes, F. Bustamante, B. Krishnamurthy, and W. Willinger. Dasu: Pushing experiments to the Internet's edge. In Proc. USENIX NSDI, 2013.

- [19] N. Staelens, J. D. Meulenaere, M. Claeys, G. V. Wallendael, W. V. den Broeck, J. D. Cock, R. V. de Walle, P. Demeester, and F. D. Turck. Subjective quality assessment of longer duration video sequences delivered over HTTP adaptive streaming to tablet devices. IEEE Trans. Broadcast., in press, 2014.

- [20] N. Stewart, C. Ungemach, A. J. L. X. Harris, D. M. Bartels, B. R. Newell, G. Paolacci, and J. Chandler. The average laboratory samples a population of 7,300 Amazon Mechanical Turk workers. Judgment and Decision Making, 10(5), 2015.

- [21] YouTube. Live encoder settings, bitrates, and resolutions. https://support.google.com/youtube/answer/2853702?hl=en.
