CAIDA's Annual Report for 2007

Mission Statement: CAIDA investigates practical and theoretical aspects of the Internet, focusing on activities that:

- provide insight into the macroscopic function of Internet infrastructure, behavior, usage, and evolution,

- foster a collaborative environment in which data can be acquired, analyzed, and (as appropriate) shared,

- improve the integrity of the field of Internet science,

- inform science, technology, and communications public policies.

Executive Summary

This annual report covers CAIDA's activities in 2007, summarizing highlights from our research, infrastructure, and outreach activities. Our current research projects, funded by the U.S. National Science Foundation (NSF) and U.S. Department of Homeland Security (DHS), include several measurement-based studies of the Internet's core infrastructure, with focus on the health and sustainability of the global Internet's topology, routing, addressing, and naming system. Our routing research project made fundamental advances this year in applying key theoretical computer science results to solving an imminent global Internet problem: the lack of a truly scalable Internet routing architecture. In support of realistic modeling of routing on Internet-like networks, we improved our well-received dK-series topology modeling construct to support node and link annotations, as well as realistic topology generation. Our DNS research project included a collaboration with ISC's DNS-OARC to hold an unprecedented coordinated collection of all traffic to a set of DNS root name servers on January 9-10, 2007. We published analyses and visualizations of the results and helped disseminate the largest set of simultaneous DNS traffic data ever made available to DNS researchers.

Per CAIDA's mission, our infrastructure activities aim to narrow the growing gap that impedes the field of network research, as well as telecommunications policy and infrastructure sustainability: a dearth of available empirical data on the public Internet since the infrastructure privatized in the mid-1990s. Over the last decade our ability to get data from the commercial Internet has gradually diminished, as has the quality of science in the field of Internet research. In 2007 we continued to maintain a catalog of Internet measurement data sets, contributed to the planned (DHS-funded) PREDICT repository of datasets to support cybersecurity research, and developed and deployed new active and passive measurement infrastructure. We made tremendous progress on renovating our active measurement infrastructure, now collecting the most comprehensive set of IPv4 topology measurements made available to researchers, enhanced with DNS information. Our data repository now includes weekly archives of complete Internet AS-level topologies enriched with AS relationship information, and weekly updates of AS (ISP) rankings.

We led and participated in tool development to support measurement, analysis, indexing, and dissemination of data from operational global Internet infrastructure. Highlights include geographical visualizations of DNS workload to a given set of servers, updates to our IPv4 and IPv6 AScore posters, and visual maps of IPv4 address space consumption.

As an Internet data analysis and research group largely supported with public funding, we accept a responsibility to seek, analyze, and communicate the salient features of the best available data about the Internet, as well as identify and track progress on its underlying weaknesses. There is a common set of operational problems across the Internet industry which can be classified into four dimensions of emerging critical infrastructure: safety, scalability, sustainability, and stewardship. The bad news for scientists and engineers is that making progress on all of these operational problems, even those that seem technical in nature, is blocked on non-technical issues of economics, ownership, and trust. For ten years CAIDA sought to tackle one problem -- measurement -- whose biggest obstacles had long clearly been economic (cost of instrumentation and data management), ownership (legal access to data), and trust (privacy and security obstacles to measurement). A more recent, and more painful, insight was that measurement is not unique in this regard: all persistently unsolved operational problems of the Internet are blocked on issues of economics, ownership, and trust (EOT). In 2006 (annual report) CAIDA began to expand its scope, including realigning some projects and pursuing new projects to specifically recognize and cross the boundaries between technology, policy, and economics. Our notable contributions in this area this year were a paper on "The (Un)economic Internet", and six original entries on CAIDA's new blog: According to the Best Available Data.

Finally, we engaged in a variety of outreach activities, including web sites, peer-reviewed papers, technical reports, presentations, blogging, animations, and workshops. Details of our activities are below. CAIDA's program plan for 2007-2010 is available at https://www.caida.org/about/progplan/progplan2007/. As always, please do not hesitate to send comments or questions to info at caida dot org.

Research Projects

Routing

Toward Mathematically Rigorous Next-Generation Routing Protocols for Realistic Network Topologies

Goals

Routing is a core element of any network architecture, but also the function with the greatest scalability challenges. Ominously, and in fact a motivation for the FIND program, there is broad consensus that the existing Internet routing architecture has reached its scalability limits and needs to be replaced. The best available data suggests that the most limiting factor of growth in the Internet routing system is the requirement to propagate updates across the network for each topology change. Confronting this reality, our project proposes a radical shift in the philosophy of network routing, as we investigate the possibility of routing without these resource-intensive updates, instead exploiting underlying macroscopic structure in the network topology to find efficient paths. Discovery of the relevant underlying structure of the Internet, which we call its 'hidden metric space' (HMS), will have profound practical implications for the Internet, potentially enabling an Internet routing architecture that is indefinitely scalable to the world's needs. Early results of our investigation of the relationship between the structure and function of complex networks also imply fundamental ramifications for our understanding of complex systems in other disciplines.

Activities

We demonstrated that logarithmic scaling of routing table (RT) sizes on Internet-like topologies is fundamentally impossible in the presence of topology dynamics or topology-independent (flat) addressing. We showed analytically that the number of routing control messages per topology change cannot scale better than linearly on Internet-like topologies. We also confirmed by simulations that topology-independent addressing breaks logarithmic RT size scaling. These pessimistic findings led us to the conclusion that a fundamental re-examination of assumptions behind routing models and abstractions is needed in order to find a routing architecture that would be able to scale 'indefinitely'.

We began exploring new approaches to scalable routing. We developed analytic tools to extract from a given network the important scaling properties of routing, and the structure of paths produced by a given routing algorithm. We found that scale-free structure of the real complex networks maximizes their navigability when using a greedy routing strategy -- forward the packet to your neighbor who is closest to the destination in a hidden metric space. No other network structure allows such efficient path navigation. The Internet belongs to the class of such navigable networks, but its routing architecture does not take any advantage of this structural property or its promise for scalable routing, nor do we yet know how to describe or even prove the existence of its hidden metric space, which we set forth as our next goal, broken into the following steps:

- Obtain empirical evidence that hidden spaces do exist and that they are metric indeed;

- Identify navigability mechanisms that influence the efficiency of greedy routing in complex networks;

- Find the basic geometrical and topological properties of hidden spaces that make them maximally congruent with respect to the identified navigability mechanisms;

- Obtain empirical evidence that hidden spaces under real networks do possess these properties; and

- Find mappings of nodes in real networks to the identified spaces or their models.
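The greedy forwarding rule described above (hand the packet to the neighbor closest to the destination) can be illustrated with a minimal sketch. The sketch assumes that every node already has known coordinates and that distance is plain Euclidean distance; both are illustrative stand-ins, since the actual hidden metric space of the Internet is exactly what remains to be found. The function name `greedy_route` and the toy graph are hypothetical.

```python
import math


def greedy_route(adjacency, coords, source, target, max_hops=100):
    """Forward hop by hop to the neighbor closest to the target.

    Returns the path as a list of nodes, or None if the packet gets stuck
    at a node none of whose neighbors is closer to the target.
    Assumption: coords maps each node to a point in a Euclidean space.
    """
    def dist(a, b):
        return math.dist(coords[a], coords[b])

    path = [source]
    current = source
    while current != target and len(path) <= max_hops:
        # Pick the neighbor with the smallest distance to the target.
        best = min(adjacency[current], key=lambda n: dist(n, target), default=None)
        if best is None or dist(best, target) >= dist(current, target):
            return None  # local minimum: greedy forwarding fails here
        path.append(best)
        current = best
    return path if current == target else None


# Toy topology (hypothetical): a short chain a-b-c plus a detour a-d-e.
coords = {"a": (0, 0), "b": (1, 0), "c": (2, 0), "d": (1, 5), "e": (3, 0)}
adjacency = {
    "a": ["b", "d"],
    "b": ["a", "c"],
    "c": ["b"],
    "d": ["a", "e"],
    "e": ["d"],
}

print(greedy_route(adjacency, coords, "a", "c"))  # ['a', 'b', 'c']
print(greedy_route(adjacency, coords, "a", "e"))  # None: stuck at c
```

The second call shows why the geometry matters: node e is reachable only through d, but d looks far from e in this embedding, so greedy forwarding walks into a dead end. A network is navigable when such failures are rare.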

In collaboration with S. Shakkottai from Stanford we refined our model of Internet growth which attempts to explain preferential attachment based on economic realities of the AS-level Internet. To our knowledge, this is the first Internet evolution model that is realistic, analytically tractable, and entirely based on physical, that is, measurable, parameters. Most important, when we substitute the measured values of the parameters into analytic expressions of the model it predicts and explains the observed value of the exponent of the power-law node degree distribution in real AS topologies. Thus, this model closes the 'measure-model-validate-predict' loop.

Major Milestones

- Analysis of compact routing schemes

- Completed the analysis of compact routing schemes and published our results in CCR ("On Compact Routing for the Internet" by D. Krioukov, k. claffy, K. Fall, and A. Brady).

- Demonstrated that there can be no dynamic or even static name-independent scheme that would scale polylogarithmically on scale-free graphs.

- Began working on new exploration of self-similarity of complex networks and hidden metric spaces to underlying real networks. A paper "Self-Similarity of Complex Networks and Hidden Metric Spaces" by M. Angeles Serrano, D. Krioukov, and M. Boguna is in preparation.

- Began working on new approaches to routing scalability based on greedy routing strategies. A paper "Navigability of Complex Networks" by M. Boguna, D. Krioukov, and k. claffy is in preparation.

- We developed a network topology modeling framework that treats annotations as an extended correlation profile of a network. This framework allows us to capture the large-scale structure of the Internet for purposes of rescaling. We describe this work in the paper "Graph Annotations in Modeling Complex Network Topologies" by X. Dimitropoulos, D. Krioukov, A. Vahdat, and G. Riley.

- Outreach

- Published in CCR a report on the ISMA Workshop on Internet Topology (WIT).

- Evolution

- Developed a new model of the Internet growth.

- Submitted a paper "Evolution of the Internet AS-level Ecosystem" by S. Shakkottai, M. Fomenkov, R. Koga, D. Krioukov, and k. claffy to INFOCOM.

Student Involvement

The following graduate students participated in this research and were responsible for various tasks accomplished:

C. Hubble and J. Burke (both UCSD), and A. Brady (Tufts) simulated various compact routing models and estimated their performance.

A. Stauffer (UCB) worked on mapping growth-based network models to equilibrium ensembles.

Topology

Macroscopic Topology Measurements, Analysis, and Modeling

Goals

CAIDA's topology research remains focused on three areas: macroscopic topology measurement; topology modeling in support of routing research; and analysis of the observable ISP and router-level topologies.

Activities

In 2007 CAIDA made significant progress in all three areas of topology research.

We continued large-scale macroscopic topology measurements while developing and deploying Archipelago (Ark), our new measurement architecture. By December, we had deployed 13 Ark monitors worldwide which provided a more representative IPv4 topology census. We added automated DNS reverse lookups of IP addresses discovered in probing.

We created a visualization illustrating the current status of IPv4 address space utilization. This map uses the IPv4 census taken by the LANDER project, which probed every IPv4 address with an ICMP echo request (ping) packet. Since some hosts do not respond to the probes due to firewalls, NAT boxes, and ICMP filtering, the scan data give a lower bound on IPv4 address utilization.

We extended our dK-series method that accounts for link and node attributes in graphs representing real complex systems. The ability to describe correlations of node or link attributes, not just degrees, enhances the set of tools available to network researchers and other disciplines using the dK-series method. We applied the dK-series method to a wide variety of available topologies of complex networks and found that all these networks are 3K-random at most. A paper is in preparation.
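As a rough illustration of what the lowest members of the dK-series measure, the sketch below computes two statistics from an edge list. It assumes the definitions used in the published dK-series work, which this report does not restate: 1K is the node degree distribution and 2K is the joint degree distribution over links. The function names are hypothetical, and the sketch covers neither 3K statistics nor annotations.

```python
from collections import Counter


def degree_sequence(edges):
    """Map each node to its degree in an undirected edge list."""
    deg = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return deg


def one_k(edges):
    """1K statistic: number of nodes having each degree k."""
    return Counter(degree_sequence(edges).values())


def two_k(edges):
    """2K statistic: number of links joining nodes of degrees (k1, k2), k1 <= k2."""
    deg = degree_sequence(edges)
    return Counter(tuple(sorted((deg[u], deg[v]))) for u, v in edges)


# Toy graph (hypothetical): a triangle a-b-c with a pendant node d on a.
edges = [("a", "b"), ("a", "c"), ("a", "d"), ("b", "c")]
print(one_k(edges))  # one node of degree 3, two of degree 2, one of degree 1
print(two_k(edges))  # two (2, 3) links, one (1, 3) link, one (2, 2) link
```

Two graphs with the same 2K counts also share the same 1K counts, which is the sense in which each level of the series constrains a graph more tightly than the one before it.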

We investigated techniques to generate annotated Internet graphs of different sizes based on existing observations of Internet characteristics and reproducing the original structure at larger scale. We began development of methods to create a special case of annotated graphs -- dual AS-router Internet graphs -- by gluing statistics extracted from traceroute data.

Major Milestones

- Improving topology measurements

- On September 12, 2007, we began collecting data on the new Ark platform.

- By year's end, 13 Ark monitors were collecting the IPv4 Routed /24 Topology Dataset.

- Complemented traceroute topology probing with DNS reverse lookups of the discovered IP addresses.

- Posted a map of IPv4 address space utilization.

- dK-Series and dK-Annotations

- Developed dK-annotations methodology that treats annotations as an extended correlation profile of a network.

- Submitted a paper "Graph Annotations in Modeling Complex Network Topologies" by X. Dimitropoulos, D. Krioukov, A. Vahdat, and G. Riley to ACM Transactions on Modeling and Computer Simulation (TOMACS).

- Analyzed known complex networks in terms of dK-series correlations and found that all these networks are 3K-random at most.

- Topology modeling

- Introduced a generic formalism for generation of annotated graphs of arbitrary sizes.

- Developed a new topology generator.

- Published "Orbis: Rescaling Degree Correlations to Generate Annotated Internet Topologies", in SIGCOMM '07, August 27-31, 2007, Kyoto, Japan by P. Mahadevan, C. Hubble, A. Vahdat, D. Krioukov, and B. Huffaker

- Began to work on constructing dual AS-router topologies of the Internet.

- Ongoing data releases

- We make publicly available a number of topology datasets.

- the adjacency matrix of the Internet AS-level graph computed daily from skitter measurements.

- AS relationship repository where we archive, on a weekly basis, the complete Internet AS-level topologies enriched with AS relationship information for every pair of AS neighbors;

- weekly updates of AS-ranking data

- daily files of the DNS annotations to the core traceroute data

Student Involvement

The following graduate students participated in this research and were responsible for various tasks accomplished:

A. Jamakovic (TU Delft) applied the dK-series methodology to study randomness of various complex networks.

P. Mahadevan (UCSD) worked on rescaling Internet graphs.

DNS

Improving the Integrity of Domain Name System (DNS) Monitoring and Protection

Goals

Supported by the NSF grant SCI-0427144, CAIDA supplies the research community with DNS measurement data, tools, models, and analysis methodologies for use by DNS operators and researchers.

Activities

During the third year of the DNS project we acquired an unprecedented collection of data from root DNS servers and made that data available to the research community via the DNS-OARC. Academic researchers can participate in the DNS-OARC for free.

- Measurements of traffic at the DNS Root Servers

In collaboration with ISC and OARC, we held the second large-scale simultaneous "Day in the Life of the DNS Root Servers" data collection event on January 9-10, 2007. We captured tcpdump traces at nearly all anycast instances of the C, E, F, K, and M root servers and from two alternative Open Root Server Network (ORSN) servers. This unique dataset represents the most comprehensive measurements of the root servers and affords researchers an unprecedented insight into the specifics of root server operations. We published a summary of the collection event, cataloged the data into DatCat and presented a comparison of traffic from the DNS root nameservers in DITL 2006 and 2007. We updated our influence map visualization that illustrates the geographical diversity of DNS root server clients and differences in deployment strategies of different root server nodes.

Based on our experience with simultaneous multi-site measurements of DNS traffic, we created dnscap, a DNS trace collection utility, to streamline and standardize collection efforts. This program collects DNS packets via libpcap instead of tcpdump. It enables automatic output file rotation, which prevents files from being accidentally overwritten, and automatic filtering of DNS-only messages, which reduces file sizes and, correspondingly, storage requirements. The program can also decode IPv6 packets. We will recommend that participating sites use dnscap for future global DNS measurements.

- Other DNS measurements

We implemented automated bulk DNS reverse lookups into our topology data collection process.

We continued our open resolvers survey and posted daily reports that identify open DNS resolvers. These resolvers represent a dangerous vulnerability to Internet users since they allow resource squatting, are easy to poison, and can be used in widespread Distributed Denial of Service (DDoS) attacks. We also studied the prevalence of open resolvers for .GOV and .MIL domains.

In collaboration with Prof. N. Brownlee (University of Auckland (UA), New Zealand), we maintained NeTraMet traffic meters installed at various locations in the US, New Zealand, and Japan, and continued monitoring requests to, paired with responses from, root/gTLD servers generated by large campus/enterprise networks. The resulting longitudinal dataset is available for researchers. It contains information useful for evaluating performance conditions and trends on the global Internet, although note that DNS RTTs are influenced by several factors, including remote server load, network congestion, route instability, and local effects such as link or equipment failures. We also collected DNS responses at the UA Internet gateway, and analyzed them to detect historical trends and unusual or abusive behavior such as occurrences of typo squatter, fast flux, and spammers' domains. We published these results in a paper "Passive Monitoring of DNS Anomalies" by B. Zdrnja, N. Brownlee, and D. Wessels at DIMVA 2007.

We collected daily packet traces to the servers in the .CL anycast cloud and indexed them into DatCat. We used this data to produce animated maps of the DNS workload for the .CL Chilean ccTLD.

- Studies of DNS anycast deployment

In collaboration with NIC Chile, we conducted a controlled anycast switching experiment in which we observed the redistribution of load on anycast nodes of the Chilean DNS infrastructure following failure and subsequent recovery of an anycast node. The experiment consisted of three phases. First, we collected packets on every interface of all the nodes in the .CL anycast cloud. Next, we removed a node and watched how clients redirected their requests to the next available node. Finally, we reintroduced the removed node into the cloud and observed how the load rebalanced after recovery. The experiment lasted 6 hours in total, 2 hours for each phase.

As a result of the node shutdown, part of the load previously seen by the anycast cloud moved to the unicast server, probably selected by the clients based on a lower RTT. We found that the transition period following the node shutdown is surprisingly short, within one second, indicating high stability and reliability of .cl's DNS service. During node recovery we observed longer convergence times, on the order of 10-20 seconds, although clients were being served by other nodes throughout.

- Working with Prof. G. Riley (Georgia Tech University) and his students, we are conducting laboratory simulations to model the effectiveness of anycast deployment for DNS root servers in general. We started experiments simulating various network failure scenarios for both global and local server nodes in realistic Internet AS topologies derived from CAIDA and RouteViews data. Our objective is to determine the impact of BGP convergence on the stability and reliability of anycast deployment as well as to assess possible effects of anycast deployment on BGP.

- DNSSEC Performance Testbed

In collaboration with ISC we simulated DNSSEC performance using a dedicated laboratory testbed. First, we identified several candidate server computer configurations based on price and availability and measured the memory performance (bandwidth, latency, and cache performance) of each. On the basis of these measurements, we selected the HP 1 system (DL 140) to use for our testbed. Next, we benchmarked four operating systems on the selected hardware: FreeBSD (current version 6.2RC2), Solaris-10, Gentoo Linux (Version 2.6.17.9), and Windows.

Finally, we measured the performance of our system using both the recorded lookup request stream from a real name server and a generated hybrid stream augmented with database update requests. We investigated various DNSSEC extensions to the protocol and various OSes. In every case, we took measurements for a wide range of load magnitudes, increasing loads until the system collapsed in order to locate the crucial load limits. We concluded that commodity hardware with BIND 9 software is fast enough by a wide margin to run large production name service and serve queries from a signed zone, provided that it has enough physical memory to hold the zone data. The results are published in an ISC technical report, "A DNSSEC performance testbed design".

- DNS Statistics Collector (dsc) tool

We installed dsc to monitor various DNS servers at CAIDA, SDSC, and NIC Chile, verified the resources required to run this software and received useful operational feedback from new DSC users. Based on this input, we streamlined DSC installation and operational procedures, implemented the ability to store data in an SQL database back-end, and added support for DNS TCP traffic. These changes should improve DSC's usability and (we hope) popularity in the DNS research and operational communities.

Major Milestones

- DNS Root servers traces

- Collected traces from nearly all anycast instances of C, F, K, and M root servers on January 9-10, 2007.

- Presented a paper based on 2006 data, "Two days in the life of the DNS anycast root servers" by Z. Liu, B. Huffaker, M. Fomenkov, N. Brownlee, and k. claffy, at PAM 2007.

- Indexed the analyzed 2006 data into DatCat.

- Posted 2007 Influence map of DNS root servers.

- Data releases

- We make a number of DNS datasets available publicly or by request.

- four years of data on RTTs from several campuses to root/gTLD servers

- daily reports identifying open DNS resolvers

- OARC DNS root traces January 10-11, 2006

- database of reverse DNS lookups

- Other

- Organized and co-organized OARC and WIDE workshops covering topics of DNS measurements and research.

- Published a paper "Passive Monitoring of DNS Anomalies" by B. Zdrnja, N. Brownlee, and D. Wessels.

- Demonstrated feasibility of running production-scale DNSSEC enabled name service using commercially available hardware.

- Increased dsc tool capabilities and convenience of use.

Student Involvement

Ziqian Liu (a visiting student from China) presented results of his analysis of root servers data at PAM 2007.

Sebastian Castro (a visiting student from Chile) collaborated with CAIDA researchers to collect and analyze data in the NIC Chile DNS infrastructure. He has also become integral to the analysis of the data collected during the DITL 2007 event.

Talal Jaafar and Veena Raghavan, graduate students from Georgia Tech University, worked on laboratory simulations of the anycast deployment effectiveness.

Haven Hash, an undergraduate student from Washington State University, participated in DNS root server traffic analysis.

Jeff Terrel, a graduate student from UNC, assisted CAIDA researchers with building transaction-based models of client-server traffic.

Economics and Policy

Cooperative Measurement and Modeling of Open Networked Systems (COMMONS)

Goals

With support from Cisco Systems and the National Science Foundation, we continued activity on the COMMONS project. In this project we hope to conduct a scientific experiment that offers measurement technology and connectivity to a research and education backbone infrastructure in exchange for access to measurement of the resulting interdomain network and its costs. We believe this project offers a much needed resource to help chart the future of the Internet and a renewed telecommunications research agenda.

Activities

In early 2007 we published the final report of the December 2006 COMMONS workshop. The report concluded, "Through collaborative peering and rigorous data collection and analysis, the COMMONS Project facilitates both basic research and innovative improvements to the Internet." The data gathered via the COMMONS project will lend strategic significance to U.S. network research programs. We are still exploring funding and organizational options for expanding COMMONS project participation.

Major Milestones

In its first year, the COMMONS project reported the following major milestones.

- The first COMMONS participants

- We attached the first potential COMMONS participant providing passive data: ISC is providing real-time anonymized traffic reports and, in exchange for R&E network access, will provide vetted researchers with anonymized packet headers upon request.

- Austrian collaborators at Funkfeuer host an Ark monitor and have expressed interest in hosting a passive monitor for real-time report generation as well.

- Publication

- We published "The (Un)economic Internet", in IEEE Internet Computing to introduce a new track on Internet economics. Unfortunately we did not get any publishable content in the series, despite lots of editorial effort.

- Independently, we published the top ten things we have learned about network infrastructure economics so far.

- We published the COMMONS Workshop Final Report for the COMMONS Workshop held in December 2006.

- Kimberly Claffy presented on technology policy panels at Supernova and Freedom to Connect.

- Sascha Meinrath presented the COMMONS proposal at the TPRC 2007 workshop.

- We continued to provide input from our research to the OECD for several of their reports.

Student Involvement

We supervised an undergraduate student, Jennifer Hsu, who worked for eight weeks building a prototype genealogy of Internet infrastructure companies. This project didn't get too far, but we put the prototype up to hopefully inspire further attention to the need for such a delineation of history.

Infrastructure Projects

CONMI: Community-Oriented Network Measurement Infrastructure

Goals

The core objective of the "Community-Oriented Network Measurement Infrastructure" (CONMI) project is to deliver needed data sets to the scientific community studying the Internet, while facing the tremendous operational, economic, and policy barriers. CAIDA stewards both active and passive infrastructure to measure a wide cross-section of the Internet and collect and curate the resulting data. The CONMI project is partially supported by NSF grant (CRI 05-51542) "Toward Community-Oriented Network Measurement Infrastructure."

Activities

Our efforts during 2007 focused on the two tasks described in the 2005 NSF proposal: (i) implementing Archipelago, our state-of-the-art, community-oriented, active measurement infrastructure; and (ii) deploying monitors capable of collecting passive traces on Internet links, including new monitors for OC192 backbone links and web pages displaying reports from publicly accessible realtime traffic monitors.

- i. Archipelago (Ark): A Coordination-Oriented Measurement Infrastructure

On September 12, 2007, our next generation active measurement infrastructure, Archipelago (Ark) began collecting its first production IPv4 Routed /24 Topology Dataset as part of the Macroscopic Topology Project. Archipelago makes use of measurement nodes located in various networks worldwide that connect via the Internet to a central server located at CAIDA. Ark achieves greater scalability and flexibility than the previous skitter-based measurement infrastructure, including better mechanisms for (automated) download by researchers. Though we designed Ark with macroscopic topology as its first target application using WAND's scamper tool for topology probing to large numbers of IPv4 and IPv6 addresses, CAIDA intends for Ark to eventually support a variety of network measurement experiments as needed by researchers.

Attention to the measurement coordination facility in Ark allows many pieces of the infrastructure to work efficiently toward a common goal, and will eventually allow collaborative use of the infrastructure by different researchers. Archipelago uses Marinda, a coordination facility inspired by David Gelernter's tuple-space based Linda coordination language. Archipelago extends Gelernter's tuple space model with features needed to support a globally distributed measurement infrastructure that hosts and stores heterogeneous measurements by a community of researchers.
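The tuple-space idea behind Linda-style coordination can be sketched in a few lines. The sketch below is not Marinda and does not reflect its API; it is a single-process toy that borrows the classic Linda operation names (`out` to write, `rd` to read, `in` to read and remove) and uses a hypothetical `ANY` wildcard for template matching.

```python
import threading

ANY = object()  # hypothetical wildcard marker used in templates


class TupleSpace:
    """A toy in-memory tuple space with Linda-style out/rd/in operations."""

    def __init__(self):
        self._tuples = []
        self._cond = threading.Condition()

    def out(self, tup):
        """Write a tuple into the space and wake any waiting readers."""
        with self._cond:
            self._tuples.append(tuple(tup))
            self._cond.notify_all()

    @staticmethod
    def _matches(template, tup):
        # A template matches when lengths agree and every field is
        # either the wildcard or equal to the tuple's field.
        return len(template) == len(tup) and all(
            t is ANY or t == v for t, v in zip(template, tup)
        )

    def _find(self, template):
        for tup in self._tuples:
            if self._matches(template, tup):
                return tup
        return None

    def rd(self, template, timeout=None):
        """Return a matching tuple without removing it (None on timeout)."""
        with self._cond:
            self._cond.wait_for(lambda: self._find(template) is not None, timeout)
            return self._find(template)

    def in_(self, template, timeout=None):
        """Remove and return a matching tuple (None on timeout)."""
        with self._cond:
            self._cond.wait_for(lambda: self._find(template) is not None, timeout)
            tup = self._find(template)
            if tup is not None:
                self._tuples.remove(tup)
            return tup


space = TupleSpace()
space.out(("probe", "192.0.2.0/24", "monitor-1"))
print(space.rd(("probe", ANY, ANY)))           # tuple stays in the space
print(space.in_(("probe", ANY, "monitor-1")))  # tuple is removed
print(space.in_(("probe", ANY, ANY), timeout=0.01))  # None: nothing left
```

The appeal for a measurement infrastructure is decoupling: a producer of work and a consumer of work never address each other directly, they only agree on the shape of the tuples they exchange.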

- ii. Deployment of Passive Monitors for Trace Collection on Backbone Links

During 2007, CAIDA identified potential locations for hosting both new and repurposed monitors capable of capturing packet header traces on high-speed R&E and commodity backbone links as well as serving publicly available traffic reports. Candidate locations included tier 1 service providers, Internet exchange points, and R&E organizations including Internet2, CENIC, NASA-Ames, FrontRange GigaPoP, North Carolina R&E Network, and Purdue University. We had some success with AMPATH, an exchange point in Miami, Florida, which facilitates peering between U.S. and international R&E networks. On one of their OC12 links, we collected packet header traces that we make available via the CAIDA Anonymized 2007 Internet Traces Dataset. Additionally, we captured real-time traffic reports from February 2007 to March 2008, when the OC12 link was decommissioned.

CAIDA also made progress with a commercial tier 1 ISP with hardware located at Equinix's data centers where we worked toward hosting newly designed, purchased, configured, and tested monitors capable of tapping commercial backbone links at OC192/10GE interfaces.

Locally, CAIDA worked on updating operating systems and retooling hardware to build monitors to tap UCSD's own campus links that carry commodity Internet and R&E (CENIC) network traffic. We hope to have these monitors deployed in 2008.

Major Milestones

In its second year, the Ark project reported the following milestones.

- Implementation and Deployment of Active Infrastructure (Ark)

- Young Hyun completed the development of the core facilities for communication and coordination of Ark experiments.

- We developed, implemented, tested and executed procedures for remote upgrades of legacy NLANR AMP and CAIDA skitter machines to repurpose for Ark. By the end of 2007 we had deployed thirteen Ark monitors conducting the team-probing experiment for topology discovery.

- We designed and implemented team-probing and, on September 12, 2007, began running it in production. Team-probing uses Ark's coordination facilities to parallelize execution and improve the dynamics of target IPv4 address destination selection. The application uses RouteViews (any large current BGP repository would work) to determine routable IP space, dividing it into /24 network prefixes. Each monitor in a team randomly picks an IP address within each routable /24 and conducts a traceroute using scamper (see below). Increasing the number of monitors can reduce the cycle times and increase the number of traces attempted for each destination /24. Load is distributed across teams and monitors based on resource availability.

- The team-probing application successfully used WAND's scamper as its primary active measurement topology tool. Developed by Matthew Luckie, it supports a variety of probing methods, path MTU discovery, and storage of detailed meta-data. CAIDA worked closely with Matthew to help define and test the feature set for scamper as well as track down and fix bugs. Based on analysis by Matthew on which traceroute methods were optimal for reaching the target IP addresses, we switched the team-probing methodology from UDP to ICMP + Paris traceroute.

- Completed supporting software for Ark: team-driver to execute and manage the measurements on the monitors, ark-collector to download files, and various scripts to process and archive files. All tools for managing collected data emphasize scalability and fault tolerance.

- Deployment of Passive Measurement Infrastructure


- Brought online the CoralReef Report Generator for real-time monitoring of Miami FIU-AMPATH.

- Publications


- Young Hyun presented Archipelago: A Coordination-Oriented Measurement Infrastructure to UCSD CSE, April 2007.
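The target selection step of team-probing described in the Ark milestones above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not CAIDA's implementation: the function names (`routable_slash24s`, `pick_target`, `assign_targets`), the example prefixes, and the monitor names are hypothetical; prefixes more specific than /24 are simply skipped; and the round-robin split stands in for Ark's availability-based load distribution, which this report does not detail.

```python
import ipaddress
import random


def routable_slash24s(bgp_prefixes):
    """Yield each distinct /24 covered by the given routable IPv4 prefixes."""
    seen = set()  # BGP tables contain overlapping prefixes, so deduplicate
    for text in bgp_prefixes:
        net = ipaddress.ip_network(text, strict=False)
        if net.version != 4 or net.prefixlen > 24:
            continue  # assumption: ignore IPv6 and prefixes longer than /24
        for slash24 in net.subnets(new_prefix=24):
            if slash24 not in seen:
                seen.add(slash24)
                yield slash24


def pick_target(slash24, rng):
    """Pick one random address inside a /24 as the traceroute destination."""
    return slash24.network_address + rng.randrange(256)


def assign_targets(bgp_prefixes, monitors, rng=None):
    """Split the /24s across a team of monitors (round-robin, hypothetical)."""
    rng = rng or random.Random()
    plan = {monitor: [] for monitor in monitors}
    for i, slash24 in enumerate(routable_slash24s(bgp_prefixes)):
        monitor = monitors[i % len(monitors)]
        plan[monitor].append(pick_target(slash24, rng))
    return plan


if __name__ == "__main__":
    # Documentation prefixes stand in for a real BGP table dump.
    prefixes = ["192.0.2.0/24", "198.51.100.0/23"]
    for monitor, targets in assign_targets(prefixes, ["mon-a", "mon-b"]).items():
        print(monitor, [str(t) for t in targets])
```

With more monitors in the team, each monitor receives fewer /24s per cycle, which is the mechanism by which adding monitors can shorten cycle times.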

PREDICT: Network Traffic Data Repository to Develop Secure IT Infrastructure

Goals

The Protected Repository for the Defense of Infrastructure against Cyber Threats (PREDICT) was designed to provide sensitive security datasets to qualified researchers, while preserving privacy and preventing data misuse. PREDICT offers a secure technical and policy framework to process applications for data sharing from network providers, which includes tools for collection, processing, and hosting of data that will eventually be available through the program as well as secured infrastructure to support serving datasets to researchers.

Activities

CAIDA's involvement in the effort includes assisting with: the technical framework to process applications for data; development of communications between Data Providers, Data Hosting Sites, and Researchers; collection and processing of data that will eventually be available through the program; developing and deploying infrastructure to allow CAIDA to serve datasets to researchers; and providing input for the development of a legal framework to support the project. In 2007, CAIDA helped finalize Memoranda of Agreement to allow CAIDA to participate in the launch of the PREDICT program as a data provider and a data hosting site, serving data containing passive traces of backbone peering links, denial-of-service backscatter data, Internet worm data, Network Telescope data, and IP topology data to approved researchers.

Major Milestones

- CAIDA becomes a Data Provider and Data Host


- UCSD signed the PREDICT Memorandum of Agreement (MoA) to become both a Data Provider and Data Host.

- Uploaded Metadata to PREDICT Portal for Researchers


- Submitted four quarters of the Denial-of-Service (DoS) Backscatter Datasets.

- Submitted new dataset mapping IP prefixes to Internet Autonomous Systems (ASes).

- OC192 Passive Monitors


- CAIDA installed, configured, and tested four OC192 monitors in preparation for deployment.

DatCat: Internet Measurement Data Catalog

Goals

With original funding from NSF grant OCI-0137121, "Correlating Heterogeneous Measurement Data to Achieve System-Level Analysis of Internet Traffic Trends", CAIDA built an Internet Measurement Data Catalog (IMDC) that facilitates access, archiving, and long-term storage of Internet data as well as sharing of metadata among Internet researchers. Since its launch in June 2006, the catalog has received contributions of metadata indexing nearly 19TB of data. Funding for the project ended in 2006, so we are currently seeking funding to maintain and extend the catalog.

CAIDA had three goals for the creation of the IMDC database:

- provide a searchable index of Internet data available for research

- enhance documentation of datasets via a public annotation system

- enable reproducible research in network science

Activities

- 1st DatCat Community Contribution (DCC 1) Workshop


We created this workshop series, and hosted the 1st DatCat Community Contribution (DCC 1) Workshop, to provide community members with the resources they need to contribute metadata to DatCat. At the time of writing, the First DatCat Community Contribution Workshop (DCC 1) Collection contains 1,239 files from 9 subcollections and 4 publications.

- Development of the Internet Measurement Data Catalog: DatCat Service


CAIDA's continued DatCat development efforts resulted in the addition of new features as well as improvements to performance, interface, and the submission process, as detailed in the list of milestones below.

Major Milestones

- New Contributions of Metadata


- During 2007, the DatCat catalog received 70 entries documenting the metadata for collections and publications.

Highlights include:

- Collection: Day in the Life of the Internet, January 10-11, 2006 (DITL-2006-01-10) and Collection: Day in the Life of the Internet, January 9-10, 2007 (DITL-2007-01-09)

- Collection: CRAWDAD datasets

- Collection: SUNET OC192 Traces


- New Features in DatCat


- Added:

- reserved handles

- collection staging, review, and activation

- nested collections

- support for publications

- built-in support for bibtex entries

- "20 Most Recently Contributed Collections" page

- automated data analyzer to assist with indexing and submission

- mailing lists

- Improved:

- site navigation, including drop-down menu bars

- multi-keyword search and better sorting and display of search results

- backend database upgraded to Oracle RAC cluster

- handling of database connection errors

- performance, especially in searches

- submission tools, templates and GUI interface

- documentation of submission process

- access to download links, now placed directly on the data detail page


National Laboratory for Applied Network Research (NLANR) Resource Stewardship

Goals

The National Laboratory for Applied Network Research (NLANR) Project officially ended February 28, 2006. The funding for the NLANR project expired June 30, 2006 and the National Science Foundation discontinued support. In July 2006 CAIDA took over operational stewardship for all NLANR machines and data.

Over the last year, CAIDA confirmed our belief that some of the NLANR data and resources still offer value to the network science, research, and development communities, and might help support other forms of research not envisioned in the original NLANR proposal. During 2007 CAIDA completed a careful audit, gracefully decommissioned obsolete hardware and data, and worked to apply most of the remaining resources to projects of similar intent and spirit. As we performed this audit, we kept the community informed of changes to operations of the machines at the sites. Hosting sites have the prerogative at any time to decline participation in the reincarnation of any NLANR equipment at their site. During the last year, we worked with numerous NLANR hosting sites to improve our Acceptable Use Policy and repurpose select hardware for the Archipelago Project. Since July 2006 CAIDA has also directly decommissioned over 100 NLANR hosts not viable for redeployment, and continues to work with sites whose equipment is unreachable and/or that we plan to physically remove from service.

NLANR Resources

- The NLANR.NET Domain and Services

CAIDA now hosts the NLANR.NET domain, originally registered by Hans-Werner Braun, as well as eleven servers used to serve the various mail, web, application, and database services for the project. CAIDA hopes to preserve the NLANR.NET domain and provide best effort on maintenance to hardware that serves scientifically relevant data collected by the Active Measurement Project (AMP) and Passive Measurement and Analysis (PMA) projects.

- PMA Project Resource Reuse

CAIDA worked with eight sites to repurpose potentially viable passive monitoring equipment at: AMPATH, Front Range GigaPop, the Pittsburgh Supercomputing Center, Purdue University, SDSC, NASA-Ames, Indiana University, and the Corporation for Education Network Initiatives in California (CENIC).

- AMPATH:

On January 9, 2007, as part of the Day In the Life of the Internet Project, CAIDA collected bidirectional packet header traces with no payload from an OC12 ATM link on the AMPATH International Internet Exchange. Additionally, CAIDA collected and published near-real-time aggregated statistics of the link traffic using the CoralReef software suite and its built-in report generator.

- Front Range GigaPop:

We spent much of 2007 trying to get approval of our Acceptable Use Policy (AUP) from the University of Colorado and Front Range GigaPop. After review of the CAIDA AUP by UCAR legal, they declined our offer to repurpose the GigE passive monitor due to privacy concerns and possible conflicts with UCAR policy. We will continue to work with this site to ship back the DAG card and potentially repurpose the machine as part of the Archipelago infrastructure.

- The Pittsburgh Supercomputing Center:

In 2007, PSC rearchitected their core network infrastructure and upgraded the OC48 link that NLANR had previously monitored. CAIDA received the hardware back from PSC and may redeploy it.

- Purdue University:

While seeking acceptance of the AUP at Purdue, our collaborator initially met resistance from a new network privacy review board. Educating the institutional review boards (IRBs) of university campuses has been the key challenge in repurposing passive monitors. The chair of the IRB committee at Purdue declared that packet trace collection is not human-subjects research unless there is a specific attempt to study individual users. In the meantime, the GigE link that the previous monitor tapped has been upgraded to 10GE, and Purdue cannot afford a 10GE monitor. We will continue to work with Purdue on possible approaches to monitoring and reporting on campus traffic.

- SDSC:

Locally, CAIDA worked on updating operating systems and retooling hardware to build monitors to tap UCSD's own campus links that carry commodity Internet and R&E (CENIC) network traffic. We hope to have these monitors deployed in 2008.

- Indiana University:

We shipped and received the eight machines previously connected to a router located at the Indianapolis Internet2 site. The machines contained six DAG cards capable of measuring OC192/10Gb links. Of these six cards, three arrived in working order. We plan to redeploy this equipment on a commercial Internet backbone link.

- The Corporation for Education Network Initiatives in California:

From CENIC in Los Angeles we received shipment of the LambdaMON optical network tap. The system contained two DAG cards capable of monitoring OC192/10Gb links. CAIDA hopes to redeploy this equipment on a backbone link of the Internet2 network.


- AMP Project Resource Reuse

CAIDA started the year with a target of approximately 30 to 40 NLANR AMP sites with hardware and/or geographic locations that we hoped to redeploy as part of the Archipelago infrastructure to support CAIDA's Macroscopic Topology Measurement Project. In 2007 we repurposed nine AMP sites now collecting measurements as part of the Ark infrastructure. An additional 13 sites accepted our Acceptable Use Policy (AUP) and 17 more are still in the process of review. Check the map on our web site for the most recent status of Ark.

Further Requests for Information

CAIDA will happily provide more specific details regarding the status of any of the NLANR sites and data. If sites have questions or comments on these changes, or input regarding future measurement experiments, please send the queries to nlanr-info at caida dot org.

Tools

CAIDA's mission includes providing access to tools for Internet data collection, analysis and visualization to facilitate network measurement and management. However, CAIDA does not receive specific funding for support and maintenance of the tools we develop. Please check our home page for a complete listing and taxonomy of CAIDA tools.

2007 Tool Development

CoralReef

The CoralReef software suite, developed by CAIDA, provides a comprehensive software solution for data collection and analysis from passive Internet traffic monitors, in real time or from trace files. Real-time monitoring support includes system network interfaces (via libpcap) and FreeBSD drivers for a number of network capture cards, including the popular Endace DAG (10GE/OC192, POS and ATM) cards. The package also includes programming APIs for C and Perl, and applications for capture, analysis, and web report generation. This package is maintained by CAIDA developers with the support and collaboration of the Internet measurement community.

We also updated and improved a realtime traffic report generator that allows dynamic selection of displayed reports. We released CoralReef version 3.8.2 late in 2007.

DSC

DSC provides a system for collecting and exploring statistics from busy DNS servers. It currently has two major components: the Collector and the Presenter. During 2007, under contract to CAIDA, The Measurement Factory released two new versions of the dsc software.

CAIDA Tools Download Report

The tables below list all the CAIDA-developed tools distributed via our home page at https://catalog.caida.org/software and the number of downloads of each tool during 2007.


Currently Supported Tools

| Tool | Description | Downloads |
|---|---|---|
| coralreef | A software suite to collect and analyze data from passive Internet traffic monitors. | 903 |
| dsc | A system for collecting and exploring statistics from DNS servers. | 1310 |
| dnsstat | An application that collects DNS queries on UDP port 53 to report statistics. | 220 |
| dnstop | A libpcap application that displays tables of DNS traffic. | 8493 |
| sk_analysis_dump | A tool for analysis of traceroute-like topology data. | 385 |
| walrus | A tool for interactively visualizing large directed graphs in 3D space. | 3085 |
| libsea | A file format and a Java library for representing large directed graphs. | 390 |
| Chart::Graph | A Perl module that provides a programmatic interface to several popular graphing packages. Note: Chart::Graph is also available on CPAN.org. The numbers here reflect only downloads directly from caida.org, as download statistics from CPAN are not available. | 253 |
| plot-latlong | A tool for plotting points on geographic maps. | 282 |

Past Tools (Unsupported)

| Tool | Description | Downloads |
|---|---|---|
| Mapnet | A tool for visualizing the infrastructure of multiple backbone providers simultaneously. | 17087 |
| GeoPlot | A lightweight Java applet that creates a geographical image of a data set. | 1071 |
| GTrace | A graphical front-end to traceroute. | 977 |
| otter | A tool used for visualizing arbitrary network data that can be expressed as a set of nodes, links or paths. | 539 |
| plotpaths | An application that displays forward and reverse network path data. | 127 |
| plankton | A tool for visualizing NLANR's Web Cache Hierarchy. | 1096 |

Data

In 2007, CAIDA continued its data collection activities: collection of Macroscopic Topology measurements and passive collection at the DNS root nameservers and the UCSD Network Telescope. We also developed several additional datasets derived from data we collected and processed to increase its utility to researchers. These datasets include our AS Rank, AS adjacencies, and Router adjacencies datasets, all publicly available in 2007, as well as several Backscatter datasets. While some CAIDA data is available to anyone without restriction, CAIDA makes a subset of its collected data available only to academic researchers and CAIDA members, with data access subject to Acceptable Use Policies (AUP) designed to protect the privacy of monitored communications, ensure security of network infrastructure, and comply with the terms of our agreements with data providers.

Major Milestones

- We tuned our CoralReef suite of data collection tools to generate highly accurate traffic summaries for high-speed (OC48, 10GigE, OC192) links in real time.

- We got the first data from the ampath-oc12 passive monitor, located at the AMPATH Internet Exchange in Miami, FL.

- We started collecting the first production data from the Archipelago measurement infrastructure.

Data Collected in 2007

| Data Type | File count | First date | Last date | Total size (compressed) | Total size (uncompressed) |
|---|---|---|---|---|---|
| Macroscopic Topology Measurements (skitter) | 7375 | 2007-01-01 | 2007-12-31 | N/A | 456 GB |
| Macroscopic Topology Measurements (Archipelago) | 1254 | 2007-09-13 | 2007-12-31 | 30.34 GB | 95.0 GB |
| Network Telescope | 17821 | 2007-01-01 | 2007-12-31 | 6.44 TB | 23.1 TB |
| A Day In The Life (DITL) of the Internet - OARC 2007 | 9949 | 2007-01-09 | 2007-01-10 | N/A | 800 GB |
| Passive Data Measurements (AMPATH) | 102 | 2007-01-09 | 2007-01-10 | 17.9 GB | 52.6 GB |
| DNS root/gTLD RTT Dataset | 1076 | 2007-01-01 | 2007-12-31 | N/A | 1.27 GB |
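For readers planning storage for these datasets, the compression ratios implied by the table above can be derived as follows. This is a small sketch using only the rows that report both sizes; the dictionary layout and the `compression_ratio` helper are illustrative names, not part of any CAIDA tool.

```python
# (compressed, uncompressed) sizes copied from the table above.
# Each pair uses the same unit, so the ratio is unitless.
collected = {
    "Macroscopic Topology Measurements (Archipelago)": (30.34, 95.0),  # GB
    "Network Telescope": (6.44, 23.1),                                 # TB
    "Passive Data Measurements (AMPATH)": (17.9, 52.6),                # GB
}


def compression_ratio(compressed, uncompressed):
    """Return how many times larger the data is once uncompressed."""
    return uncompressed / compressed


ratios = {name: compression_ratio(*sizes) for name, sizes in collected.items()}

if __name__ == "__main__":
    for name, ratio in ratios.items():
        print(f"{name}: {ratio:.2f}x")
```

All three datasets expand by roughly a factor of three when uncompressed.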

Data Distributed in 2007

We process the raw data into specialized datasets to increase its utility to researchers and to satisfy security and privacy concerns. In 2007, we released the following datasets:


Publicly Available Data

These datasets require that users agree to an Acceptable Use Policy, but are otherwise freely available.

| Dataset | Unique visitors (IPs) | Data Downloaded | Notes |
|---|---|---|---|
| AS Rank | 2,494 | 6.62 GB | |
| AS Links (AS Adjacencies) | 367 | 2.00 GB | |
| AS Relationships | 643 | 6.27 GB | |
| Router Adjacencies | 376 | 1.08 GB | |
| Witty Worm Dataset | 254 | 597 MB | |
| AS Taxonomy | 122 | 69.6 MB | * |
| Code-Red Worms Dataset | 101 | 179 GB | |

Crawler/Robot traffic is excluded from these numbers. This traffic amounts to up to 50% of total traffic volume to our data servers.

\* The AS Taxonomy dataset is included in a mirror of the GA Tech main AS Taxonomy site, and thus these numbers do not represent all access to this data.


Restricted Access Data

These datasets require that users:

- be academic or government researchers, or join CAIDA;

- request an account and provide a brief description of their intended use of the data; and

- agree to an Acceptable Use Policy.

| Dataset | Unique visitors (usernames) | Data Downloaded |
|---|---|---|
| Raw Topology Traces (skitter) | 51 | 2.86 TB |
| | 3 | 14.8 GB * |
| Anonymized OC48 Peering Link Traces | 77 | 68.1 TB ** |
| 2003 Internet Topology Data Kit | 54 | 38.4 GB |
| Backscatter Datasets | 29 | 717 GB |
| Witty Worm Dataset | 15 | 660 GB |
| DNS Root/gTLD server RTT Dataset | 6 | 3.14 GB |

\* A small number of users had access to this data in 2007, before its official release in 2008.

\*\* While this number represents the cumulative size of all data downloaded by users, we know it is heavily affected by users downloading a single data file multiple times. This is likely caused by users using browsers instead of specialized retrieval tools like wget.


Restricted Access Data Requests

The table below shows how many requests for data access we received and how many we granted.

| Dataset | Number of requests received | Number of requests granted access |
|---|---|---|
| Anonymized OC48 Peering Link Traces | 103 | 68 |
| Active Topology Trace Datasets | 99 | 66 |
| Backscatter Datasets | 68 | 39 |
| Witty Worm Dataset | 18 | 12 |
| DNS Root/gTLD server RTT Dataset | 15 | 7 |
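The request counts above translate into per-dataset grant rates. The short sketch below derives them from the figures in this section; the variable and function names are illustrative only, and the report itself does not state these percentages.

```python
# (requests received, requests granted) copied from the table above.
requests = {
    "Anonymized OC48 Peering Link Traces": (103, 68),
    "Active Topology Trace Datasets": (99, 66),
    "Backscatter Datasets": (68, 39),
    "Witty Worm Dataset": (18, 12),
    "DNS Root/gTLD server RTT Dataset": (15, 7),
}


def grant_rate(received, granted):
    """Fraction of received requests that were granted access."""
    return granted / received


rates = {name: grant_rate(*counts) for name, counts in requests.items()}
total_received = sum(received for received, _ in requests.values())
total_granted = sum(granted for _, granted in requests.values())
overall_rate = grant_rate(total_received, total_granted)

if __name__ == "__main__":
    for name, rate in rates.items():
        print(f"{name}: {rate:.1%}")
    print(f"Overall: {total_granted}/{total_received} = {overall_rate:.1%}")
```

Across all five datasets, 192 of 303 requests were granted, or roughly 63%.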


NLANR Datasets

This data was collected by the NLANR project. When this project came to an end in July 2006, CAIDA inventoried NLANR equipment and took over curation and distribution of NLANR data. CAIDA now maintains both the NLANR AMP and PMA public data repositories. Our efforts of serving this data are currently unfunded and we plan to cease serving this data in May 2009. For sponsorship or taking over hosting responsibility for this data please contact nlanr-info@caida.org.

| Dataset | Unique visitors (IPs) | Data Downloaded |
|---|---|---|
| PMA Traffic Traces | 4149 | 125 TB |
| AMP Topology Traces | 122 | 9.01 GB |

Crawler/Robot traffic is excluded from these numbers. This traffic amounts to up to 50% of total traffic volume to our data servers.

Workshops

As part of our mission to investigate both practical and theoretical aspects of the Internet, CAIDA staff actively attend, contribute to, and host workshops relevant to research and better understanding of Internet infrastructure, trends, topology, routing, and security. Last year, CAIDA hosted the 1st DatCat Community Contribution Workshop, the 8th CAIDA-WIDE Workshop, and the 3rd DNS-OARC Workshop.

CAIDA staff presented at AT&T Research, NSF's CRI PI meeting, the Internet Security Operations and Intelligence (ISOI) workshop, the TERENA Networking Conference, Freedom to Connect 2007, Supernova 2007, NIST, IBM Cambridge, the University of Waikato and our own WIDE and DNS/OARC workshops described below.

Please check our web site for a complete listing of past and upcoming CAIDA Workshops.

1st DatCat Community Contribution Workshop

The 1st DatCat Community Contribution Workshop was held on March 12-14, 2007 (by invitation only) here at the San Diego Supercomputer Center in San Diego, CA. The Workshop provided community members with the resources they needed to contribute data to DatCat. The participants indexed numerous datasets and contributed to the First DatCat Community Contribution Workshop (DCC 1) collection.

8th CAIDA-WIDE Workshop

The 8th CAIDA/WIDE Workshop was held on July 20-21, 2007 (by invitation only) at the Electronic Visualization Laboratory at the University of Illinois, Chicago campus. The Workshop covered Internet measurement projects and DNS, as well as miscellaneous research and technical topics of mutual interest to CAIDA and WIDE participants.

3rd DNS-OARC Workshop

The 3rd DNS-OARC Workshop was held on November 2-3, 2007 in Los Angeles, CA. The focus of this workshop included DNS-related Internet measurements and research, a tutorial on DLV, operational co-ordination and trusted communication between root, TLD and other DNS and Internet operators, and the future evolution and governance of OARC. Participation was open to OARC members, presenters, by invitation, and to other parties interested in DNS research and operations subject to available space.

Publications

The following table contains the papers published by CAIDA for the calendar year of 2007. Please refer to Papers by CAIDA on our web site for a comprehensive listing of publications.

| Year | Author(s) | Title | Publication |
|---|---|---|---|
| 2007 | Krioukov, D.; claffy, k. | Navigability of Complex Networks | arXiv physics.soc-ph/.0303 |
| 2007 | Hubble, C.; Vahdat, A.; Krioukov, D.; Huffaker, B. | Orbis: Rescaling Degree Correlations to Generate Annotated Internet Topologies | SIGCOMM |
| 2007 | Krioukov, D.; Vahdat, A.; Riley, G. | Graph Annotations in Modeling Complex Network Topologies | arXiv cs.NI/.389 |
| 2007 | Brownlee, N.; Wessels, D. | Passive Monitoring of DNS Anomalies | DIMVA |
| 2007 | claffy, k.; Fall, K.; Brady, A. | On Compact Routing for the Internet | ACM SIGCOMM Computer Communications Review (CCR) |
| 2007 | Meinrath, S.; Bradner, S. | The (un)Economic Internet? | IEEE Internet Computing |
| 2007 | Huffaker, B.; Fomenkov, M.; claffy, k.; Brownlee, N. | Two Days in the Life of the DNS Anycast Root Servers | Passive and Active Network Measurement Workshop (PAM) |
| 2007 | Chung, F.; claffy, k.; Fomenkov, M.; Vespignani, A.; Willinger, W. | The Workshop on Internet Topology (WIT) Report | ACM SIGCOMM Computer Communications Review (CCR) |
| 2007 | Krioukov, D.; Fomenkov, M.; Huffaker, B.; Hyun, Y.; claffy, k.; Riley, G. | AS Relationships: Inference and Validation | ACM SIGCOMM Computer Communications Review (CCR) |

Presentations

The following table contains the presentations and invited talks published by CAIDA for the calendar year of 2007. Please refer to Presentations by CAIDA on our web site for a comprehensive listing.

Web Site Usage

In 2007, CAIDA's web site continued to attract considerable attention from a broad, international audience. Visitors seem to have particular interest in CAIDA's tools and analysis.

The table below presents the monthly history of traffic to www.caida.org for 2007.

| Month | Unique visitors | Number of visits | Pages | Hits | Bandwidth (GB) |

|---|---|---|---|---|---|

| Jan 2007 | 70,317 | 163,688 | 533,838 | 1,992,037 | 69.58 |

| Feb 2007 | 62,040 | 147,304 | 507,152 | 1,766,491 | 62.10 |

| Mar 2007 | 64,556 | 178,719 | 628,114 | 2,069,748 | 68.72 |

| Apr 2007 | 62,860 | 170,391 | 578,692 | 2,347,355 | 83.39 |

| May 2007 | 60,625 | 178,239 | 610,849 | 2,155,525 | 65.34 |

| Jun 2007 | 58,241 | 224,857 | 592,074 | 1,997,951 | 68.56 |

| Jul 2007 | 56,428 | 254,485 | 670,013 | 2,134,335 | 80.24 |

| Aug 2007 | 55,620 | 203,411 | 559,881 | 1,962,549 | 66.84 |

| Sep 2007 | 56,443 | 129,613 | 417,394 | 1,813,536 | 67.99 |

| Oct 2007 | 62,403 | 123,476 | 422,632 | 1,908,238 | 81.88 |

| Nov 2007 | 61,090 | 113,147 | 459,908 | 1,898,232 | 74.90 |

| Dec 2007 | 50,295 | 98,060 | 433,380 | 1,582,691 | 62.90 |

| Total | 720,918 | 1,985,390 | 6,413,927 | 23,628,688 | 852.47 |

Organizational Chart

CAIDA would like to acknowledge the many people who put forth great effort towards making CAIDA a success in 2007. The image below shows the functional organization of CAIDA. Please check the home page for more complete information about CAIDA staff.

![[Image of CAIDA Functional Organization Chart]](./images/caida_organization_2007.png)

CAIDA Functional Organization Chart

Funding Sources

CAIDA thanks our 2007 sponsors, members, and collaborators.

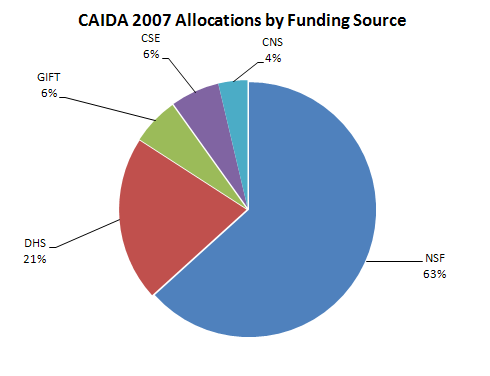

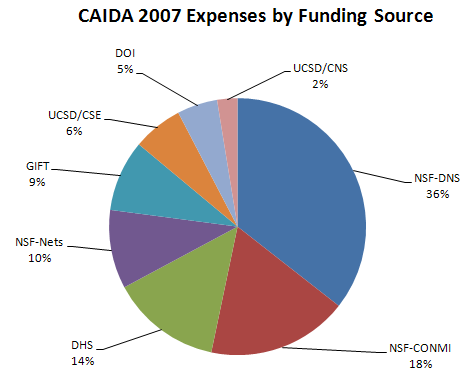

The charts below depict funds received by CAIDA during the 2007 calendar year.

| Funding Source | Allocations | Percentage of Total |

|---|---|---|

| NSF | $1,214,542 | 63% |

| DHS | $400,000 | 21% |

| GIFT | $115,000 | 6% |

| CSE | $118,632 | 6% |

| CNS | $70,909 | 4% |

| Total | $1,919,083 | 100% |

Figure 1. Allocations by funding source received during 2007.
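The percentage column in the table above can be reproduced from the allocation amounts. The sketch below recomputes it, rounding to whole percentages as the table appears to do; the dictionary layout and the `percent_of_total` helper are illustrative assumptions.

```python
# Allocation amounts in U.S. dollars, copied from the table above.
allocations = {
    "NSF": 1_214_542,
    "DHS": 400_000,
    "GIFT": 115_000,
    "CSE": 118_632,
    "CNS": 70_909,
}

total = sum(allocations.values())


def percent_of_total(amount, total):
    """Share of the total, rounded to the nearest whole percent."""
    return round(100 * amount / total)


shares = {source: percent_of_total(amount, total)
          for source, amount in allocations.items()}

if __name__ == "__main__":
    for source, share in shares.items():
        print(f"{source}: ${allocations[source]:,} ({share}%)")
    print(f"Total: ${total:,}")
```

The recomputed total and shares agree with the figures reported in the table.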

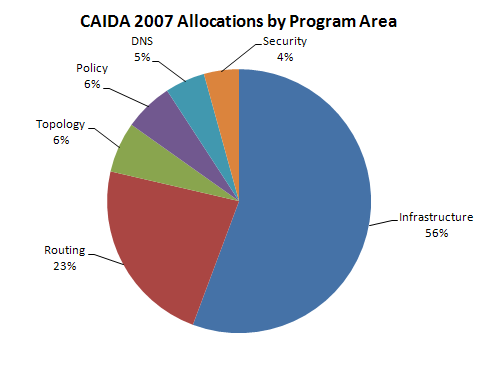

| Program Area | Allocations | Percentage of Total |

|---|---|---|

| Infrastructure | $1,068,788 | 56% |

| Routing | $439,999 | 23% |

| Topology | $118,990 | 6% |

| Policy | $115,000 | 6% |

| DNS | $93,755 | 5% |

| Security | $82,551 | 4% |

| Total | $1,919,083 | 100% |

Figure 2. Allocations by program area received during 2007.

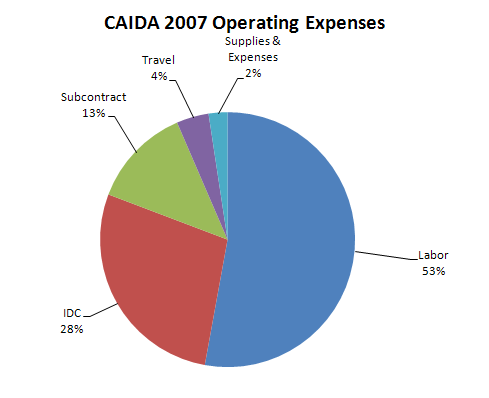

Operating Expenses

The charts below depict CAIDA's Annual Expense Report for the 2007 calendar year.

| Category | Description |
|---|---|
| LABOR | Salaries and benefits paid to staff and students |
| IDC | Indirect Costs paid to the University of California, San Diego including grant overhead (52-54%) and telephone, Internet, and other IT services. |
| SUBCONTRACTS | Subcontracts to the Internet Systems Consortium (ISC) and Georgia Institute of Technology |
| TRAVEL | Trips to conferences, PI meetings, operational meetings, and sites of remote monitor deployment. |
| SUPPLIES & EXPENSES | All office supplies and equipment (including computer hardware and software) costing less than $5000. |
| EQUIPMENT | Computer hardware or other equipment costing more than $5000. |
| TRANSFERS | Exchange of funds between groups for recharge for IT desktop support and Oracle database services. |

| Category | Expenses | Percentage of Total |

|---|---|---|

| Labor | $1,403,683 | 52.9% |

| IDC | $740,791 | 27.9% |

| Subcontract | $338,554 | 12.8% |

| Travel | $108,611 | 4.1% |

| Supplies & Expenses | $63,522 | 2.4% |

| Equipment | $0 | 0.0% |

| Transfers | -$1,995 | 0.0% |

| Total | $2,653,166 | 100.0% |

Figure 3. 2007 Operating Expenses

These numbers do not include salaries or expenses paid by the Computer Science & Engineering Department of the Jacobs School of Engineering at the University of California, San Diego.

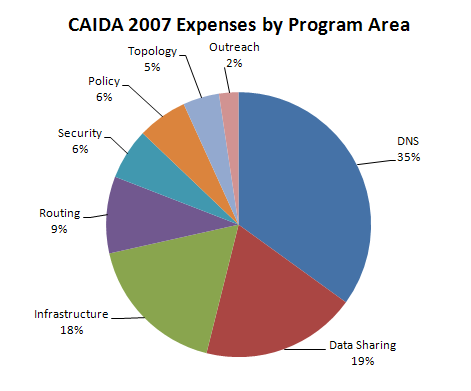

| Program Area | Expenses | Percentage of Total |

|---|---|---|

| DNS | $927,717 | 35.0% |

| Data Sharing | $502,287 | 18.9% |

| Infrastructure | $467,204 | 17.6% |

| Routing | $248,813 | 9.4% |

| Security | $165,345 | 6.2% |

| Policy | $161,271 | 6.1% |

| Topology | $117,414 | 4.4% |

| Outreach | $62,985 | 2.4% |

| Total | $2,653,036 | 100.0% |

Figure 4. 2007 Expenses by Program Area

| Funding Source | Expenses | Percentage of Total |

|---|---|---|

| NSF-DNS | $937,961 | 35.4% |

| NSF-CONMI | $467,127 | 17.6% |

| DHS | $366,275 | 13.8% |

| NSF-Nets | $261,462 | 9.9% |

| Gift | $236,649 | 8.9% |

| UCSD/CSE | $166,081 | 6.3% |

| DOI | $133,911 | 5.0% |

| UCSD/CNS | $67,568 | 2.5% |

| NSF-Trusted Comp. | $13,954 | 0.5% |

| NSF-Trends | $2,101 | 0.1% |

| UCSD/SDSC | $77 | 0.0% |

| Total | $2,653,166 | 100.0% |

Figure 5. 2007 Expenses by Funding Source