CAIDA's Annual Report for 2017

Mission Statement: CAIDA investigates practical and theoretical aspects of the Internet, focusing on activities that:

- provide insight into the macroscopic function of Internet infrastructure, behavior, usage, and evolution,

- foster a collaborative environment in which data can be acquired, analyzed, and (as appropriate) shared,

- improve the integrity of the field of Internet science,

- inform science, technology, and communications public policies.

Executive Summary

kc claffy receives the prestigious Postel Service Award from the Internet Society (Olaf Kolkman and Kathy Brown).


We lead with the two most exciting pieces of news. First, CAIDA celebrated its 20th anniversary this year! Perhaps no one, least of all us, thought we could keep it going this long, but each year seems to get better! Second, CAIDA director kc experienced the greatest honor of her career this year when she received the Internet Society's Postel Service Award!

On to this year's annual report, which summarizes CAIDA's activities for 2017 in the areas of research, infrastructure, data collection and analysis. Our research projects span Internet topology mapping, security and stability measurement studies (of outages, interconnection performance, and configuration vulnerabilities), economics, future Internet architectures, and policy. Our infrastructure, software development, and data sharing activities support measurement-based Internet research, both at CAIDA and around the world, with a focus on the health and integrity of the global Internet ecosystem.

Internet Performance Measurement. This year we leveraged our years of investment in topology measurement and analytic techniques to advance research on performance, reliability, resilience, security, and economic weaknesses of critical Internet infrastructure. We continued our study of interconnection congestion, which requires maintaining significant software, hardware, and data processing infrastructure for years to observe, calibrate and analyze trends. We also undertook several research efforts in how to identify and characterize different types of congestion and associated effects on quality of experience using a variety of our own and other (e.g., M-Lab) data.

Monitoring Global Internet Security and Stability. Our research accomplishments in Internet security and stability monitoring in 2017 included: (1) characterizing the Denial-of-Service ecosystems, and attempts to mitigate DoS attacks via BGP blackholing; (2) continued support for the Spoofer project, including supporting the existing Spoofer measurement platform as well as developing and applying new methods to expand visibility of compliance with source address validation best practices; (3) demonstrating the continued prevalence of long-standing TCP vulnerabilities on the global Internet; (4) new methods to identify router outages and quantify their impact on Internet resiliency; (5) a new project to quantify country-level vulnerabilities to connectivity disruptions and manipulations.

Future Internet Research. We continued our long-term studies of IPv6 evolution and of the adaptation of IPv4 technology to IPv4 address scarcity, including detecting Carrier-Grade NAT (CGN) in U.S. ISP networks, and completed an updated longitudinal study of IPv6 deployment. We pared down our participation in the NDN project while we wait for some NSF-funded code development to complete. We hope we will be able to use this software platform to evaluate NDN's use in secure data sharing scenarios.

Economics and Policy. We undertook two studies related to the political and economic forces influencing interconnection in Africa, as well as several other studies on the economic modeling of peering that we are determined to publish in 2018. We also held a lively workshop on Internet economics where we continued the discussion on what a future Internet regulatory framework should look like.

Infrastructure Operations. We continued to operate active and passive measurement infrastructure with visibility into global Internet behavior, and associated software tools that facilitate network research and security vulnerability analysis for the community. We also maintained data analytics platforms for Internet Outage Detection and Analysis (IODA) and BGP data analytics (BGPStream). We are excited about a new project we started late in 2017 (PANDA) to support integration of several of our existing measurement and data analytics platforms.

Outreach. As always, we engaged in a variety of outreach activities, including maintaining web sites, posting blog entries, publishing 14 peer-reviewed papers, 2 technical reports, and 2 workshop reports, making 31 presentations, and organizing 5 workshops (and hosting 4 of them). We also received several honors from the community: an IRTF Applied Networking Research Prize for our BGPStream work in March, and kc received the Postel Service Award in November!

This report summarizes the status of our activities; details about our research are available in papers, presentations, our blog, and interactive resources on our web sites. We also provide listings and links to software tools and data sets shared, and statistics reflecting their usage. Finally, we offer a "CAIDA in numbers" section: statistics on our performance, financial reporting, and supporting resources, including visiting scholars and students, and all funding sources.

Getting the next decade off to a hopefully auspicious start, CAIDA's new program plan for 2018-2022 is available at www.caida.org/about/progplan/progplan2018/. Please feel free to send comments or questions to info at caida dot org.

Research and Analysis

Internet Performance Measurement

This year we leveraged our state-of-the-art topology measurement and analytic techniques to advance research on performance, reliability, resilience, security, and economic weaknesses of critical Internet infrastructure. We undertook several research efforts in how to identify and characterize congestion and associated quality of experience from a variety of our own and other (e.g., M-Lab) data. We also pursued a collaboration to improve BGP convergence performance.

Interconnection congestion. We continued our collaboration with MIT's Computer Science and Artificial Intelligence Laboratory (MIT/CSAIL) on the NSF-funded MANIC project (Measurement and Analysis of Interdomain Congestion). For this project we are developing techniques to measure congestion on interdomain links between ISPs. We have now developed and operationalized methods for identifying borders between IP networks, and built a scalable system for collecting and analyzing interdomain performance measurements. We have integrated open source industry platforms (InfluxDB, Grafana) to create a system that manages our performance probing from approximately 70 Archipelago VPs in 45 networks and 25 countries around the world, and a lighter-weight version, remotely controlled from CAIDA, on 15 Bismark home routers. Our system collects and organizes data, and presents that data for easy analysis and visualization. We will completely document our analysis methods, analyze 18 months of gathered data, and open this system for public use in 2018.

Understanding the nature of Internet congestion: The recent explosion of demand for high-bandwidth content, particularly streaming video, has led to a shift in the nature of Internet traffic. While the last-mile network was previously accepted as the most likely bottleneck in the end-to-end path, this shift in traffic patterns has focused the spotlight on core interdomain peering links as another potential source of bottlenecks. We developed techniques to distinguish between these two types of bottlenecks. We focused on the question: was a TCP flow bottlenecked by an already congested (presumably core) link, or by a link that was otherwise idle, presumably a last-mile link? We showed that it is possible to distinguish between these two cases by exploiting the nature of TCP congestion control and its impact on flow RTT. This discovery has important implications for users, content providers, and regulators seeking an understanding of how congestion occurs in the Internet and how to alleviate it to improve quality of experience for users. (TCP Congestion Signatures, IMC).
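As a rough illustration of how flow RTT can carry such a signal, the sketch below computes two summary statistics over the RTT samples a flow observes early in its lifetime: the normalized gap between the largest and smallest RTT, and the coefficient of variation. The intuition assumed here is that a flow filling an otherwise idle link builds the queue itself, so its RTT rises sharply, while a flow arriving at an already congested link sees RTTs that are high but comparatively flat. The function names, the choice of features, and the 0.5 threshold are illustrative assumptions, not the classifier used in the paper.

```python
from statistics import mean, pstdev


def rtt_features(rtts_ms):
    """Return (norm_diff, cov) for a list of RTT samples in milliseconds.

    norm_diff: (max - min) / max, close to 1 when the RTT grows a lot.
    cov:       population standard deviation divided by the mean.
    """
    if len(rtts_ms) < 2:
        raise ValueError("need at least two RTT samples")
    lo, hi = min(rtts_ms), max(rtts_ms)
    if hi <= 0:
        raise ValueError("RTT samples must be positive")
    norm_diff = (hi - lo) / hi
    cov = pstdev(rtts_ms) / mean(rtts_ms)
    return norm_diff, cov


def looks_self_induced(rtts_ms, norm_diff_threshold=0.5):
    """Hypothetical rule of thumb: a large RTT rise suggests the flow
    itself filled the bottleneck queue (e.g., an idle last-mile link)."""
    norm_diff, _ = rtt_features(rtts_ms)
    return norm_diff >= norm_diff_threshold


# Made-up samples: one flow whose RTT climbs, one whose RTT is high but flat.
idle_link_flow = [20, 22, 30, 45, 70, 100]
busy_link_flow = [95, 100, 98, 102, 97, 101]
print(looks_self_induced(idle_link_flow), looks_self_induced(busy_link_flow))
```

A real classifier would be trained and validated on labeled flows rather than relying on a fixed threshold.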

Challenges in inferring Internet congestion using throughput measurements. We revisited the use of crowdsourced throughput measurements to infer and localize congestion on end-to-end paths, with particular focus on points of interconnection between ISPs. We analyzed four challenges of this approach. First, accurately identifying which link on the path is congested requires fine-grained network tomography techniques not supported by existing throughput measurement platforms. Coarse-grained network tomography can perform this link identification under certain topological conditions, but we show that these conditions do not hold generally on the global Internet. Second, existing measurement platforms do not provide sufficient visibility of paths to popular content sources, and only capture a small fraction of interconnections between ISPs. Third, crowdsourcing measurements inherently risks sample bias: using measurements from volunteers across the Internet leads to uneven distribution of samples across time of day, access link speeds, and home network conditions. Finally, it is not clear how large a drop in throughput to interpret as evidence of congestion. We investigated these challenges in detail, and offered guidelines for deployment of measurement infrastructure, strategies, and technologies that can address empirical gaps in our understanding of congestion on the Internet. (Challenges in Inferring Internet Congestion Using Throughput Measurements, IMC).

Router geolocation reliability in public and commercial databases. Internet measurement research frequently needs to map infrastructure components, such as routers, to their physical locations. Although public and commercial geolocation services are often used for this purpose, their accuracy when applied to network infrastructure has not been previously assessed, since prior work focused on the overall accuracy of geolocation databases, which is dominated by their performance on end-user IP addresses. To evaluate the reliability of router geolocation in databases, we used a dataset of about 1.64M router interface IP addresses extracted from the CAIDA Ark dataset and examined the country- and city-level coverage and consistency of popular public and commercial geolocation databases. Our results show that the databases are not reliable for geolocating routers and that there is room to improve their country- and city-level accuracy. Based on our findings, we present a set of recommendations to researchers on using geolocation databases to geolocate routers. (A Look at Router Geolocation in Public and Commercial Databases, IMC).

Crowd-sourced QoE measurement/analysis. With support from NTT and Comcast, we began a project to design and implement a crowdsourcing-based Quality of Experience (QoE) measurement platform. This platform will integrate and analyze existing CAIDA measurements and data to personalize measurement tasks for crowd-sourced participants. The overall objective of this project is to use crowdsourcing techniques to explore correlations between congestion on interdomain interconnection links and the quality of experience that users perceive and report.

Improving BGP convergence performance: SWIFT fast-reroute framework. Network operators often face the problem of remote outages in transit networks leading to significant downtime (sometimes on the order of minutes). The issue is that BGP, the Internet routing protocol, often converges slowly upon such outages, as large bursts of messages have to be processed and propagated router by router. In collaboration with ETH Zurich, we studied SWIFT, their fast-reroute framework, which enables routers to restore connectivity within a few seconds upon remote outages. SWIFT is based on two key contributions: (i) a fast and accurate inference algorithm; and (ii) a novel encoding scheme. We present a complete implementation of SWIFT and demonstrate that it is both fast and accurate (SWIFT: Predictive Fast Reroute, SIGCOMM).

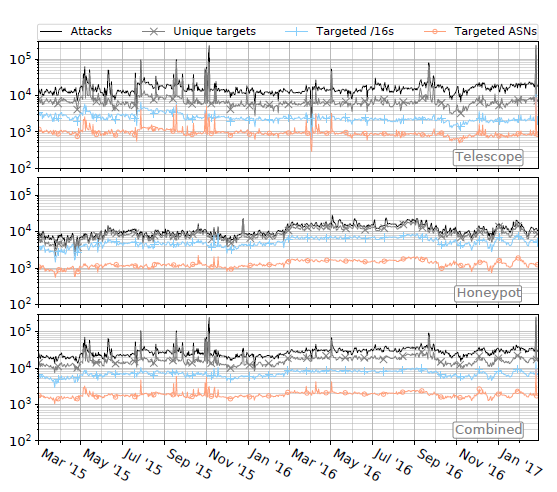

Figure. The number of attacks over time (black lines), and the number of IP addresses (grey lines), /16 network blocks (blue lines), and ASNs (orange lines) targeted over time for: randomly-spoofed DoS attacks observed in the telescope data set (top graph), attack events in the honeypots data set (middle graph), and the union of these two data sets (bottom graph). Note that the combined data is not simply the sum of the top two graphs: in some cases we observe targets attacked by both randomly-spoofed and reflected DoS attacks on the same day (Millions of Targets Under Attack: a Macroscopic Characterization of the DoS Ecosystem, IMC).


Monitoring Global Internet Security and Stability

Our research objectives in Internet security and stability monitoring in 2017 included: (1) characterizing the Denial-of-Service ecosystems, and attempts to mitigate DoS attacks via BGP blackholing; (2) continued support for the Spoofer project, which is focused on supporting the existing Spoofer measurement platform as well as developing and applying new methods to expand visibility of compliance with source address validation best practices; (3) demonstrating the continued prevalence of long-standing TCP vulnerabilities on the global Internet; (4) developing new methods to identify router outages and quantify their impact on Internet resiliency; and (5) enabling near real-time detection and characterization of traffic interception events on the Internet.

Characterizing the Denial-of-Service ecosystem. We introduced and applied a new framework to enable a macroscopic characterization of attacks, attack targets, and DDoS Protection Services (DPSs). We used data from four independent global Internet measurement infrastructures over the last two years: backscatter traffic to UCSD's network telescope; logs from amplification honeypots; a DNS measurement platform covering 60% of the current namespace; and a DNS-based data set focusing on DPS adoption. Our results revealed the massive scale of the DoS problem, including an eye-opening statistic that one-third of all /24 networks recently estimated to be active on the Internet have suffered at least one DoS attack over the last two years. We found that targets are often hit simultaneously by different types of attacks. In our data, Web servers were the most prominent attack target; on average, 3% of the Web sites in .com, .net, and .org were subjected to attacks daily. Finally, we shed light on factors influencing migration to a DPS. (Millions of Targets Under Attack: a Macroscopic Characterization of the DoS Ecosystem, IMC). In a separate study, we developed a method to automatically detect BGP blackholing activity on the global Internet, and used it to discover that hundreds of networks, including large transit providers, as well as about 50 Internet exchange points (IXPs), offer blackholing service to their customers, peers, and members. (Inferring BGP Blackholing Activity in the Internet, IMC).
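One observable signal of blackholing in BGP data is a route announcement tagged with a community that requests it. The sketch below flags prefixes carrying the well-known BLACKHOLE community defined in RFC 7999 (65535:666). It is a minimal illustration under stated assumptions, not the inference method from the study: the input format, the function name, and the idea of using a single community are hypothetical, and a real detector would also need the provider-specific communities that many networks use.

```python
# RFC 7999 well-known BLACKHOLE community; provider-specific values
# would have to be added to this set by the user.
BLACKHOLE_COMMUNITIES = frozenset({"65535:666"})


def blackholed_prefixes(announcements, communities=BLACKHOLE_COMMUNITIES):
    """Return the sorted prefixes whose announcement carries at least one
    blackhole community.

    announcements: iterable of (prefix, communities) pairs, where
    communities is any iterable of "asn:value" strings.
    """
    return sorted(
        {prefix for prefix, tags in announcements if communities & set(tags)}
    )


# Made-up announcements using documentation prefixes and private-use ASNs.
sample = [
    ("192.0.2.1/32", {"65535:666", "64500:100"}),
    ("198.51.100.0/24", {"64500:100"}),
    ("203.0.113.7/32", ["65535:666"]),
]
print(blackholed_prefixes(sample))
```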

Software systems for surveying spoofing susceptibility. We continue to support the Spoofer measurement project, which maintains a service that allows users and operators to determine whether a network they are currently using has been correctly configured to prevent spoofed packets from leaving (or entering) the network, known as source address validation. For the last two years we have stewarded this open source software and service project, and published anonymized results of all tests using our software. This year we put considerable effort into improving usability and performance of the client-server software system (resulting in new software releases), as well as the interactive reporting service targeting operators, response teams, and policy analysts. In the case of a positive spoofing test, the system now generates notifications that we manually send to a registered technical contact for the AS in question. Reports include statistics summary, recently run tests, remediation, results by AS, results by country, results by provider, and results by traceroute. We announced and solicited feedback on this new system at network operator and policy forums. We also developed and released an educational video that explains the importance of this project and demonstrates how to install the client software on a laptop.

Due to the sampling bias rooted in the need for volunteer vantage points, we have pursued additional methods that opportunistically leverage other data sources to infer evidence of networks allowing spoofed traffic. One method we developed extracts routing loops appearing in traceroute data to infer inadequate source address validation (SAV) at a transit provider edge. We found 703 provider ASes that did not implement ingress filtering on at least one of their links for 1,780 customer ASes. By increasing the visibility of networks that allow spoofing, we aim to strengthen the incentives for the adoption of SAV. (Using Loops Observed in Traceroute to Infer the Ability to Spoof, PAM 2017).
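The first step of such an approach, spotting a loop in a single traceroute, can be sketched as follows. The function reports every address that reappears after at least one different responding hop. The function name and input format are assumptions made for illustration, and the sketch deliberately stops at loop detection: deciding whether a given loop actually indicates missing ingress filtering at a provider edge requires further inference that is not attempted here.

```python
def find_loops(hops):
    """Return (address, earlier_index, repeat_index) for each address that
    reappears after at least one different responding hop.

    hops: list of hop addresses in path order; None marks an
    unresponsive hop.
    """
    last_seen = {}
    loops = []
    for i, addr in enumerate(hops):
        if addr is None:
            continue
        if addr in last_seen:
            j = last_seen[addr]
            # Require a different responding address in between, so that
            # consecutive repeats of one address are not counted as loops.
            between = [h for h in hops[j + 1:i] if h is not None and h != addr]
            if between:
                loops.append((addr, j, i))
        last_seen[addr] = i
    return loops


# Made-up path that bounces between two routers.
path = ["10.0.0.1", "192.0.2.1", "198.51.100.1", "192.0.2.1", "198.51.100.1"]
print(find_loops(path))
```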

Measurements of TCP behavior to understand security vulnerabilities. Following up on work we published in 2015 on the prevalence of TCP vulnerabilities, we posted a new technical report that considers the resilience of deployed TCP implementations to blind in-window FIN attacks, an attack not explicitly covered in RFC5961, where an off-path adversary disrupts an established connection by sending a TCP FIN that the victim believes came from its peer, causing the connection to be prematurely closed. We tested 4397 web servers and found 18% of tested connections were vulnerable to this attack. As with our 2015 results, this surprisingly high level of extant vulnerabilities illustrates the Internet's fragility, and demonstrates the need for more systematic, scientific, and longitudinal measurement and quantitative analysis of fundamental properties of critical Internet infrastructure, as well as the importance of better mechanisms to get best security practices deployed. (Resilience of Deployed TCP to Blind FIN Attacks)

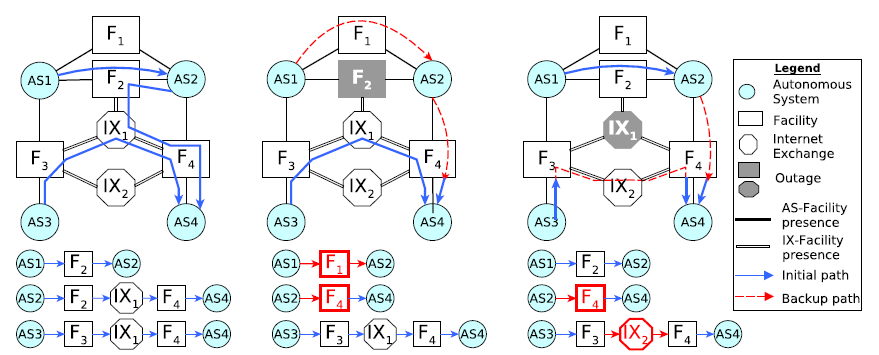

Figure. Examples of how facility-level and IXP-level outages affect the inter-domain paths (Detecting Peering Infrastructure Outages in the Wild, SIGCOMM).


Measuring the impact of router outages. We supported collaborators Matthew Luckie (U. Waikato) and Rob Beverly (NPS) in their development, implementation, and evaluation of a new metric for understanding the dependence of the AS-level Internet on individual routers. Whereas prior work used large volumes of reachability probes to infer outages, Luckie and Beverly used Ark to design an efficient active probing technique that directly and unambiguously reveals router restarts. While they found the Internet core to be largely robust, they identified specific routers that were single points of failure for the prefixes they advertised. (The Impact of Router Outages on the AS-level Internet, SIGCOMM). In a separate study, we developed a methodology for detecting peering infrastructure outages using information inferred from BGP communities. (Detecting Peering Infrastructure Outages in the Wild, SIGCOMM).

Measuring susceptibility to country-level connectivity disruption and manipulation. Despite much recent interest and a large body of research on cyber-attack vectors and mechanisms, we lack rigorous tools to reason about how the macroscopic Internet topology of a country or a region exposes its critical communication infrastructure to compromise through targeted attacks. Part of the problem is that collecting and interpreting data about Internet connectivity, configurations, and associated vulnerabilities is challenging. Due to the massive scale and broadly distributed nature of Internet infrastructure and the scarcity of publicly available data, we must resort to complex measurement and inference methodologies that require significant effort in design, implementation, and validation.

In late 2017 we launched a new NSF (SaTC)-funded project to address this gap. We will start by tackling the challenge of identifying the important components of the Internet topology of a country or region: Autonomous Systems (ASes), Internet Exchange Points (IXPs), PoPs, colocation facilities, and physical cable systems, which together represent the key terrain in cyberspace. To achieve this goal we will undertake a novel multi-layer mapping effort to discover the key components, relationships between them, and their geographic properties. In the next phase (in future years), we will develop methods to identify components that represent potential topological weaknesses, i.e., compromising a few such components would allow an attacker to disrupt, intercept or manipulate Internet traffic of that country. Our multi-layer view of the system will enable an assessment of weaknesses, holistically as well as at specific layers, under various assumptions about the capabilities and knowledge of attackers. Geographic annotations will enable us to consider risks related to the geographic distribution of critical components of the communication infrastructure. (This project started in late 2017; we look forward to reporting early results next year.)

Future Internet Research

We continued to engage in long-term studies of IPv6 evolution, adaptation of IPv4 technology to IPv4 address scarcity (e.g., CGN), and a new proposed Internet protocol architecture: Named Data Networking.

IPv6: Measuring and Modeling the IPv6 Transition

Analysis of reported IPv4 address transfers. We continued our analysis of IPv4 address transfers as reported by the Regional Internet Registries (RIRs), with the goal of obtaining some insight into the nature of the transfer market. Over the last few years, the IPv4 transfer markets have grown significantly. We completed a paper describing our analysis of reported IPv4 address transfers, and our methodology for inferring transfers using BGP data. This paper extends our earlier conference paper (CoNEXT 2013) on transfer markets with significantly expanded analysis of reported transfers, along with a refined BGP-based method for detecting and filtering transfers (On IPv4 transfer markets: Analyzing reported transfers and inferring transfers in the wild, Elsevier).

Detecting Carrier-Grade NAT (CGN) in U.S. ISP networks. Since CGNs may impair performance, understanding the extent of their deployment is of interest to policymakers, ISPs, and users. After our initial study (NAT Revelio: Detecting NAT444 (CGN) in the ISP, PAM 2016), we collaborated to deploy Revelio on the FCC's measurement platform (operated by SamKnows) in the U.S., where we found little use of CGN. (Tracking the Big NAT across Europe and the U.S., CAIDA).

Measuring the deployment of IPv6. We found that the IPv6 network is maturing, as indicated by its increasing similarity in size and composition, AS path congruity, topological structure, and routing dynamics, to the public IPv4 Internet. While core Internet transit providers have mostly deployed IPv6, edge networks are lagging behind. We completed a study extending our previous analysis of IPv6 deployment by adding 5 years of more recent data and completely redoing the analysis. We expect to publish this work in 2018.

Named Data Networking: Next Phase

We decreased our participation in NDN in 2017 as funding ran out, but hope to re-engage when the code base is sufficiently mature to experiment with using it to support an Internet data-sharing ecosystem. We continued to maintain a local UCSD hub on the national NDN testbed using the NDN Platform software running on an Ubuntu server on SDSC's machine room floor.

Economics and Policy

A framework for promoting interconnections in the developing world. In collaboration with researchers at Universidad Carlos III de Madrid, we analyzed the potential interconnection of existing African IXPs, which we expect would increase peering density, traffic localization, ISP cache deployment, and thus performance for users. We demonstrated that a naive approach to interconnecting African IXPs (building a minimum spanning tree) would be infeasible in practice. We showed how to consider socio-economic realities as constraints in the topology optimization process, and parameterized them using publicly available data. We presented and evaluated a 4-step framework to build distributed IXP infrastructure, ensuring that each step respects the practical constraints we added. We demonstrated the quantitative benefits in terms of shorter AS paths, smaller RTTs, and traffic localization that could be realized from each step of the process. (Reshaping the African Internet: From Scattered Islands to a Connected Continent, Elsevier).
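For readers unfamiliar with the naive baseline, a minimum spanning tree connects all sites with the smallest total link cost and nothing more. The sketch below builds one with Kruskal's algorithm. The site labels and costs are made up, and cost here is an abstract number; the point of the study is precisely that such a purely cost-driven tree ignores the socio-economic constraints that make it infeasible in practice.

```python
def minimum_spanning_tree(nodes, edges):
    """Kruskal's algorithm.

    nodes: iterable of node labels.
    edges: iterable of (cost, u, v) tuples for candidate links.
    Returns the chosen links as (u, v, cost), in the order they were added.
    """
    parent = {n: n for n in nodes}

    def find(n):
        # Union-find lookup with path halving.
        while parent[n] != n:
            parent[n] = parent[parent[n]]
            n = parent[n]
        return n

    tree = []
    for cost, u, v in sorted(edges):
        root_u, root_v = find(u), find(v)
        if root_u != root_v:  # the link joins two separate components
            parent[root_u] = root_v
            tree.append((u, v, cost))
    return tree


# Hypothetical sites and link costs, for illustration only.
sites = ["IXP-A", "IXP-B", "IXP-C", "IXP-D"]
links = [
    (4, "IXP-A", "IXP-B"),
    (1, "IXP-A", "IXP-C"),
    (3, "IXP-B", "IXP-C"),
    (2, "IXP-C", "IXP-D"),
    (5, "IXP-B", "IXP-D"),
]
tree = minimum_spanning_tree(sites, links)
print(tree, sum(cost for _, _, cost in tree))
```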

Internet infrastructure and congestion in Africa. We developed and evaluated a framework that aims to solve both the issues of poor traffic localization and poor access to popular content in the African region. The solution we pursued is to interconnect existing African IXPs, paving the way toward increased peering density, traffic localization, and incentivizing CDNs and content providers to deploy caches locally. We demonstrated that a naive approach to interconnecting African IXPs (building a minimum spanning tree) would be infeasible in practice. Our approach highlights how real-world solutions require careful consideration of political and economic factors (Investigating the Causes of Congestion on the African IXP substrate, IMC).

Workshop on Internet Economics (WIE). In December, CAIDA hosted the 8th interdisciplinary Workshop on Internet Economics (WIE) at UC San Diego's Supercomputer Center. This workshop series provides a forum for researchers, Internet facilities and service providers, technologists, economists, theorists, policy makers, and other stakeholders to exchange views on current and emerging regulatory and policy debates. The FCC's expected decision to repeal the 2015 classification of broadband Internet access service as a telecommunications (common carrier) service, released during the workshop on 14 December 2017, set the stage for vigorous discussion on what type of data can inform debate, development, and empirical evaluation of the public policies we will need for Internet services in the future. The report highlights the discussions and presents relevant open research questions identified by participants (Workshop on Internet Economics Final Report, CCR 2018).

Measurement Infrastructure and Data Sharing Projects

Platform for Applied Network Data Analysis

For the last 20 years CAIDA has developed many data-focused services, products, tools and resources to advance the study of the Internet. CAIDA has also spent years cultivating relationships across disciplines (networking, security, economics, law, policy) with those interested in CAIDA data, but the impact thus far has been limited to a handful of researchers. The current mode of collaboration simply does not scale to the exploding interest in scientific study of the Internet. To address this gap, in late 2017 we launched a new project, funded by NSF's Data Infrastructure Building Blocks (DIBBS) program, to integrate several existing measurement and analysis components previously developed by CAIDA into a new Platform for Applied Network Data Analysis (PANDA). Our goal is to enable new scientific directions, experiments and data products for a wide set of researchers from the four targeted disciplines: networking, security, economics, and public policy. We will emphasize efficient indexing and processing of terabyte archives, advanced visualization tools to show geographic and economic aspects of Internet structure, and careful interpretation of displayed results. This five-year project has just started; we have a long but exciting road ahead of us.

Archipelago (Ark)

CAIDA has conducted measurements of the Internet topology since 1998. Our current measurement tool scamper (deployed on Ark since 2007) tracks global IP-level connectivity by sending probe packets from a set of source monitors to millions of geographically distributed destinations across the IPv4 and IPv6 address space. We also use a subset of Ark monitors to conduct daily traceroutes to every announced BGP prefix, with each monitor probing the entire set of targets independently and completing exactly one pass of the target set every calendar day (aligned on UTC boundaries).

In addition to supporting these continuous measurements, Ark provides a secure and stable platform for researchers to run their own vetted experiments, including: acting as probe receivers for the Spoofer Project; measurement surveys to infer TCP vulnerabilities; an alias resolution measurement campaign of 10k targets with MIDAR for Dave Choffnes (Northeastern University); measurements to infer borders between ISPs (bdrmap: Inference of Borders Between IP Networks, IMC best paper 2016) and to detect congestion on these interdomain links; measurements to localize middleboxes (boxMap, University of Waterloo); and measurements to study Internet transport evolution (Using UDP for Internet Transport Evolution, ETH TIK 2016).

In 2017, we deployed 44 new vantage points, adding 23 new nodes in the U.S. and nodes in Belgium, Bhutan, China, the Czech Republic, France, Ghana, Ireland, Italy, Paraguay, South Africa, Uruguay, and Zambia. Check the Archipelago Monitor Locations page to view all current hosting sites.

To further develop and improve Ark measurement capabilities, we gauged requirements to automate alias resolution MIDAR runs for community use in 2018. We also started working with Darryl Veitch on selecting network time services in all regions of the globe to increase the accuracy of network timing by our Ark monitors.

Finally, we continued developing our prototype system Henya for querying and visualizing our historical and ongoing traceroute data. Henya supports interactive data exploration with built-in analyses and visualizations for commonly occurring needs and provides full remote access to terabytes of traceroute data from the command-line, allowing researchers to execute queries and write their own analysis and visualization scripts. The Vela web interface and RESTful API for Vela traceroute/ping measurements support requesting and executing on-demand topology measurements in real time.

Note: To expand our reach of vantage points from which to launch lightweight active measurements, we began to re-write Periscope, a platform that unifies the disparate Looking Glass (LG) interfaces and instances on the global Internet into a standardized publicly-accessible querying API supporting on-demand measurements. This effort has turned out to be substantial. We hope to re-open the Periscope service to external researchers in late 2018.

Scamper

Matthew Luckie (U. Waikato) made efficiency improvements to scamper under the hood, fixing bugs and adding support for sending JSON over the control socket, fixing possible memory leaks detected by static analysis, and implementing an optional new driver to infer IPv6 device reboot windows if the device returns an incrementing identifier field in the IPv6 fragmentation header. He also added a blind FIN tbit test that tests receiver behavior to TCP FIN packets which could have come from an off-path attacker. (Scamper Release 20170822 and Scamper Release 20171204, CAIDA).
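The reboot-window inference described above can be illustrated with a minimal sketch. It is not taken from scamper's source: it assumes each probe yields a timestamp and the identifier value from the IPv6 fragmentation header, and that a drop in an otherwise increasing identifier hints that the counter was reset by a reboot at some point between the two probes. The function name and sample format are hypothetical, and counter wraparound is ignored.

```python
from typing import List, Tuple

# Hypothetical sample format: (probe time in seconds, IPv6 fragment identifier)
Sample = Tuple[float, int]


def infer_reboot_windows(samples: List[Sample]) -> List[Tuple[float, float]]:
    """Return (start, end) time windows in which a reboot may have occurred.

    Assumption: the device increments its identifier for each fragmented
    response, so a decrease between consecutive probes suggests a reset.
    """
    ordered = sorted(samples)  # order by probe time
    windows = []
    for (t_prev, id_prev), (t_cur, id_cur) in zip(ordered, ordered[1:]):
        if id_cur < id_prev:
            # The reset happened somewhere between these two probes.
            windows.append((t_prev, t_cur))
    return windows


samples = [(0.0, 100), (60.0, 105), (120.0, 3), (180.0, 9)]
windows = infer_reboot_windows(samples)
print(windows)
```

The width of each window is bounded by the probing interval, so more frequent probing would narrow the inferred reboot time.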

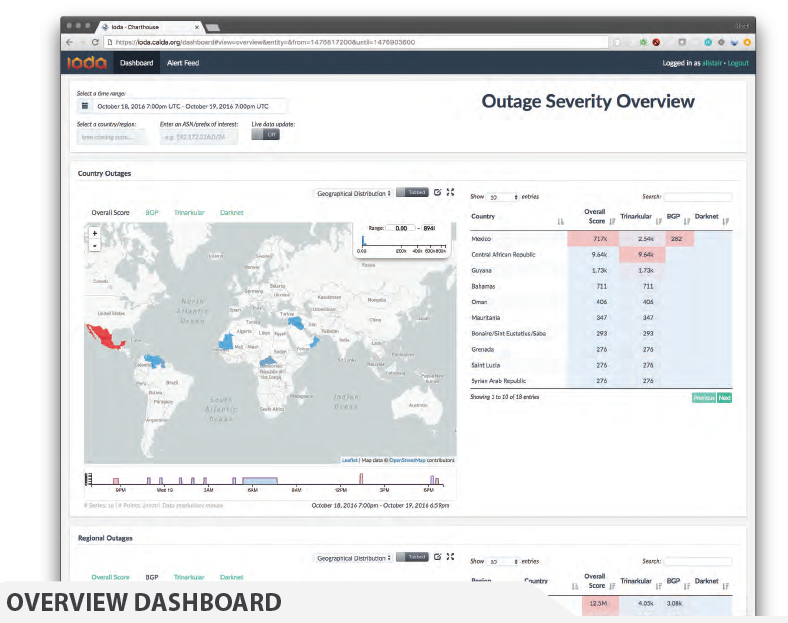

Figure. This example screenshot shows the countries in which the Active Probing data indicated an outage (IODA Poster).

IODA platform

In January 2017, we launched the experimental IODA platform. The IODA system is designed as "Software as a Service", based on a complex distributed infrastructure and large and diverse live data streams taken as input. The prototype system currently runs as an experimental service 24/7 with high-level interactive dashboards accessible at ioda.caida.org. This year we also optimized and refined the algorithm that coalesces repeated alerts from automated criteria detecting signal level shifts for each data source (BGP monitoring, unsolicited traffic reaching the UCSD Network Telescope, and active probing) at various aggregation granularities, e.g., AS, country. Different system modules store alerts in a relational database, email alert notifications, and generate reachable/unreachable time series. A web-based dashboard combines alert and time-series data to enable deeper inspection of inferred connectivity disruptions. We gave several talks and prepared a poster for education and outreach, to promote the platform.
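As a rough illustration of what coalescing repeated alerts can look like, the sketch below merges alerts for the same data source and aggregation entity when they occur close together in time. This is not IODA's algorithm: the `Alert` and `Event` types, the `coalesce` function, and the 30-minute `max_gap` threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Alert:
    source: str  # e.g. "bgp", "telescope", "active-probing"
    entity: str  # aggregation unit, e.g. "country:GH" or "asn:64500"
    time: int    # Unix timestamp of the detected level shift


@dataclass
class Event:
    source: str
    entity: str
    start: int
    end: int
    count: int   # number of raw alerts merged into this event


def coalesce(alerts: List[Alert], max_gap: int = 1800) -> List[Event]:
    """Merge alerts sharing (source, entity) that are at most max_gap seconds apart."""
    events: List[Event] = []
    latest: Dict[Tuple[str, str], Event] = {}
    for a in sorted(alerts, key=lambda x: x.time):
        key = (a.source, a.entity)
        ev = latest.get(key)
        if ev is not None and a.time - ev.end <= max_gap:
            # Close enough to the previous alert: extend the open event.
            ev.end = a.time
            ev.count += 1
        else:
            ev = Event(a.source, a.entity, a.time, a.time, 1)
            latest[key] = ev
            events.append(ev)
    return events


alerts = [
    Alert("bgp", "country:GH", 1000),
    Alert("active-probing", "country:GH", 1200),
    Alert("bgp", "country:GH", 1600),
    Alert("bgp", "country:GH", 9000),
]
events = coalesce(alerts)
for ev in events:
    print(ev)
```

Keeping the three data sources separate in the key means a dashboard could still show which signals agree on a given disruption.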

BGPStream software framework

In 2017, we began seeing regular use of BGPStream, our scalable, modular open-source software framework for the analysis of historical and live BGP measurement data, by academics and at industry-sponsored events (e.g., hackathons). Our paper on BGPStream received the prestigious IRTF Applied Networking Research Prize 2017 (BGPStream: a software framework for live and historical BGP data analysis, IMC 2016). In collaboration with Cisco Systems, we redesigned the lower layers of the libBGPStream library to natively support the BGP Monitoring Protocol (BMP, RFC 7854). Specifically, we re-architected libBGPStream to easily support multiple data formats and feeds and then developed code to natively support streams collected by an OpenBMP collector. This significant innovation, the key feature of the forthcoming v2 release of BGPStream, allows users to dramatically reduce latency in monitoring BGP and detecting hijacking attacks. In addition, it will create the opportunity for CAIDA (and for the entire community) to observe BGP from additional vantage points (routers) supporting BMP.

UCSD Network Telescope

We maintain the UCSD Network Telescope measurement infrastructure to enable studying of Internet phenomena by monitoring and analyzing unsolicited traffic arriving at a globally routed underutilized /8 network. After discarding the legitimate traffic from the incoming packets, the remaining data represent a continuous view of anomalous unsolicited traffic, or Internet Background Radiation (IBR). We maximize the research utility of these data by enabling near-real-time data access to vetted researchers, which requires tackling the associated challenges in flexible storage, curation, and privacy-protected sharing of large volumes of data. Supporting this effort is our Corsaro software for capture, processing, management, analysis, visualization and reporting of collected data. The current Near-Real-Time Network Telescope Dataset includes: (i) the most recent 60 days of raw telescope traffic (in pcap format); and (ii) aggregated flow data for all telescope traffic since February 2008 (in Corsaro flow tuple format). In late 2017 NSF's Computing Research Infrastructure program funded our new STARDUST (Sustainable Tools for Analysis and Research on Darknet Unsolicited Traffic) project to enable sustainable operation of the UCSD Network Telescope infrastructure and maximize its utility to researchers from various disciplines.

Privacy-Sensitive Data Sharing / IMPACT

The Department of Homeland Security Information Marketplace for Policy and Analysis of Cyber-risk & Trust (IMPACT) project provides vetted researchers with network security-related data in a disclosure-controlled manner that respects the security, privacy, legal, and economic concerns of Internet users and network operators. IMPACT connects Data Providers with cybersecurity researchers. CAIDA contributes to the IMPACT Project as a Data Provider and Data Host, as well as with developing technical, legal, and practical aspects of IMPACT policies and procedures.

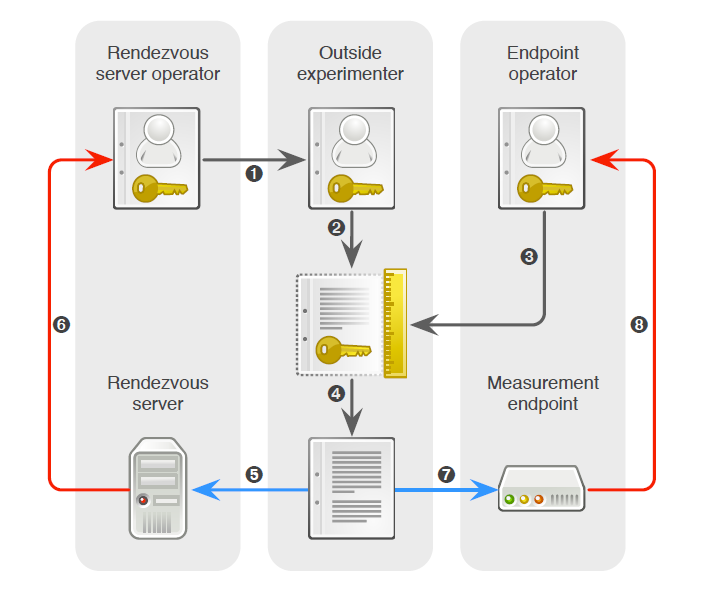

Figure. Authorization relationships in a PacketLab experiment (PacketLab: A Universal Measurement Endpoint Interface, IMC).

PacketLab

In collaboration with Kirill Levchenko at UC San Diego's CSE department, we explored the idea of a universal measurement endpoint interface that could lower the barriers faced by experimenters and measurement endpoint operators. We described PacketLab as a candidate measurement interface that can accommodate the research community's demand for future global-scale Internet measurement (PacketLab: A Universal Measurement Endpoint Interface, IMC). We also submitted a proposal to NSF to explore the many exciting research challenges involved in implementing such a system.

Data

In the interests of reproducibility of our own work and facilitating expanded scientific analysis of these topics, we invest significant effort to ensure that data we gather or derive in our work is available to other researchers. We list all available data, including legacy data, on our CAIDA Data page, and regularly email the principal researchers with updates and important news. Last year we added seven new datasets, and by the end of 2017 were serving more than 60 unique datasets. Below is a brief description of the new datasets released in 2017.

New Datasets

Mapping peering interconnections at the facility level. In April, we released a dataset, AS Facilities, that contains information about geographic locations of interconnection facilities, autonomous systems (ASes) that have peering interconnections at those facilities, and the engineering approach to interconnection. This dataset used the methodology described in Mapping Peering Interconnections to a Facility (CoNEXT 2015).

CAIDA UCSD Geolocated Router Dataset. In order to evaluate the reliability of router geolocation in popular public and commercial geolocation databases, we created and made available via IMPACT a ground-truth dataset of 16,586 router interface addresses and locations, with city-level accuracy. Our evaluation methodology, regional breakdown analysis of the databases' accuracy, and resulting recommendations to researchers are described in A Look at Router Geolocation in Public and Commercial Databases (IMC 2017).

PeeringDB data. We began to share via our website (with permission) historical snapshots of PeeringDB, an online database of information about peering policies, traffic volumes, and geographic presence of participating networks. The first version of PeeringDB was a MySQL database, which was not scalable, lacked security features and data validation mechanisms (presenting potential risks of exposing contact information to spammers), and contained typos. Starting at the end of March 2016, PeeringDB was switched to a new data schema and API. CAIDA manages the only repository of historic PeeringDB data, which includes daily SQL snapshots from July 2010 until March 2016.

Border Mapping (bdrmap) Dataset. We released the Border Mapping Dataset that consists of a set of border routers inferred to be owned by networks hosting Ark Vantage Points (VPs) along with the set of neighbor routers connected to each border router. Building on years of prior work in topology discovery, alias resolution, AS relationship inference, and active probing systems, CAIDA's border mapping tool bdrmap tackles the problem of automatically and correctly inferring network boundaries in a traceroute. The border mapping methodology, data collection, and analysis phases are described in the paper, bdrmap: Inference of Borders Between IP Networks (IMC 2016).

Paper Supplement Datasets. We added two paper supplement datasets: Traceroute Probe Method (IMC 2009) and AS Router Outages (SIGCOMM 2017). The IMC 2009 paper data supplement was derived from the MIT ANA Spoofer project. The data has been anonymized using the IP prefix-preserving method of CryptoPAn. The SIGCOMM 2017 paper data supplement contains a survey of nearly 150K distinct routers over 2.5 years. This data can be used to study deployment of BGP configurations, to evaluate network resilience, and to cross-validate various outage detection methods.
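To make the prefix-preserving property concrete, here is a minimal sketch of the general idea: each output bit is the input bit XORed with a keyed pseudorandom function of the preceding input bits, so two addresses that share a k-bit prefix map to anonymized addresses that share exactly a k-bit prefix. This is not the CryptoPAn implementation; HMAC-SHA256 is swapped in as the pseudorandom function for illustration, and the function names and key are hypothetical.

```python
import hashlib
import hmac
import ipaddress


def anonymize_ipv4(addr: str, key: bytes) -> str:
    """Prefix-preserving anonymization sketch (HMAC-SHA256 as the keyed PRF)."""
    bits = format(int(ipaddress.IPv4Address(addr)), "032b")
    out = []
    for i in range(32):
        prefix = bits[:i]  # the i most significant original bits
        digest = hmac.new(key, prefix.encode(), hashlib.sha256).digest()
        flip = digest[0] & 1  # one pseudorandom bit determined by the prefix
        out.append(str(int(bits[i]) ^ flip))
    return str(ipaddress.IPv4Address(int("".join(out), 2)))


def common_prefix_len(a: str, b: str) -> int:
    """Number of leading bits two IPv4 addresses have in common."""
    diff = int(ipaddress.IPv4Address(a)) ^ int(ipaddress.IPv4Address(b))
    return 32 - diff.bit_length()


key = b"example-key"  # hypothetical key; a real deployment keeps this secret
a, b, c = "192.0.2.10", "192.0.2.77", "198.51.100.1"
anon_a, anon_b, anon_c = (anonymize_ipv4(x, key) for x in (a, b, c))
print(common_prefix_len(a, b), common_prefix_len(anon_a, anon_b))
print(common_prefix_len(a, c), common_prefix_len(anon_a, anon_c))
```

Because the mapping is deterministic for a given key, the same address always anonymizes to the same value, which keeps subnet structure usable for analysis without revealing the original addresses.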

Macroscopic Internet Topology Data Kit (ITDK). We released two new ITDK datasets: ITDK-2017-02 and ITDK-2017-08. In comparison to earlier releases, the latest ITDK (2017-08) utilizes traceroutes not only from our Archipelago measurement infrastructure but also from the RIPE Atlas measurement infrastructure.

Data Collection Statistics

The graphs below show the cumulative amount of data collected over the last several years by our primary data collection infrastructures, Archipelago and the UCSD Network Telescope. We are currently collecting more than 3 TB of uncompressed data per day (more than 95% of which is Telescope data). In 2017 CAIDA captured about 6 TB of uncompressed topology data and about 930 TB of Internet background radiation (IBR) traffic trace data.

We did not collect any new Anonymized Internet Passive traces (our most popular data sets among researchers) in 2017. The last Internet backbone traffic trace was captured in April 2016, when the backbone link on which these data were collected was upgraded to 100G (while our hardware can only handle 10G). During 2017, we secured access to a new 10G link at equinix-nyc and now plan to resume our trace collection in 2018. We are still pursuing the (costly) effort to develop monitors that can operate on 100G links.

Data Distribution Statistics

There are two complementary ways that users can request access to CAIDA's data: through the CAIDA portal and through the Information Marketplace for Policy and Analysis of Cyber-risk and Trust (IMPACT) portal. Datasets shared through the CAIDA portal fall into two categories: public and by-request. Public datasets are available to users who agree to CAIDA's Acceptable Use Policy for public data. After filling out the corresponding public data request form, users are directed to the data download page. Access to the by-request datasets is subject to approval by a CAIDA data administrator. These datasets are available for use by academic researchers, US government agencies, and corporate entities who participate in CAIDA's membership program. Users are required to provide a brief description of their intended use of the data and to agree to an Acceptable Use Policy.

Access to the CAIDA datasets through IMPACT is subject to corresponding IMPACT Terms and Agreements, and must be approved by IMPACT staff. These datasets are available for use by academic researchers, government agencies and corporate entities from DHS-Approved Locations (US, Canada, Australia, United Kingdom, Israel, Japan, the Netherlands, and Singapore). During 2017, access to several popular CAIDA datasets (e.g., DDoS, Archived Telescope, and the most recent Ark IPv4 topology data) became available only through IMPACT. (All IPv6 Ark topology data, and all IPv4 topology data older than one year, are public.)

The graphs below show the annual counts of unique visitors who downloaded CAIDA datasets (public, by-request, and IMPACT) and the total size of downloaded data. In 2017 we granted access to the CAIDA by-request and IMPACT datasets to more than 450 new users. Even though the number of users who downloaded Anonymized Internet traces datasets increased, the total number of users who downloaded CAIDA data decreased in comparison with the previous four years. This reduction might be explained by stricter scrutiny of IMPACT requests. Overall, more than 140 TB of data were downloaded in 2017, representing a 75% increase (mostly due to the growth of Anonymized Internet traces downloads). Note that these statistics do not include Near-Real-Time Telescope dataset dissemination: users can analyze these data only on CAIDA computers and are not allowed to download them.

Data Distribution Statistics: (left) Number of unique users downloading CAIDA data. Colors indicate different datasets. Since mid-2016 we have made the UCSD Network Telescope data available exclusively through IMPACT, which explains the decrease in unique users downloading CAIDA datasets in 2017; (right) Volume of data downloaded annually. Colors indicate different downloaded datasets. Multiple downloads of the same file by the same user, which are common, are counted only once.
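The counting rule in the caption (repeat downloads of the same file by the same user count once) can be sketched as follows. The log record layout and the `summarize_downloads` function are hypothetical and only illustrate the deduplication step, not CAIDA's actual accounting scripts.

```python
from typing import Iterable, Set, Tuple

# Hypothetical log record: (user id, file path, file size in bytes)
Record = Tuple[str, str, int]


def summarize_downloads(records: Iterable[Record]) -> Tuple[int, int]:
    """Return (unique users, total bytes), counting each (user, file) pair once."""
    seen: Set[Tuple[str, str]] = set()
    users: Set[str] = set()
    total_bytes = 0
    for user, path, size in records:
        users.add(user)
        if (user, path) in seen:
            continue  # repeat download of the same file by the same user
        seen.add((user, path))
        total_bytes += size
    return len(users), total_bytes


log = [
    ("alice", "traces/a.pcap.gz", 500),
    ("alice", "traces/a.pcap.gz", 500),  # repeat, ignored for volume
    ("bob", "traces/a.pcap.gz", 500),
    ("bob", "topology/b.warts.gz", 200),
]
unique_users, volume = summarize_downloads(log)
print(unique_users, volume)
```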

Publications using public and/or restricted CAIDA data (by non-CAIDA authors)

We know of a total of 153 publications in 2017 by non-CAIDA authors that used CAIDA data. (Not all publications are reported back to us, so this is a lower bound that we update as we learn of new publications.) Some of these papers used more than one dataset. Please let us know if you know of a paper using CAIDA data that's not on our list. The latest list is at: Non-CAIDA Publications using CAIDA Data.

![[Figure: request statistics for restricted data]](images/2017_annual_report_paper_count.png)

Tools

CAIDA develops supporting tools for Internet data collection, analysis and visualization. The following table displays CAIDA-developed and currently supported tools and the number of external downloads (by unique IP address) during 2017.

![[Figure: The number of times each tool was downloaded from the CAIDA web site in 2017.]](images/caida-tools-downloads-2017.jpg)

| Tool | Description | Downloads |
|---|---|---|
| arkutil | RubyGem containing utility classes used by the Archipelago measurement infrastructure and the MIDAR alias-resolution system. | 265 |
| Autofocus | Internet traffic reports and time-series graphs. | 279 |
| BGPStream | Open-source software framework for live and historical BGP data analysis, supporting scientific research, operational monitoring, and post-event analysis. | 557 |
| Chart::Graph | A Perl module that provides a programmatic interface to several popular graphing packages. | 191 |
| CoralReef | Measures and analyzes passive Internet traffic monitor data. | 401 |
| Corsaro | Extensible software suite designed for large-scale analysis of passive trace data captured by darknets, but generic enough to be used with any type of passive trace data. | 347 |
| Cuttlefish | Produces animated graphs showing diurnal and geographical patterns. | 190 |
| dbats | High-performance time series database engine. | 130 |
| dnsstat | DNS traffic measurement utility. | 258 |
| iatmon | Ruby+C+libtrace analysis module that separates one-way traffic into defined subsets. | 99 |
| iffinder | Discovers IP interfaces belonging to the same router. | 325 |
| libsea | Scalable graph file format and graph library. | 165 |
| libtimeseries | Provides a high-performance abstraction layer for efficiently writing to time series databases. | 113 |
| kapar | Graph-based IP alias resolution. | 232 |
| Marinda | A distributed tuple space implementation. | 200 |
| MIDAR | Monotonic ID-Based Alias Resolution tool that identifies IPv4 addresses belonging to the same router (aliases) and scales up to millions of nodes. | 265 |
| Motu | Dealiases pairs of IPv4 addresses. | 90 |
| mper | Probing engine for conducting network measurements with ICMP, UDP, and TCP probes. | 175 |
| otter | Visualizes arbitrary network data. | 432 |
| plot-latlong | Plots points on geographic maps. | 105 |
| plotpaths | Displays forward traceroute path data. | 72 |
| rb-mperio | RubyGem for writing network measurement scripts in Ruby that use the mper probing engine. | 327 |
| RouterToAsAssignment | Assigns each router from a router-level graph to its Autonomous System (AS). | 608 |
| rv2atoms (including straightenRV) | A tool to analyze and process a Route Views table and compute BGP policy atoms. | 43 |
| scamper | A tool to actively probe the Internet to analyze topology and performance. | 349 |
| sk_analysis_dump | A tool for analysis of traceroute-like topology data. | 113 |
| topostats | Computes various statistics on network topologies. | 221 |
| Walrus | Visualizes large graphs in three-dimensional space. | 667 |

Publications

(ordered by primary topic area, but many cross multiple topics)

Internet Performance Measurement

- SWIFT: Predictive Fast Reroute, ACM SIGCOMM, Aug 2017.

- Resilience of Deployed TCP to Blind FIN Attacks, Center for Applied Internet Data Analysis (CAIDA), Oct 2017.

- A Look at Router Geolocation in Public and Commercial Databases, IMC, Nov 2017.

- PacketLab: A Universal Measurement Endpoint Interface, IMC, Nov 2017.

- Inferring BGP Blackholing Activity in the Internet, IMC, Nov 2017.

Monitoring Global Internet Security and Stability

- Using Loops Observed in Traceroute to Infer the Ability to Spoof, PAM, Mar 2017.

- The Impact of Router Outages on the AS-level Internet, ACM SIGCOMM, Aug 2017.

- The 9th Workshop on Active Internet Measurements (AIMS-9) Report, ACM SIGCOMM CCR, Oct 2017.

- Millions of Targets Under Attack: a Macroscopic Characterization of the DoS Ecosystem, IMC, Nov 2017.

Policy Research

- Workshop on Internet Economics (WIE2016) Final Report, ACM SIGCOMM CCR, Jul 2017.

- Detecting Peering Infrastructure Outages in the Wild, ACM SIGCOMM, Aug 2017.

- Reshaping the African Internet: From Scattered Islands to a Connected Continent, Elsevier, Nov 2017.

- TCP Congestion Signatures, IMC, Nov 2017.

- Challenges in Inferring Internet Congestion Using Throughput Measurements, IMC, Nov 2017.

- Investigating the Causes of Congestion on the African IXP substrate, IMC, Nov 2017.

Future Internet Research

- Tracking the Big NAT across Europe and the U.S., Center for Applied Internet Data Analysis (CAIDA), Apr 2017.

- On IPv4 transfer markets: Analyzing reported transfers and inferring transfers in the wild, Elsevier, Oct 2017.

Future Internet Research: Named Data Networking

- Named Data Networking Next Phase (NDN-NP) Project May 2016 - April 2017 Annual Report, NDN, Nov 2017.

CAIDA Blog

CAIDA 2017 in Numbers

In 2017, CAIDA published 14 peer-reviewed papers, 2 technical reports, and 2 workshop reports, and made 31 presentations. CAIDA researchers presented their results and findings at NZNOG (Tauranga, New Zealand), IETF (Chicago, IL), Internet2 (San Francisco, CA), IIJ (Tokyo, Japan), IMC (London, UK), and at other workshops and meetings. A complete list of presented materials is available on the CAIDA Presentations page.

We organized and hosted four workshops: AIMS 2017: Workshop on Active Internet Measurements, the 8th NDN Retreat/3rd NDN Community Meeting, IMAPS 2017: Conflict and Contention in the Digital Age, and WIE 2017: 8th Workshop on Internet Economics. We co-organized the 10th CAIDA-WIDE Workshop held in Japan at IIJ in November 2017.

In 2017, our web site www.caida.org attracted 553,689 unique visitors, with an average of 1.6 visits per visitor, serving an average of 3.44 pages per visit.

During 2017, CAIDA employed 15 staff (researchers, programmers, data administrators, technical support staff), hosted 2 postdocs, 3 PhD students, 9 graduate students, and 17 undergraduate students.

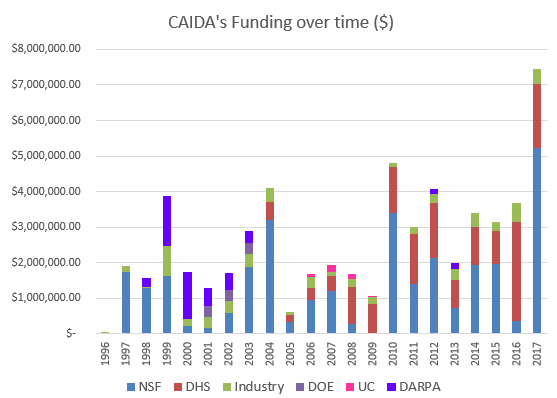

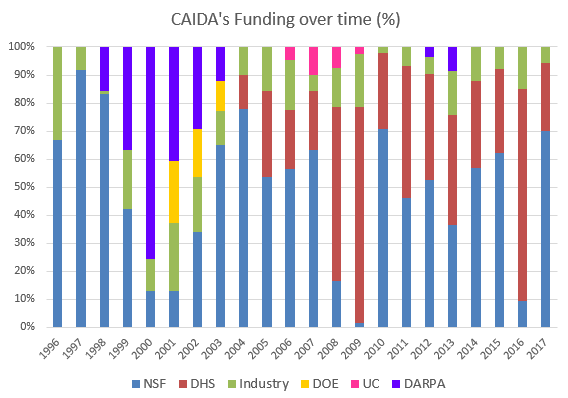

We received $7.45M to support our research activities from the following sources:

![[Figure: Allocations by funding source]](images/allocation_byfunding_2017.png)

| Funding Source | Amount ($) | Percentage |
|---|---|---|
| NSF | $5,181,910 | 70% |
| DHS | $1,792,918 | 24% |
| Other | $323,067 | 4% |
| Gift & Members | $155,000 | 2% |
| Total | $7,452,895 | 100% |

Two views of historical funding allocations are shown below, presented by total amount received and by percentage based on funding source.

The charts below show CAIDA expenses by type of operating expense and by program area:

![[Figure: Operating Expenses]](images/operating_expenses_2017.png)

| Expense Type | Amount ($) | Percentage |
|---|---|---|
| Labor | $2,115,292.64 | 60% |
| Indirect Costs (IDC) | $1,099,635.73 | 31% |
| Professional Development | $6,633.11 | <1% |
| Supplies & Expenses | $83,297.13 | 2% |
| Workshop & Visitor Support | $19,968.86 | 1% |
| CAIDA Travel | $54,893.94 | 2% |
| Subcontracts | $35,208.33 | 1% |
| Equipment | $86,384.83 | 2% |
| Total | $3,501,314.57 | 100% |

![[Figure: Expenses by Program Area]](images/expenses_byprogram_2017.png)

| Program Area | Amount ($) | Percentage |
|---|---|---|
| Economics & Policy | $244,278.96 | 7% |
| Future Internet | $158,955.47 | 5% |
| Mapping & Congestion | $578,636.30 | 17% |
| Infrastructure | $1,281,361.63 | 37% |
| Security & Stability | $1,151,332.92 | 33% |
| Outreach | $68,127.84 | 2% |
| CAIDA Internal Operations | $18,621.45 | 1% |
| Total | $3,501,314.57 | 100% |

Supporting Resources

CAIDA's accomplishments are in large measure due to the high quality of our visiting students and collaborators. We are also fortunate to have financial and IT support from sponsors, members, collaborators, and monitor hosting sites.

UC San Diego Students

- Alex Gamero-Garrido, PhD student at UC San Diego

- Jaehyun Park, graduate student at UC San Diego

- Ojas Gupta, graduate student at UC San Diego

- Induja Sreekanthan, graduate student at UC San Diego

- Gautam Akiwate, graduate student at UC San Diego

Visiting Scholars

- Vasileios Giotsas, postdoc from University College London (UCL)

- Ricky Mok, postdoc from Hong Kong Polytechnic University

- Danilo Cicalese, PhD student from Telecom ParisTech, France

- Mattijs Jonker, PhD student from University of Twente, The Netherlands

- Anant Shah, graduate student from Colorado State University

- Pawel Kalinowski, graduate student from Naval Postgraduate School

- Xiaohong Deng, graduate student from University of New South Wales (UNSW) Sydney, Australia

- Esteban Carisimo, graduate student from University of Buenos Aires, Argentina

- Yves Vanaubel, graduate student from Montefiore Institute, Belgium

Funding Sources

- NSF grant (CNS-1345286) Named Data Networking Next Phase

- NSF grant (NSF CNS-1423659) HIJACKS: Detecting and Characterizing Internet Traffic Interception based on BGP Hijacking

- NSF grant (NSF CNS-1414177) Mapping Interconnection in the Internet: Colocation, Connectivity and Congestion

- DHS S&T Cooperative Agreement (DHS FA8750-12-2-0326) Supporting Research and Development of Security Technologies through Network and Security Data Collection

- DHS S&T contract (D15PC00188) Software Systems for Surveying Spoofing Susceptibility

- NSF grant (NSF CNS-1513283) Internet Laboratory for Empirical Network Science: Next Phase

- NSF grant (NSF CNS-1528148) Modeling IPv6 Adoption: A Measurement-driven Computational Approach

- NSF grant (NSF CNS-1513847) Economics of Contractual Arrangements for Internet Interconnections

- DHS S&T contract (HHSP 233201600012C) Science of Internet Security: Technology and Experimental Research

- NSF grant (NSF CNS-1705024) Investigating the Susceptibility of the Internet Topology to Country-level Connectivity Disruption and Manipulation

- NSF grant (NSF OAC-1724853) Integrated Platform for Applied Network Data Analysis (PANDA)

- NSF grant (NSF CNS-1730661) Sustainable Tools for Analysis and Research on Darknet Unsolicited Traffic (STARDUST)

- University Research sponsorship from Cisco Systems, Inc., Comcast, and NTT Corporation.

![[Figure: Archipelago cumulative data capture]](images/ark-ipv4-traceroute_ark-ipv6-traceroute_ark-ipv4-prefix-probing.2018-01-01.size.cdf.png)

![[Figure: UCSD Network Telescope capture]](images/telescope-live.2018-01-01.size.cdf.png)

![[Figure: total request counts statistics for data]](images/users-total.png)

![[Figure: download statistics for CAIDA data]](images/size-total.png)